Machine Learning for Sales: What Works in 2026

74% of organizations invested in AI last year, according to Deloitte, with tech budgets jumping from 8% to 14% of revenue. Yet 85% of AI projects still fail to deliver expected results. That tension - massive spending, mediocre outcomes - is the defining story of machine learning for sales right now. The AI sales tools market hit $3B last year and is growing roughly 13% annually. Money is pouring in. Results aren't keeping pace.

The gap between what ML vendors promise and what sales teams actually get is enormous. Your VP came back from a conference wanting to "add AI to the stack," armed with a vendor deck, a budget number pulled from thin air, and zero clarity on what problem they're solving. We've seen this play out dozens of times. Closing the gap starts with understanding what works, what doesn't, and where to spend first.

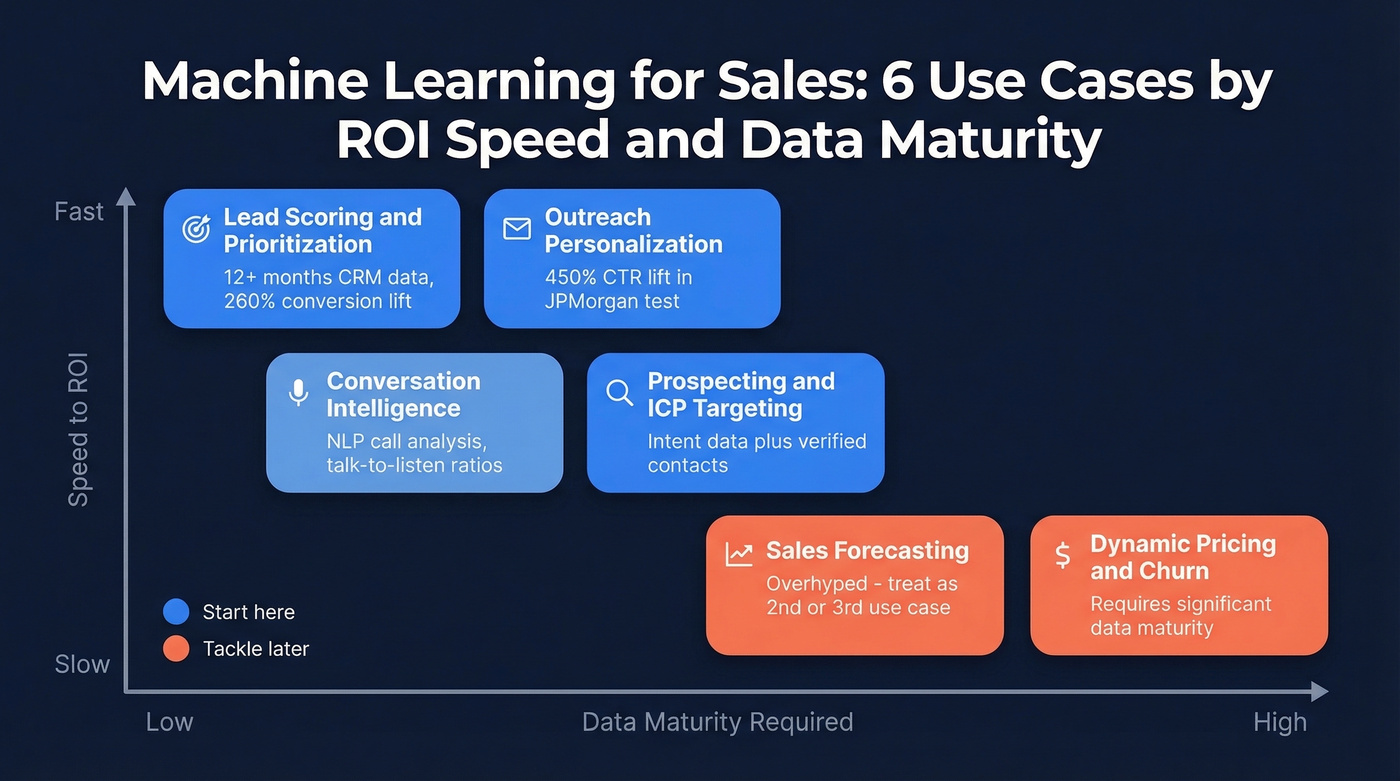

Start Here

Pick one use case. Lead scoring has the fastest ROI and the most proven case studies. Before you buy anything, audit your CRM data - you need 70%+ field completeness across key objects and at least 12-24 months of history with hundreds of labeled outcomes.
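If you'd rather run that audit yourself before talking to vendors, here's a minimal Python sketch. It assumes you've exported deals as a list of dicts with hypothetical `created`, `stage`, and field keys. The 70% completeness and 12-month thresholds come from this guide, and the 300-outcome floor matches the FAQ below; the field and stage names are placeholders for whatever your CRM actually uses.

```python
from datetime import date

# Thresholds from this guide; field/stage names below are hypothetical placeholders.
MIN_COMPLETENESS = 0.70
MIN_MONTHS = 12
MIN_LABELED = 300

def field_completeness(records, fields):
    """Share of (record, field) cells that are filled in."""
    if not records:
        return 0.0
    filled = sum(1 for r in records for f in fields if r.get(f) not in (None, ""))
    return filled / (len(records) * len(fields))

def months_of_history(records, today):
    """Months between the oldest record's creation date and today."""
    if not records:
        return 0
    oldest = min(r["created"] for r in records)
    return (today.year - oldest.year) * 12 + (today.month - oldest.month)

def labeled_outcomes(records):
    """Count deals with a usable win/loss label."""
    return sum(1 for r in records if r.get("stage") in ("closed_won", "closed_lost"))

def readiness_report(records, fields, today):
    report = {
        "completeness": field_completeness(records, fields),
        "months": months_of_history(records, today),
        "labeled": labeled_outcomes(records),
    }
    report["ready"] = (
        report["completeness"] >= MIN_COMPLETENESS
        and report["months"] >= MIN_MONTHS
        and report["labeled"] >= MIN_LABELED
    )
    return report

deals = [
    {"created": date(2024, 1, 15), "stage": "closed_won", "industry": "SaaS", "amount": 12000},
    {"created": date(2025, 3, 2), "stage": "closed_lost", "industry": "", "amount": 8000},
    {"created": date(2025, 9, 9), "stage": "open", "industry": "Retail", "amount": None},
]
print(readiness_report(deals, ["industry", "amount"], today=date(2026, 1, 1)))
# ready is False: completeness is ~0.67 and only 2 outcomes are labeled
```

Checking all three conditions together matters: two years of history doesn't help if most records are half empty.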

Budget $45-550/user/month for CRM AI, plus $10-400/user/month for conversation, sequencing, and forecasting tools depending on your stack. And if your contact data's bounce rate is above 5%, fix that before touching any ML tool. Clean data is the prerequisite. Everything else is optional.

What ML in Sales Actually Means

Vendors blur three distinct things together. Rules-based automation is a fixed instruction like "if deal stage = proposal and no activity in 14 days, send alert." That's not ML. A true ML model learns from your historical data - which deals closed, which didn't, what patterns preceded each outcome - and makes predictions that improve over time. Generative AI is the layer that writes your emails and summarizes calls.

Sales is a strong domain for predictive models because the outcomes are binary and measurable: closed-won or closed-lost. Every CRM already holds thousands of these labeled outcomes. The feedback loop - data flows in, the model spots patterns, makes predictions, outcomes get recorded, the model retrains - is what makes predictive analytics practical in sales, even though it often struggles in fuzzier domains.
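To make the rule-versus-model distinction and the feedback loop concrete, here's a toy Python sketch. The `stale_deal_alert` rule mirrors the example above and never changes. `SegmentWinRate` is a hypothetical, deliberately tiny "learned" model: it estimates win rate per (stage, source) segment and re-estimates it every time new outcomes are recorded. Real vendor models use far more features; this only illustrates the loop.

```python
from collections import defaultdict

def stale_deal_alert(deal):
    """Rules-based automation: a fixed threshold that never learns."""
    return deal["stage"] == "proposal" and deal["days_since_activity"] >= 14

class SegmentWinRate:
    """Hypothetical minimal model: win rate per (stage, source) segment,
    recomputed from all recorded outcomes on every retrain."""

    def __init__(self):
        self.outcomes = []  # (segment, won) pairs recorded over time
        self.rates = {}

    def record(self, deal, won):
        self.outcomes.append(((deal["stage"], deal["source"]), won))

    def retrain(self):
        wins, totals = defaultdict(int), defaultdict(int)
        for segment, won in self.outcomes:
            totals[segment] += 1
            wins[segment] += int(won)
        # Laplace smoothing pulls thin segments toward 50% instead of 0% or 100%.
        self.rates = {s: (wins[s] + 1) / (totals[s] + 2) for s in totals}

    def predict(self, deal):
        # Segments the model has never seen fall back to a coin flip.
        return self.rates.get((deal["stage"], deal["source"]), 0.5)

model = SegmentWinRate()
for won in [True, True, True, False]:
    model.record({"stage": "proposal", "source": "referral"}, won)
model.retrain()
print(model.predict({"stage": "proposal", "source": "referral"}))  # (3 + 1) / (4 + 2)
```

Each new batch of closed deals changes the estimates after the next `retrain()`, which is exactly what the fixed rule can't do.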

How Sales Teams Use ML Today

Lead Scoring & Prioritization

Use this if you've got 12+ months of CRM data with clear win/loss labels and your reps waste time on leads that never convert. U.S. Bank deployed ML-based lead scoring and saw a 260% lift in lead conversion rates with a 35% shorter sales cycle. Grammarly used Salesforce Einstein to push conversions up 80% on upgraded plans and compressed their sales cycle from 60-90 days down to 30. HES FinTech built a scoring model from three years of HubSpot data and processed 40% more loans per week. Across HubSpot's customer base, predictive lead scoring lifted lead-to-customer rates by 30% and pushed SQL accuracy from 55% to 85%.
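The scoring models behind these tools are proprietary, but the core idea can be sketched with logistic regression trained on closed-won/closed-lost labels. This stdlib-only Python illustration assumes three hypothetical binary features (visited the pricing page, company size in ICP range, replied to an email) and a handful of made-up leads; in practice you'd use a library such as scikit-learn and hundreds of labeled outcomes.

```python
import math

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def train_logistic(X, y, lr=0.1, epochs=2000):
    """Batch gradient descent on log-loss; returns (weights, bias)."""
    n_features = len(X[0])
    w, b = [0.0] * n_features, 0.0
    for _ in range(epochs):
        grad_w, grad_b = [0.0] * n_features, 0.0
        for xi, yi in zip(X, y):
            err = sigmoid(sum(wj * xj for wj, xj in zip(w, xi)) + b) - yi
            for j in range(n_features):
                grad_w[j] += err * xi[j]
            grad_b += err
        w = [wj - lr * gj / len(X) for wj, gj in zip(w, grad_w)]
        b -= lr * grad_b / len(X)
    return w, b

def score(lead, w, b):
    """Predicted probability that this lead converts."""
    return sigmoid(sum(wj * xj for wj, xj in zip(w, lead)) + b)

# Hypothetical features: [visited_pricing, icp_size_match, replied]; label 1 = closed-won.
X = [[1, 1, 1], [1, 1, 0], [0, 1, 1], [1, 0, 0], [0, 0, 0], [0, 1, 0], [0, 0, 1], [1, 1, 1]]
y = [1, 1, 1, 0, 0, 0, 0, 1]
w, b = train_logistic(X, y)
print(round(score([1, 1, 1], w, b), 2), round(score([0, 0, 0], w, b), 2))
```

Logistic regression is a reasonable mental model here because its weights are inspectable, which helps with the rep-trust problem covered later.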

Skip this if your CRM has fewer than a few hundred closed-won records or your data hygiene is a mess. The model will learn the wrong patterns and you'll trust scores that are essentially random.

Sales Forecasting

Here's the thing about forecasting: it's the most overhyped ML use case in sales. Salesforce Einstein users report 42% better forecast accuracy and 26% higher win rates, which sounds incredible. But the practitioner skepticism on r/SalesOperations is real - people openly question whether ML forecasting produces better projections or is just vendor hype dressed up in dashboards. Every forecasting vendor claims "up to 95% accuracy." Ask them for the methodology. Ask them what happens when your CRM data is 60% complete.

Forecastio walks through the model taxonomy - regression, ARIMA, Prophet, neural networks - but the gap between academic accuracy and real-world pipeline messiness is where most implementations die. We'd recommend treating forecasting as a second or third use case, not your starting point.
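One way to pressure-test any "up to 95% accuracy" claim is a walk-forward backtest on your own history: forecast each past quarter using only the quarters before it, then score the misses with mean absolute percentage error (MAPE). The sketch below assumes hypothetical quarterly bookings and uses two naive baselines; vendors define "accuracy" in different ways, so treating it as 1 - MAPE is an assumption. If a vendor's model can't beat "next quarter equals last quarter," it isn't earning its price.

```python
def mape(actuals, forecasts):
    """Mean absolute percentage error; assumes no actual value is zero."""
    return sum(abs(a - f) / abs(a) for a, f in zip(actuals, forecasts)) / len(actuals)

def naive_last_quarter(history):
    """Baseline: next quarter equals last quarter."""
    return history[-1]

def moving_average(history, window=2):
    """Slightly smarter baseline: mean of the last `window` quarters."""
    recent = history[-window:]
    return sum(recent) / len(recent)

def walk_forward_mape(history, forecaster, start=2):
    """Forecast each quarter from only the quarters before it, then score."""
    actuals, preds = [], []
    for t in range(start, len(history)):
        preds.append(forecaster(history[:t]))
        actuals.append(history[t])
    return mape(actuals, preds)

# Hypothetical quarterly bookings in $M.
bookings = [1.00, 1.10, 1.05, 1.20, 1.25, 1.30]
for name, fn in [("naive", naive_last_quarter), ("moving avg", moving_average)]:
    print(name, round(walk_forward_mape(bookings, fn), 3))
```

Run the vendor's model through the same loop on the same quarters and compare the numbers side by side.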

Outreach Personalization

AI-powered personalization goes beyond "Hi {first_name}." JPMorgan ran an experiment where AI-generated marketing copy outperformed human-written versions by 450% on click-through rates. That's a marketing case, but the principle applies directly to sales outreach: models trained on your historical engagement data identify which messaging angles, send times, and content formats drive replies for specific personas. Outreach and Salesloft now bake this into their sequencing engines.
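The engines inside Outreach and Salesloft aren't public, but the underlying question, which angle earns replies from which persona, starts with simple aggregation over your own send history. This Python sketch assumes hypothetical `(persona, angle, replied)` records and an arbitrary 50-send minimum before a rate is trusted; it's descriptive analysis, not a trained model.

```python
from collections import defaultdict

def best_angle_by_persona(sends, min_sends=50):
    """sends: (persona, angle, replied) tuples from past sequences.
    Returns {persona: (best_angle, reply_rate)}, skipping thin cells."""
    stats = defaultdict(lambda: [0, 0])  # (persona, angle) -> [replies, sends]
    for persona, angle, replied in sends:
        cell = stats[(persona, angle)]
        cell[0] += int(replied)
        cell[1] += 1
    best = {}
    for (persona, angle), (replies, n) in stats.items():
        if n < min_sends:
            continue  # too few sends to trust the rate
        rate = replies / n
        if persona not in best or rate > best[persona][1]:
            best[persona] = (angle, rate)
    return best

# Hypothetical history: 60 sends per VP Sales angle, only 3 CFO sends.
history = (
    [("VP Sales", "roi", True)] * 12 + [("VP Sales", "roi", False)] * 48
    + [("VP Sales", "pain", True)] * 6 + [("VP Sales", "pain", False)] * 54
    + [("CFO", "roi", True)] * 3
)
print(best_angle_by_persona(history))
```

The minimum-send filter is the important design choice: a 3-for-3 reply rate from CFOs looks perfect and means nothing.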

If you want a deeper playbook on what actually moves reply rates, see our guide to AI-powered personalization.

Conversation Intelligence

NLP models analyze recorded sales calls to surface what top performers do differently - talk-to-listen ratios, objection handling patterns, competitor mentions, pricing sensitivity signals. Gong is the category leader at $250-400/user/month. Fathom offers a surprisingly capable free tier for teams that just need transcription and basic insights. For teams doing fewer than 20 calls a week, Fathom is probably all you need.
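Talk-to-listen ratio is one of the simpler metrics these tools report. Gong and Fathom compute it for you, but the arithmetic is easy to sketch: sum speaking time per side from a diarized transcript. This Python example assumes you already have `(speaker, start_sec, end_sec)` segments with a hypothetical `"rep"` label.

```python
def talk_listen_ratio(segments, rep_speaker="rep"):
    """segments: (speaker, start_sec, end_sec) tuples from a diarized call.
    Returns (rep_share, other_share) of total speaking time."""
    rep = sum(end - start for spk, start, end in segments if spk == rep_speaker)
    other = sum(end - start for spk, start, end in segments if spk != rep_speaker)
    total = rep + other
    return (rep / total, other / total) if total else (0.0, 0.0)

call = [("rep", 0, 30), ("prospect", 30, 100), ("rep", 100, 130)]
talk, listen = talk_listen_ratio(call)
print(f"rep talked {talk:.0%}, listened {listen:.0%}")
```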

If your team is still building fundamentals, pair this with tighter phone sales skills and a consistent sales script.

Prospecting & ICP Targeting

This is where ML meets your data layer. Intent data and enrichment signals feed models that identify which accounts are actively researching solutions like yours. The quality of those predictions depends entirely on the quality of your underlying contact data - verified contacts layered with real-time intent give models clean inputs instead of stale, bouncing records.
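Here's a simplified sketch of that dependency in Python. It uses a hand-weighted fit-plus-intent score as a stand-in for a trained model, and it drops any account with no verified contact before ranking. The field names, the `"valid"` status value, and the 0.6/0.4 weights are all hypothetical assumptions.

```python
def prioritize_accounts(accounts, fit_weight=0.6, intent_weight=0.4):
    """accounts: dicts with 'name', 'fit' (0-1), 'intent' (0-1), and 'contacts',
    where each contact has an 'email_status'. Weights are arbitrary assumptions."""
    ranked = []
    for acct in accounts:
        verified = [c for c in acct["contacts"] if c["email_status"] == "valid"]
        if not verified:
            continue  # no reachable contact, so a high score would be wasted
        score = fit_weight * acct["fit"] + intent_weight * acct["intent"]
        ranked.append((round(score, 3), acct["name"], len(verified)))
    return sorted(ranked, reverse=True)

accounts = [
    {"name": "A", "fit": 0.9, "intent": 0.2, "contacts": [{"email_status": "valid"}]},
    {"name": "B", "fit": 0.5, "intent": 0.9, "contacts": [{"email_status": "valid"}]},
    {"name": "C", "fit": 1.0, "intent": 1.0, "contacts": [{"email_status": "invalid"}]},
]
print(prioritize_accounts(accounts))
```

Account C is the perfect-looking target with nobody to email, which is exactly the record that stale data hides.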

Prospeo covers 300M+ professional profiles with 98% email accuracy and tracks intent signals across 15,000 topics via Bombora, refreshing data every 7 days compared to the 6-week industry average. One sales team using verified data saw bounce rates drop from 35% to under 5%, and their AE-sourced pipeline jumped 180%. That kind of data quality is what makes downstream ML models actually work instead of training on noise.

If you're evaluating providers, start with our ranked list of the best B2B databases and the shortlist of verified contact databases.

Dynamic Pricing & Churn

Elasticity modeling finds the price points that maximize revenue without killing conversion. Churn prediction scores existing customers on likelihood to leave based on usage patterns, support tickets, and engagement decay. Both require significant data maturity - clean historical pricing data or detailed product usage telemetry. Most teams tackle these after lead scoring is already working.
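A crude version of elasticity analysis can be sketched from past pricing tests: compute expected revenue per lead at each tested price, and the arc (midpoint) elasticity between neighboring price points. This Python sketch assumes hypothetical test results; real elasticity models control for segment, seasonality, and discounting.

```python
def revenue_per_lead(price_tests):
    """price_tests: {price: (conversions, leads)} from past pricing experiments."""
    return {p: p * conv / leads for p, (conv, leads) in price_tests.items()}

def arc_elasticity(p1, q1, p2, q2):
    """Midpoint price elasticity of demand between two observed points."""
    dq = (q2 - q1) / ((q1 + q2) / 2)
    dp = (p2 - p1) / ((p1 + p2) / 2)
    return dq / dp

# Hypothetical tests: at $100, 30 of 100 leads converted, and so on.
tests = {100: (30, 100), 120: (27, 100), 150: (15, 100)}
rev = revenue_per_lead(tests)
print(max(rev, key=rev.get), rev)
print(arc_elasticity(100, 0.30, 120, 0.27), arc_elasticity(120, 0.27, 150, 0.15))
```

An elasticity with magnitude below 1 (here, $100 to $120) means the price increase raised revenue; above 1 ($120 to $150), conversion losses outweigh the higher price.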

If churn is a priority, use this alongside a practical plan for how to prevent churn.

Most ML models in your sales stack depend on your contact data. Feed them stale records and you're optimizing on noise. Prospeo delivers 300M+ profiles at 98% email accuracy with a 7-day refresh cycle - so your lead scoring, intent signals, and personalization models actually work.

Fix your data layer first. Everything downstream depends on it.

What ML Sales Tools Cost in 2026

The pricing spread is enormous. Here's what you're actually looking at:

| Category | Tool | Price Range | Setup Time | Best For |
|---|---|---|---|---|
| CRM AI | Salesforce Einstein | $200-550/user/mo | 2-3 months | Enterprise orgs |
| CRM AI | HubSpot | $45-150/user/mo | 2-4 weeks | SMB/mid-market |
| Conversation | Gong | $250-400/user/mo | 8-12 weeks | Call coaching |
| Conversation | Fathom | Free-$25/user/mo | Same day | Budget teams |
| Conversation | Fireflies | $10-39/user/mo | Same day | Async meeting notes |
| Forecasting | Clari | $200-300/user/mo | 6-10 weeks | Revenue ops |
| Sequencing | Outreach | $100-165/user/mo | 6-8 weeks | Outbound at scale |
| Sequencing | Salesloft | $100-175/user/mo | 6-8 weeks | Mid-market outbound |
| Prospecting | Prospeo | ~$0.01/email | Instant | Data accuracy |
| Prospecting | Apollo | $49-149/user/mo | ~1 week | All-in-one SMB |
| Prospecting | ZoomInfo | $15K-50K/year | 2-4 weeks | Enterprise data |

Salesforce Einstein can run $200-550/user/month before implementation costs, and a full deployment takes 2-3 months with consultants. The smartest teams start with clean data, which is cheap, before layering on expensive prediction tools. A turbocharger on a car with no oil doesn't make it faster.

If you're comparing categories and vendors, this pairs well with our roundup of best AI sales tools and CRM automation software.

ROI - What to Actually Expect

The most recent large-scale benchmark from Optif.ai, covering 938 B2B companies, found that AI-augmented reps generated 41% higher revenue per rep - $1.75M versus $1.24M - while performing 18% fewer activities per month. ICP targeting precision jumped from 52% to 78%.

On the other side, Gartner projects fewer than 40% of sellers will report AI agents improved their productivity by 2028. Sellers who effectively partner with AI are 3.7x more likely to meet quota, but that "effectively" is doing a lot of heavy lifting.

The gap between these stats comes down largely to implementation quality. Teams that nail data hygiene, pick one use case, and run a disciplined pilot see the 41% lift. Teams that buy an enterprise ML suite, dump it on reps with no training, and expect magic end up in the majority that reports no productivity gain. Same technology, wildly different outcomes.

If your average deal size is under $10K, you probably don't need a $200/user/month ML platform. Start with a $45/user/month CRM tier, clean your data, and use the built-in scoring. You'll capture 80% of the value at 20% of the cost.
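A quick break-even check makes the tradeoff concrete: how many extra closed deals per rep does a tool need to generate in year one just to pay for itself? This Python sketch assumes a hypothetical 10-rep team, a $30K implementation cost for the enterprise option, an $8K average deal, and 80% gross margin; plug in your own numbers.

```python
def extra_deals_per_rep(price_per_user_month, reps, implementation_cost,
                        avg_deal_size, gross_margin=0.8):
    """First-year extra deals each rep must close for the tool to break even.
    gross_margin=0.8 is an assumed default, not a benchmark."""
    first_year_cost = price_per_user_month * reps * 12 + implementation_cost
    margin_per_deal = avg_deal_size * gross_margin
    return first_year_cost / margin_per_deal / reps

# Hypothetical comparison: enterprise platform vs. a built-in CRM tier.
print(extra_deals_per_rep(200, 10, 30000, 8000))  # enterprise platform with consultants
print(extra_deals_per_rep(45, 10, 0, 8000))       # $45/user/month tier, no implementation
```

Break-even is only the floor. The real question is whether your pilot data shows enough lift to clear it comfortably.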

To pressure-test ROI assumptions, map the lift to your account executive KPIs and your sales pipeline management baseline.

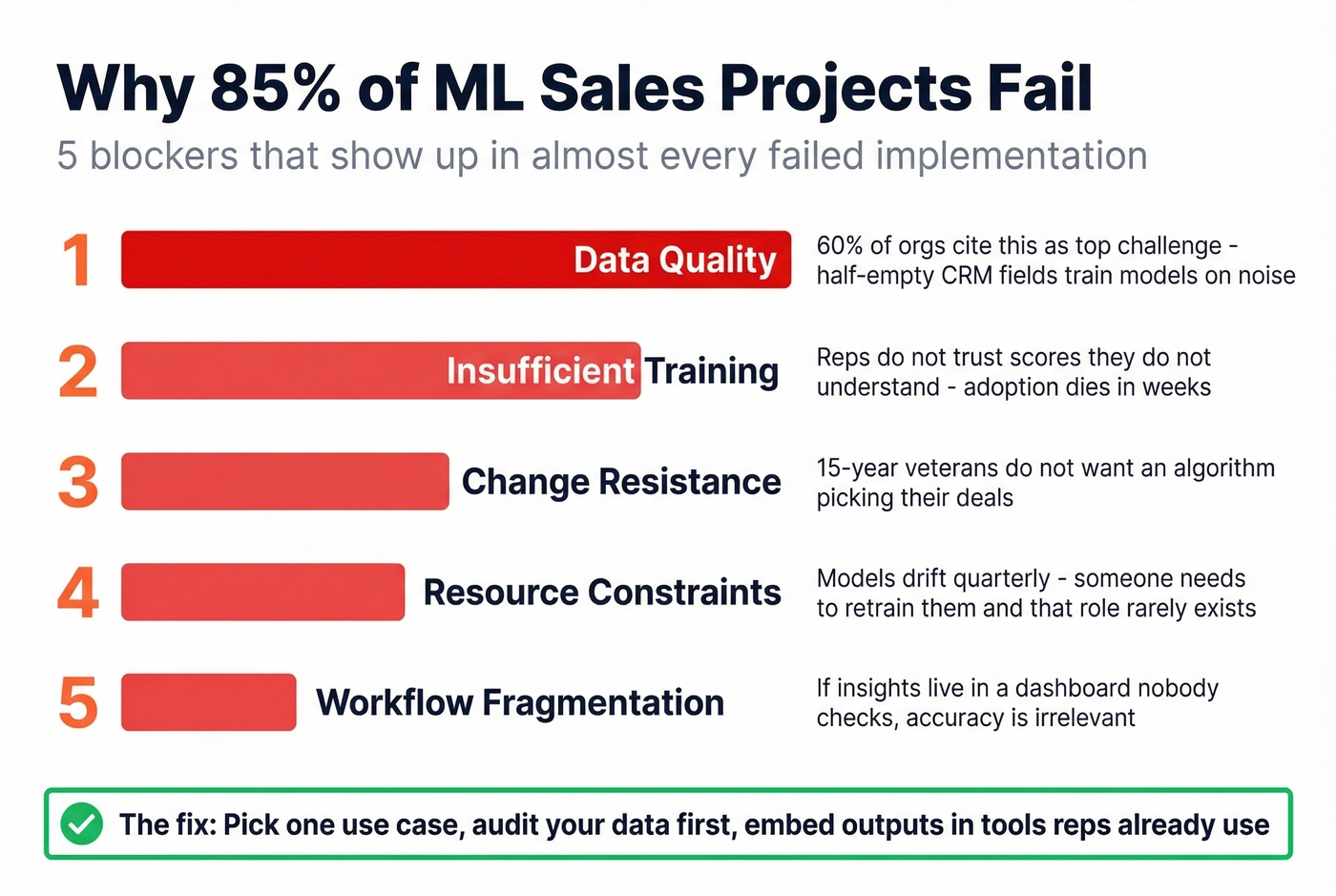

Why Most ML Sales Projects Fail

That 85% failure rate has been consistent for years. The five blockers show up in almost every failed implementation we've seen:

Data quality is the #1 killer. 60% of organizations cite it as their top challenge. Half-empty CRM fields mean the model trains on noise, and the predictions it spits out are confidently wrong - which is worse than having no predictions at all.

Insufficient rep training is a close second. Reps don't trust scores they don't understand. If nobody explains why Lead A scored higher than Lead B, adoption dies within weeks.

Change resistance is human nature. Reps who've been selling for 15 years don't want an algorithm telling them which deals to prioritize, especially when the algorithm can't explain its reasoning in plain language.
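For linear scoring models, a plain-language explanation is cheap to produce: each feature's contribution to the log-odds is simply weight times value, and the largest contributions become the "reasons" shown next to the score. This Python sketch assumes hypothetical feature names and weights; tree-based or neural models need dedicated explanation methods instead.

```python
def explain_score(lead, weights, bias):
    """For a logistic scoring model, each feature adds weight * value to the
    log-odds. Returns (log_odds, reasons sorted by absolute impact)."""
    contributions = {f: weights[f] * lead.get(f, 0) for f in weights}
    log_odds = bias + sum(contributions.values())
    ordered = sorted(contributions.items(), key=lambda kv: abs(kv[1]), reverse=True)
    reasons = [f"{name}: {value:+.2f}" for name, value in ordered if value != 0]
    return log_odds, reasons

# Hypothetical trained weights.
weights = {"visited_pricing": 1.2, "icp_industry": 0.8, "competitor_customer": -0.5}
lead = {"visited_pricing": 1, "competitor_customer": 1}
print(explain_score(lead, weights, bias=-1.0))
```

A rep who sees "visited pricing: +1.20, already a competitor customer: -0.50" can argue with the score, which is how trust gets built.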

Resource constraints catch teams off guard. ML isn't set-and-forget. Models drift as your market changes. Someone needs to monitor performance and retrain quarterly, and that person usually doesn't exist in the org chart.
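One common way to monitor drift between retrains is the Population Stability Index (PSI), which compares the distribution of model scores at training time with the distribution today. This Python sketch assumes scores in [0, 1] and 10 equal-width bins; the usual rule of thumb (below 0.1 stable, 0.1-0.25 worth watching, above 0.25 likely drifted) is a convention, not a guarantee.

```python
import math

def psi(expected, actual, bins=10, eps=1e-6):
    """Population Stability Index between two samples of scores in [0, 1]."""
    def shares(scores):
        counts = [0] * bins
        for s in scores:
            counts[min(int(s * bins), bins - 1)] += 1  # clamp a score of 1.0
        return [max(c / len(scores), eps) for c in counts]  # eps avoids log(0)
    e, a = shares(expected), shares(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))

baseline = [i / 100 for i in range(100)]            # scores at training time
shifted = [min(0.99, s + 0.3) for s in baseline]    # hypothetical drifted scores
print(round(psi(baseline, baseline), 3), round(psi(baseline, shifted), 3))
```

Whoever owns the model can run this monthly and retrain early when the number jumps, instead of waiting for the quarterly cycle.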

Workflow fragmentation is the silent killer. If the ML output lives in a dashboard nobody checks, it doesn't matter how accurate it is. The insight has to surface inside the tool reps already live in - their CRM, their sequencer, their inbox.

The contact data problem deserves special attention. If 35% of your email addresses bounce, then every ML-driven outbound workflow is optimizing against garbage. Real-time verification catches invalid addresses, spam traps, and catch-all domains before they poison your models. Prospeo's free tier gives you 75 emails/month to test the impact before committing budget.
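The 5% threshold from this guide is easy to enforce as a gate before any ML-driven outbound runs. This Python sketch also includes a loose syntax pre-filter, but that's all it is: real verification (mailbox checks, spam-trap and catch-all detection) needs a dedicated service, and the function names here are hypothetical.

```python
import re

# Loose syntax check only; it cannot detect dead mailboxes, spam traps, or catch-alls.
EMAIL_RE = re.compile(r"^[^@\s]+@[^@\s]+\.[^@\s]+$")

def bounce_rate(sent, bounced):
    return bounced / sent if sent else 0.0

def safe_to_run(sent, bounced, threshold=0.05):
    """True if the last campaign's bounce rate is at or below the 5% threshold."""
    return bounce_rate(sent, bounced) <= threshold

def syntactically_valid(emails):
    return [e for e in emails if EMAIL_RE.match(e)]

print(safe_to_run(1000, 350), safe_to_run(1000, 40))
print(syntactically_valid(["ana@example.com", "bad@", "x y@example.com"]))
```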

If you're troubleshooting deliverability, start with check bounce and then pick a tool from our list of the best email verifier tools.

6-Step Implementation Framework

Stop trying to implement five ML use cases at once. Pick one. Nail it. Expand.

1. Audit your CRM data. You need 70%+ field completeness across key objects and 12-24 months of history with hundreds of labeled outcomes. If you don't have this, step one is backfilling, not buying tools.

2. Fix your contact data quality. Run your database through a verification tool. If bounce rate exceeds 5%, every downstream ML tool is optimizing against garbage. This is the cheapest, highest-impact step you can take.

3. Pick ONE use case. Lead scoring has the fastest, most measurable ROI. Forecasting takes 2-3 quarters to validate. Start where you can prove value in 90 days.

4. Match the tool to your stack. Don't buy Salesforce Einstein if you're on HubSpot. Don't buy Gong if your team does 10 calls a week. Let's be honest - most teams overbuy because the demo looked cool, not because the use case justified the spend.

5. Run a 90-day pilot with a control group. Split your team or territory. Half uses ML-scored leads, half uses the existing process. Measure conversion rate, cycle time, and revenue per rep. No control group means no real proof.

6. Measure, retrain, expand. If the pilot group outperforms, roll out and retrain the model quarterly. Then add a second use case. This iterative approach is how ML delivers compounding returns over time - not the big-bang rollout vendors push during procurement.
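For step 5, one standard way to check whether the pilot group's conversion lift is real rather than noise is a two-proportion z-test. This Python sketch assumes leads were randomly split between groups and that samples are large enough for the normal approximation; the counts are hypothetical.

```python
import math

def two_proportion_z(conv_a, n_a, conv_b, n_b):
    """z-statistic and two-sided p-value for group B's rate vs. group A's."""
    p_a, p_b = conv_a / n_a, conv_b / n_b
    pooled = (conv_a + conv_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (p_b - p_a) / se
    p_value = math.erfc(abs(z) / math.sqrt(2))  # two-sided, normal approximation
    return z, p_value

# Hypothetical 90-day pilot: control converts 40/400, ML-scored group 64/400.
z, p = two_proportion_z(40, 400, 64, 400)
print(f"z = {z:.2f}, p = {p:.4f}")
```

A p-value under 0.05 is the conventional bar; if you don't clear it, extend the pilot rather than declaring victory.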

Teams using verified data saw bounce rates drop from 35% to under 5% and AE-sourced pipeline jump 180%. At $0.01 per email with 15,000 intent topics built in, Prospeo gives your ML tools the clean, enriched inputs they need to deliver real ROI - not dashboard theater.

Stop training your models on garbage. Start with data that's 98% accurate.

FAQ

What's the Difference Between AI and ML in Sales?

AI is the umbrella covering any system that mimics human intelligence. ML is the subset that learns from historical data to make predictions - like deal close probability or lead quality scores. Most "AI sales tools" run ML models trained on your CRM data under the hood. When a vendor says "AI-powered," ask which model type they're using and what data it trains on. If they can't answer, it's probably rules-based automation with a marketing label.

How Much Historical Data Do I Need?

Minimum 12-24 months of CRM history with hundreds of labeled closed-won and closed-lost outcomes. The more data points per record - activities, emails, call logs - the better the model performs. Fewer than 300 labeled outcomes and most models produce unreliable scores.

Can Small Teams Use ML?

Yes. You don't need a data science department. HubSpot ($45/user/mo) and Apollo ($49/user/mo) both ship pre-built scoring models out of the box. Pair either one with verified contact data at ~$0.01/email, and a 5-person team can run a credible ML-scored outbound motion for under $100/rep/month.

How Long Before I See ROI?

Expect 90 days for a properly scoped lead scoring pilot. Forecasting takes 2-3 quarters to validate. Conversation intelligence shows qualitative value within weeks but takes a full quarter to measure revenue impact.

What's the Minimum Budget to Start?

As low as $45/user/month with HubSpot's built-in AI features. A realistic starter stack - CRM AI plus verified prospecting data - runs $50-200/user/month total. Enterprise stacks with Salesforce Einstein, Gong, and Clari can exceed $1,000/user/month.