MEDDPICC Metrics: Quantify Pain, Build the Business Case, and Kill Bad Deals

It's Thursday afternoon. Pipeline review. The rep says "metrics are solid" and the manager nods. Nobody asks what the number actually is. Nobody can name one. The deal sits at Stage 3 for another two weeks, and everyone pretends that's fine.

This is the MEDDPICC metrics problem. 73% of SaaS companies selling above $100K ARR run some version of the framework, yet most implementations decay within 12 months because Metrics - the most important letter in the acronym - gets reduced to a text field nobody reads. Fully adopted MEDDPICC correlates with 18% higher win rates, and Metrics is the element doing the heaviest lifting. For context on when MEDDPICC makes sense over MEDDIC (generally ACV >$50K, cycles >90 days), our breakdown covers the thresholds.

Here's the contrarian take most sales orgs miss: Metrics isn't just about building the business case. It's your fastest tool for killing deals that will never close. No quantified pain? No baseline after two discovery calls? Walk away. The best reps use Metrics to disqualify, not just to justify.

What "Metrics" Means in MEDDPICC

MEDDPICC Metrics are the quantified measures of your buyer's pain and the value your solution delivers. Not your internal activity KPIs. Not your pipeline velocity. The buyer's numbers.

Your team's call volume and email open rates are activity metrics - totally different animal. The "M" in the framework refers to the buyer's revenue gap, cost overrun, or churn rate. MEDDIC Academy puts it bluntly: metrics are the language of rational decision-making. Without them, you're asking an Economic Buyer to approve a purchase on vibes. (If you need a refresher on how to run the rest of the framework, start with MEDDIC sales qualification.)

If your CRM field says "improve efficiency," you don't have metrics. You have a wish. Real metrics have a baseline, a target, and a dollar sign.

Three Types That Matter

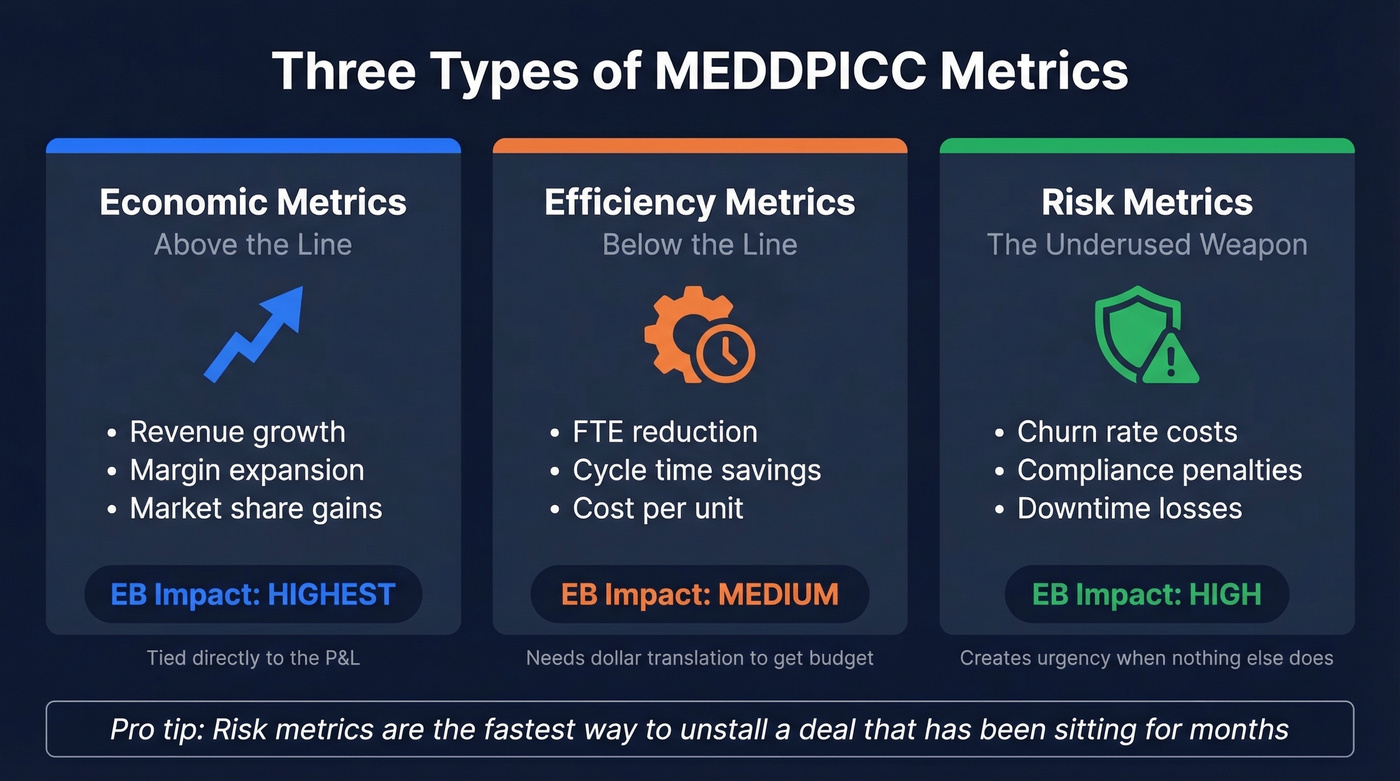

Not all metrics carry equal weight with the people who sign checks. Three types come up in discovery, and Economic Buyers weigh them very differently.

| Type | Examples | Economic Buyer (EB) impact |
|---|---|---|
| Economic (above the line) | Revenue growth, margin, market share | Highest - tied to P&L |
| Efficiency (below the line) | FTE reduction, cycle time, cost/unit | Medium - needs $ translation |
| Risk | Churn rate, compliance fines, downtime | High when quantified |

Economic metrics sit above the line and speak directly to the P&L. They're what Economic Buyers care about most. Efficiency metrics like FTE avoidance and cycle time reduction are easier to calculate but harder to get budget for - they require translation into dollars before an EB takes them seriously. (This is also where teams confuse buyer value with internal sales operations metrics.)

Risk metrics are the underused weapon. Compliance penalties, churn costs, downtime - these create urgency when nothing else does. We've seen deals stalled for months suddenly accelerate when someone quantified the cost of a compliance gap. In healthcare, the metric might be readmission rate reduction; in manufacturing, OEE improvement or scrap rate. If you're not surfacing risk metrics in discovery, you're missing the one type that actually moves stalled deals. (If churn is the risk, use a simple churn analysis to quantify it.)

The Metric Lifecycle: M1, M2, M3

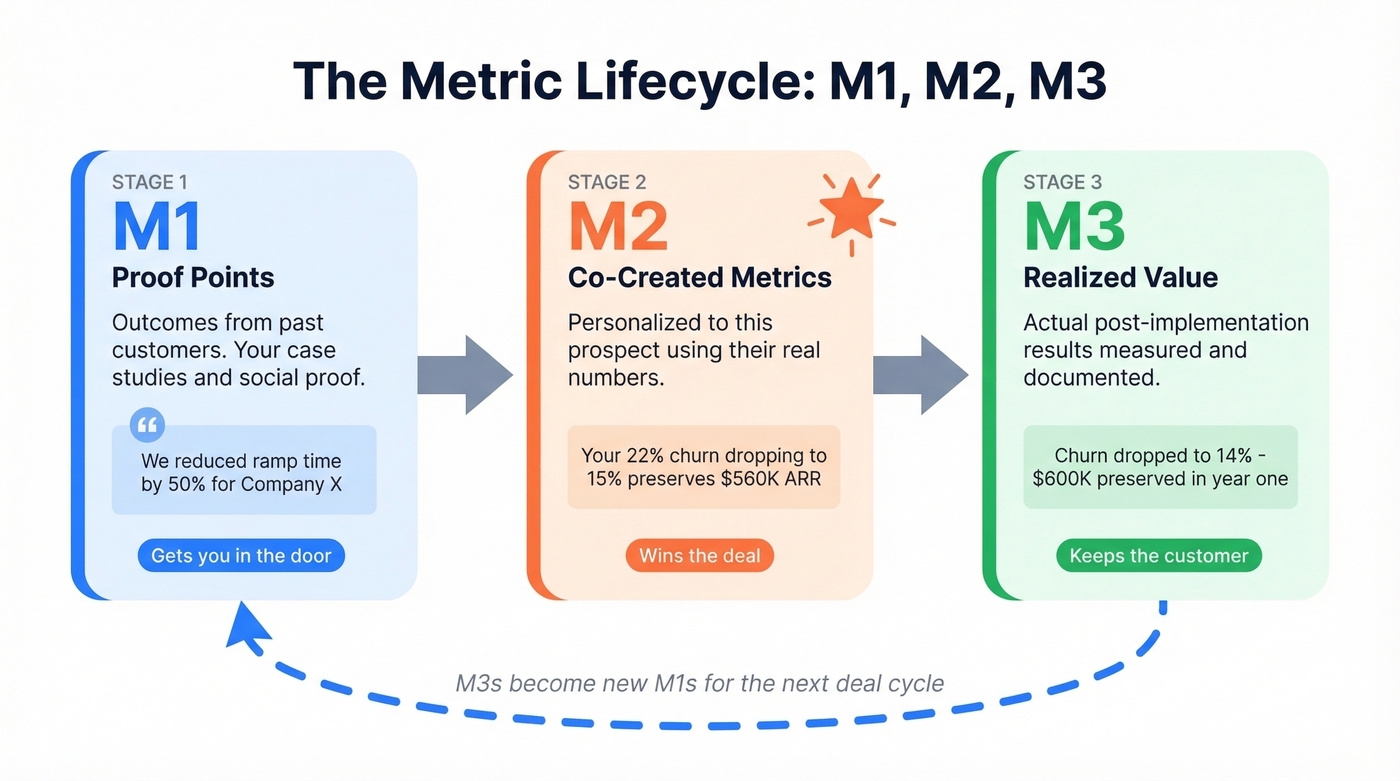

Metrics evolve through a deal and beyond it, following what Cuvama calls the M1/M2/M3 framework.

M1s are proof points - outcomes you've delivered for existing customers. "We reduced ramp time by 50% for Company X." These get you in the door: your case studies, your social proof, your reason to take the meeting.

M2s are co-created metrics - personalized to this specific prospect, built during discovery using their actual numbers. A generic "we save companies 30%" is an M1. "Your 22% churn rate dropping to 15% preserves $560K in ARR" is an M2. M2s are where deals are won, and they're the hardest to build because they require the prospect to share real data with you, which only happens when trust is already established.
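Before you put a number like this in front of a prospect, check what revenue base it implies. This is a minimal sketch under three assumptions. First, churn is annual revenue churn measured against current ARR. Second, "22% dropping to 15%" is a 7-percentage-point cut. Third, `preserved_arr` and `implied_arr` are hypothetical helpers. `Fraction` keeps the dollar math exact.

```python
from fractions import Fraction


def preserved_arr(arr: int, baseline_churn_pct: int, target_churn_pct: int) -> Fraction:
    """ARR kept per year when annual churn falls from baseline to target.

    Assumes churn is a share of current ARR lost per year and the cut is
    measured in percentage points (baseline - target).
    """
    return arr * Fraction(baseline_churn_pct - target_churn_pct, 100)


def implied_arr(preserved: int, baseline_churn_pct: int, target_churn_pct: int) -> Fraction:
    """Back out the ARR base that a stated 'preserved ARR' figure implies."""
    return Fraction(preserved) / Fraction(baseline_churn_pct - target_churn_pct, 100)


# The M2 from the text: 22% churn dropping to 15% preserves $560K.
base = implied_arr(560_000, 22, 15)
print(base)                           # 8000000
print(preserved_arr(base, 22, 15))    # 560000, round-trips to the claim
```

Under those assumptions, the $560K figure implies an $8M ARR base. If the prospect's real ARR is far from that, rework the M2 before it reaches the Economic Buyer.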

M3s are realized value - what actually happened post-implementation. They feed back into new M1s for the next deal. M1s get you in the door. M2s win the deal. M3s keep the customer and fuel the next cycle. (If you’re building a repeatable process around this, tie it into pipeline health reviews.)

M1 proof points win meetings. But you need real conversations to build them. Prospeo's 98% email accuracy and 30% mobile pickup rate mean your reps actually reach the buyers who validate those metrics - not bounced inboxes and voicemail graveyards.

Stop building business cases on data that bounces.

Worked Metric Math

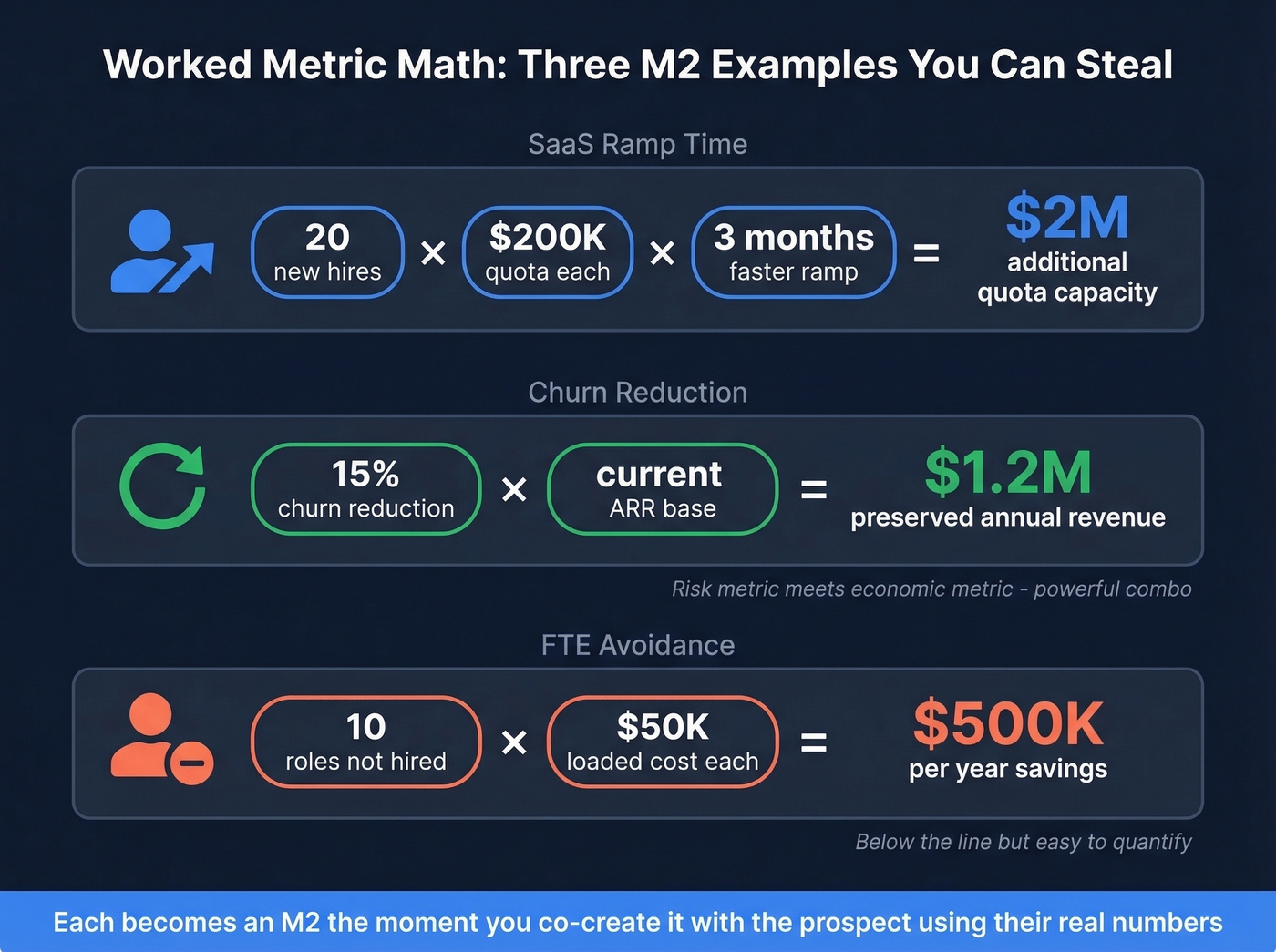

Every M2 follows the same pattern: current state, improvement, dollar impact. Here are three you can steal.

SaaS ramp time. 20 new hires a year x $200K annual quota each x 3/12 of a year (3 months faster ramp) = $1M in additional quota capacity. Ask the prospect: "Does that math track with your numbers?" Now it's their M2, not yours. (If you want a tighter discovery flow to get to these numbers faster, use a discovery call script.)
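The ramp formula is straight-line arithmetic. This is a minimal sketch under three assumptions. Quota accrues evenly across the year, so a rep who ramps 3 months sooner contributes an extra 3/12 of annual quota. Every hire stays the full year. `ramp_capacity_gain` is a hypothetical helper, not part of any tool.

```python
from fractions import Fraction


def ramp_capacity_gain(hires_per_year: int, annual_quota: int, months_faster: int) -> Fraction:
    """Extra quota capacity from ramping new hires faster.

    Assumes quota accrues evenly over 12 months, so each month of faster
    ramp recovers 1/12 of one rep's annual quota, and every hire stays
    the full year.
    """
    return hires_per_year * annual_quota * Fraction(months_faster, 12)


print(ramp_capacity_gain(20, 200_000, 3))  # 1000000
```

Plugging in 6 months instead of 3 doubles the result. That is why the ramp delta is the input most worth confirming with the prospect.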

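Churn reduction. A 15% reduction in churn rate can preserve $1.2M in annual revenue. The exact figure depends on the prospect's ARR base and on whether "15%" means percentage points or a relative cut. Risk metric meets economic metric - that's a powerful combination for getting an EB's attention. (For definitions and formulas, see what is churn.)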
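"15% reduction" can mean two very different numbers, so pin down which one the prospect means. This sketch contrasts the two readings using a hypothetical prospect with $8M ARR and 22% annual churn. Those figures are illustrative, not from a real deal. Under the percentage-point reading the result matches the $1.2M above; the relative reading is far smaller.

```python
from fractions import Fraction


def preserved_if_points(arr: int, churn_cut_points: int) -> Fraction:
    """Churn falls by N percentage points (e.g. 22% -> 7%)."""
    return arr * Fraction(churn_cut_points, 100)


def preserved_if_relative(arr: int, baseline_churn_pct: int, relative_cut_pct: int) -> Fraction:
    """Churn falls by N percent of itself (e.g. 22% -> 18.7%)."""
    return arr * Fraction(baseline_churn_pct, 100) * Fraction(relative_cut_pct, 100)


# Hypothetical prospect: $8M ARR, 22% annual churn.
print(preserved_if_points(8_000_000, 15))        # 1200000
print(preserved_if_relative(8_000_000, 22, 15))  # 264000
```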

FTE avoidance. 10 roles you don't need to hire at $50K loaded cost each = $500K/year in savings. Below-the-line, but easy to quantify and hard to argue with.

Each becomes an M2 the moment you co-create it with the prospect using their real numbers instead of your assumptions.
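One way to make the "co-created" test concrete is to tag every input by where it came from. This is a minimal sketch using the FTE example. `MetricCase`, `Input`, and the "prospect"/"assumed" labels are hypothetical names for illustration, not fields in any CRM.

```python
from dataclasses import dataclass, field
from typing import Dict


@dataclass
class Input:
    value: int
    source: str  # "prospect" = they supplied or confirmed it; "assumed" = your guess


@dataclass
class MetricCase:
    current_state: str
    improvement: str
    inputs: Dict[str, Input] = field(default_factory=dict)

    def is_m2(self) -> bool:
        # Only an M2 once every input is the prospect's own number.
        return bool(self.inputs) and all(
            i.source == "prospect" for i in self.inputs.values()
        )


fte = MetricCase(
    current_state="Plans to hire 10 roles next year",
    improvement="Those roles are no longer needed",
    inputs={
        "roles_avoided": Input(10, "prospect"),
        "loaded_cost": Input(50_000, "assumed"),  # your estimate, not theirs yet
    },
)

savings = fte.inputs["roles_avoided"].value * fte.inputs["loaded_cost"].value
print(savings, fte.is_m2())  # 500000 False

fte.inputs["loaded_cost"].source = "prospect"  # prospect confirms their loaded cost
print(fte.is_m2())  # True
```

The dollar figure is the same $500K either way. What changes is whose number it is.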

Discovery Questions That Surface Real Numbers

Your prospect shouldn't know you're running MEDDPICC. These questions feel like a normal business conversation, not a qualification checklist.

- "How do you measure ROI for projects like this today?"

- "What are your current benchmarks for [the metric they mentioned]?"

- "Can you quantify the financial impact of not solving this in the next 12 months?"

- "If we cut [problem] by X%, what does that mean in dollars for your team?"

- "What specific goals would you measure success against?"

- "What industry-specific KPIs does your board track?"

- "Who needs to see these numbers before a decision gets made?"

Hyperbound's research positions MEDDPICC as an "internal compass" - your prospect experiences a great conversation, not an interrogation. The last question is sneaky-important: it bridges Metrics to the Economic Buyer and Decision Criteria elements of the framework in a single sentence. (If you want more prompts like these, pull from MEDDIC discovery questions.)

Scoring Metrics in Your CRM

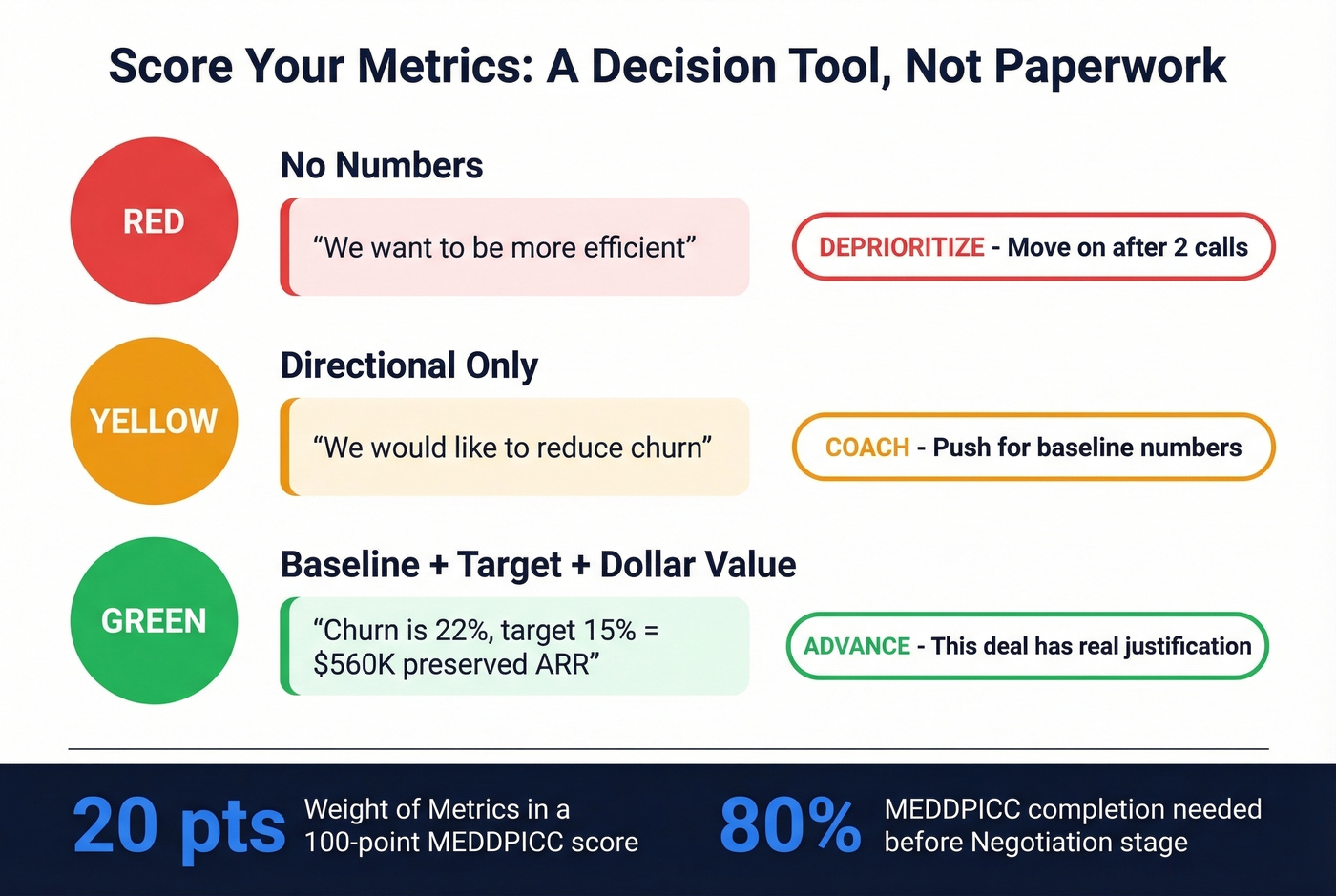

The goal is a decision tool, not paperwork.

| Score | What It Means | Example |
|---|---|---|
| 🔴 Red | No numbers | "We want to be more efficient" |
| 🟡 Yellow | Directional only | "We'd like to reduce churn" |
| 🟢 Green | Baseline + target + $ value | "Churn is 22%, target 15% = $560K preserved ARR" |

A Red score on Metrics isn't a coaching opportunity - it's a signal to deprioritize. If a prospect can't quantify their pain after two discovery calls, they either lack budget authority or they don't have a real problem. Either way, move on. (This is also why teams should separate buyer metrics from internal funnel metrics.)
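The Red/Yellow/Green rubric and the two-call rule are simple enough to encode. This is a minimal sketch that assumes a structured entry instead of a free-text field. `MetricsEntry` and its fields are hypothetical, and so is treating a baseline without a target as Yellow. None of it is a standard scoring model.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class MetricsEntry:
    baseline: Optional[float] = None    # e.g. 0.22 churn today
    target: Optional[float] = None      # e.g. 0.15 churn goal
    dollar_value: Optional[int] = None  # e.g. 560_000 preserved ARR
    direction_stated: bool = False      # buyer named the metric and which way it should move


def score(entry: MetricsEntry) -> str:
    # Green needs all three: baseline, target, and a dollar value.
    if entry.baseline is not None and entry.target is not None and entry.dollar_value is not None:
        return "Green"
    # Any partial quantification or a stated direction counts as Yellow.
    if entry.direction_stated or entry.baseline is not None or entry.target is not None:
        return "Yellow"
    return "Red"


def should_deprioritize(entry: MetricsEntry, discovery_calls: int) -> bool:
    # Still Red after two or more discovery calls: no quantified pain, move on.
    return score(entry) == "Red" and discovery_calls >= 2


print(score(MetricsEntry()))                          # Red
print(score(MetricsEntry(direction_stated=True)))     # Yellow
print(score(MetricsEntry(0.22, 0.15, 560_000)))       # Green
print(should_deprioritize(MetricsEntry(), discovery_calls=2))  # True
```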

Let's be honest about what happens in practice, though. In Salesforce, you create a MEDDIC_Metrics__c long text field on the Opportunity object. DemandFarm's scoring model weights Metrics at 20 out of 100 points. You set stage gates: don't advance to Proposal without 60% MEDDPICC completion, Negotiation without 80%. You put the MEDDIC section above the fold in your Lightning layout because if reps have to scroll to find it, they won't fill it in. (If you’re evaluating tooling, it helps to know the Salesforce pricing tradeoffs too.)
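The stage gates reduce to a threshold check. This is a minimal sketch that assumes "completion" means the unweighted share of the eight MEDDPICC elements a rep has filled in. `ELEMENTS`, `GATES`, and `can_advance` are hypothetical names, not Salesforce fields or a validation rule.

```python
from typing import Set

ELEMENTS = [
    "Metrics", "Economic Buyer", "Decision Criteria", "Decision Process",
    "Paper Process", "Identified Pain", "Champion", "Competition",
]
# Minimum completion required to enter each stage, per the gates above.
GATES = {"Proposal": 0.60, "Negotiation": 0.80}


def completion(filled: Set[str]) -> float:
    """Unweighted share of MEDDPICC elements with a real entry."""
    return len(filled & set(ELEMENTS)) / len(ELEMENTS)


def can_advance(filled: Set[str], next_stage: str) -> bool:
    # Stages without a gate default to a threshold of 0.
    return completion(filled) >= GATES.get(next_stage, 0.0)


five = set(ELEMENTS[:5])
print(completion(five))                  # 0.625
print(can_advance(five, "Proposal"))     # True
print(can_advance(five, "Negotiation"))  # False
```

If your scoring model weights elements (DemandFarm puts Metrics at 20 of 100 points), replace `completion` with a weighted sum over your own weights.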

And then adoption craters anyway. Month 1, fields get created and everyone's excited. Month 3, only 34% are filled. Month 6, reps game the system with "TBD/In Progress." The consensus on r/sales is that MEDDPICC becomes "CRM theater" - reps fill fields to survive reviews, nobody uses them to decide deal state. The fix isn't more enforcement. It's making the scoring useful enough that reps want to fill it in because it helps them kill bad deals before wasting another month on a ghost opportunity. (If you need a practical way to operationalize this, start with sales process optimization.)
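Completion percentages hide the gap between "filled" and "useful." Auditing the field text directly makes that gap visible. This minimal sketch assumes you've exported the `MEDDIC_Metrics__c` values to a list. The placeholder set and the "must contain a number" rule are hypothetical heuristics, not a Salesforce feature.

```python
import re
from typing import List, Optional

# Hypothetical placeholder list; extend it with whatever your reps actually type.
PLACEHOLDERS = {"", "tbd", "in progress", "tbd/in progress", "n/a", "-"}


def is_real_metric(text: Optional[str]) -> bool:
    """Heuristic: not a placeholder and contains at least one number."""
    if text is None:
        return False
    cleaned = text.strip().lower()
    return cleaned not in PLACEHOLDERS and bool(re.search(r"\d", cleaned))


def real_fill_rate(field_values: List[Optional[str]]) -> float:
    """Share of opportunities whose Metrics field holds an actual number."""
    if not field_values:
        return 0.0
    return sum(is_real_metric(v) for v in field_values) / len(field_values)


fields = [
    "Churn 22% -> 15% = $560K preserved ARR",
    "TBD/In Progress",
    "Improve efficiency",
    None,
]
print(real_fill_rate(fields))  # 0.25
```

Reporting this number next to the raw fill rate in pipeline review shows where the field is theater and where it's a decision input.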

MEDDPICC dies when reps spend 4-6 hours a week hunting for contact data instead of running discovery. Prospeo gives your team 300M+ verified profiles with 30+ filters - including buyer intent signals across 15,000 topics - so they spend time co-creating M2 metrics, not scraping LinkedIn.

Give your reps the contacts. Let them do the qualifying.

FAQ

What's the difference between Metrics and Identified Pain?

Pain is the problem; Metrics quantify how much it costs. Pain says "our reps ramp too slowly." Metrics says "slow ramp costs us $2M in annual quota capacity." You need both - Pain creates emotional urgency, Metrics creates the rational business case that gets the Economic Buyer to sign off.

Who should validate the metrics you co-create?

The Economic Buyer. Your Champion co-creates M2 metrics during discovery, but they only become deal-winning when the EB confirms the numbers match their P&L priorities. Without EB validation, your M2 is still an assumption.

What if a prospect can't provide baseline numbers?

Deprioritize the deal. After two discovery calls with no quantified baseline, the prospect either lacks budget authority or doesn't have a real problem. Offer to share industry benchmarks as a starting point - if they still can't engage with the numbers, that's a Red score and a signal to move on.