AI Opportunity Scoring: What It Is, How It Works, and Which Tools Deliver in 2026

95% of companies see zero measurable bottom-line impact from their AI spending, according to MIT research. It's a sharp reminder of why AI opportunity scoring projects fail: the gap between teams that get results and everyone else comes down to data quality and knowing what you're actually buying.

What Is AI Opportunity Scoring?

AI opportunity scoring predicts the probability that an open deal in your CRM will close. Unlike lead scoring, which evaluates pre-pipeline prospects on activity and fit, this approach works on deals already in motion - analyzing CRM history, rep activity, conversation signals, and deal properties to output a win probability.

Quick note for product managers: "opportunity scoring" in your world means prioritizing feature ideas. This article covers sales deal scoring.

The core distinction matters. Basic lead scoring measures activity. Opportunity scoring measures intent and fit within the buying process, learning from your closed-won and closed-lost patterns to dynamically re-weight signals. When paired with a broader opportunity management strategy, these scores become the foundation for pipeline prioritization and coaching decisions.

The Short Version

What it does: Predicts deal close probability using CRM history, activity data, and conversation signals - refreshed daily or every few hours.

Where to start: If you're on HubSpot Sales Hub Professional or Enterprise, try native deal scoring first. It's included. On Salesforce, Einstein scoring depends on your edition and add-ons.

What matters most: The biggest variable isn't the tool - it's whether your CRM data is clean enough to train on. Garbage contact data means garbage scores. Full stop.

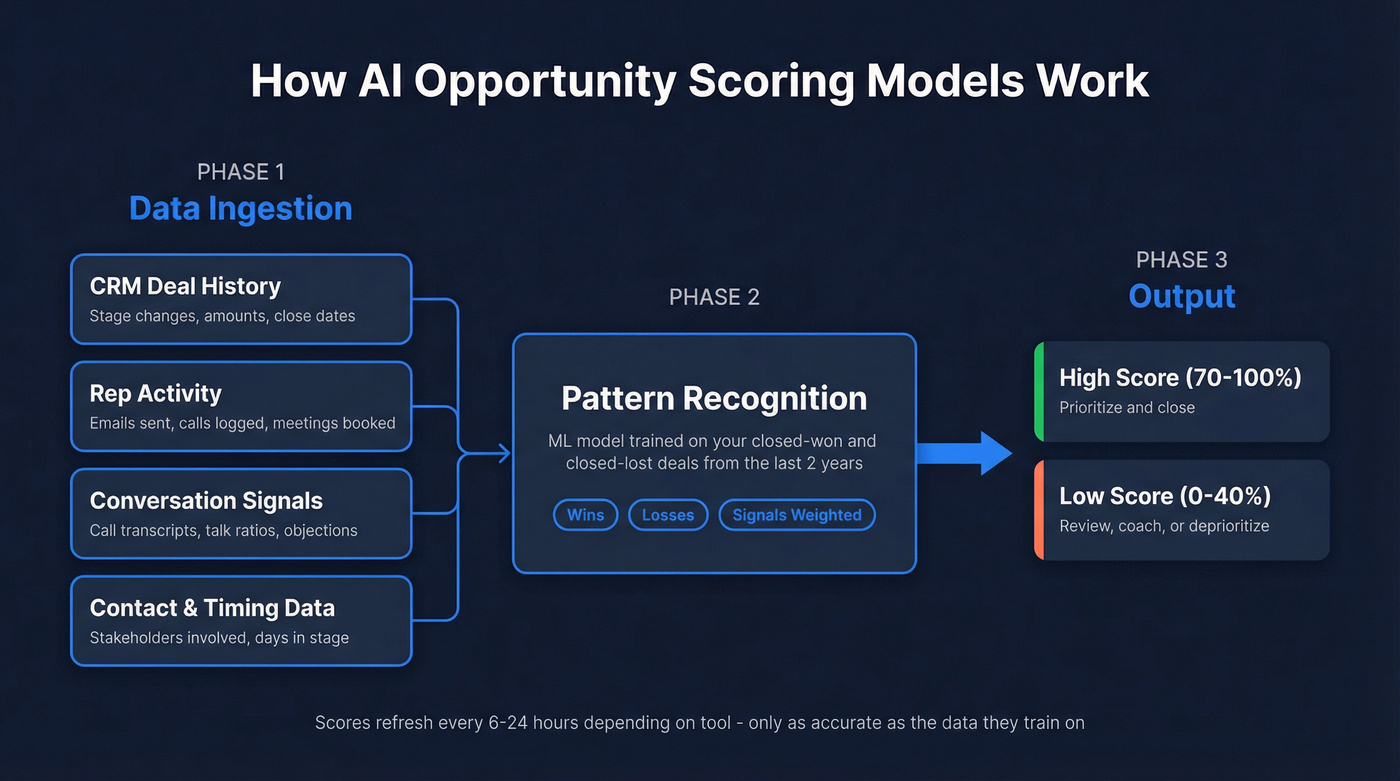

How AI Deal Scoring Models Work

Every scoring tool follows the same basic pattern: ingest historical deal data, identify patterns that distinguish wins from losses, then apply those patterns to open deals. The differences show up in signal weighting and refresh frequency.
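The ingest, learn, apply pattern can be sketched in a few lines of plain Python. This is a minimal illustration, not any vendor's actual model: the three features, their scaling, the toy deal history, and the choice of logistic regression are all assumptions made for the example.

```python
import math

def sigmoid(z: float) -> float:
    return 1.0 / (1.0 + math.exp(-z))

def train(rows, labels, epochs=2000, lr=0.1):
    """Fit logistic-regression weights with plain batch gradient descent."""
    n_features = len(rows[0])
    weights = [0.0] * n_features
    bias = 0.0
    for _ in range(epochs):
        grad_w = [0.0] * n_features
        grad_b = 0.0
        for x, y in zip(rows, labels):
            error = sigmoid(bias + sum(w * xi for w, xi in zip(weights, x))) - y
            for i, xi in enumerate(x):
                grad_w[i] += error * xi
            grad_b += error
        weights = [w - lr * g / len(rows) for w, g in zip(weights, grad_w)]
        bias -= lr * grad_b / len(rows)
    return weights, bias

def win_probability(x, weights, bias):
    """Apply the learned pattern to one open deal."""
    return sigmoid(bias + sum(w * xi for w, xi in zip(weights, x)))

# Hypothetical closed-deal history. Each feature is scaled to 0..1:
# [days since last activity / 30, share of expected stage time used, engaged contacts / 5]
closed_deals = [
    ([0.1, 0.3, 0.8], 1),  # won
    ([0.2, 0.4, 0.6], 1),  # won
    ([0.1, 0.5, 1.0], 1),  # won
    ([0.9, 0.9, 0.2], 0),  # lost
    ([0.8, 1.0, 0.2], 0),  # lost
    ([0.7, 0.8, 0.4], 0),  # lost
]

weights, bias = train([x for x, _ in closed_deals], [y for _, y in closed_deals])

# Score two open deals: one active and multi-threaded, one stalled and single-threaded.
active_score = win_probability([0.1, 0.3, 0.9], weights, bias)
stalled_score = win_probability([0.9, 0.9, 0.2], weights, bias)
print(f"active: {active_score:.2f}, stalled: {stalled_score:.2f}")
```

Commercial tools use far more signals and far more history, but the shape is the same: whatever the model learns comes entirely from the closed deals you feed it.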

HubSpot's deal scores pull from deal amount changes, close date timing, time in stage, activity recency, next step freshness, and owner assignment gaps. New deals get an initial score within 36-48 hours, then update within 6 hours whenever something significant changes beyond a 3% threshold.

Gong takes a fundamentally different approach. Their deal likelihood scores draw 50% of signals from conversation intelligence - what's actually being said on calls - and 50% from activity, contacts, timing, and historical data. The model runs daily with dynamic weights, not static rules. It starts with a pre-trained base model built on billions of interactions, then customizes using your last two years of closed deals.

Clari trains on two years of CRM opportunity history plus conversation and meeting data. Its output includes both a deal score and prescriptive prioritization that maps rep activity against deal health.

Here's the thing: all of these models are only as smart as the data they train on. If your CRM is full of stale contacts, missing activity logs, and inconsistent stage definitions, the model learns from noise.

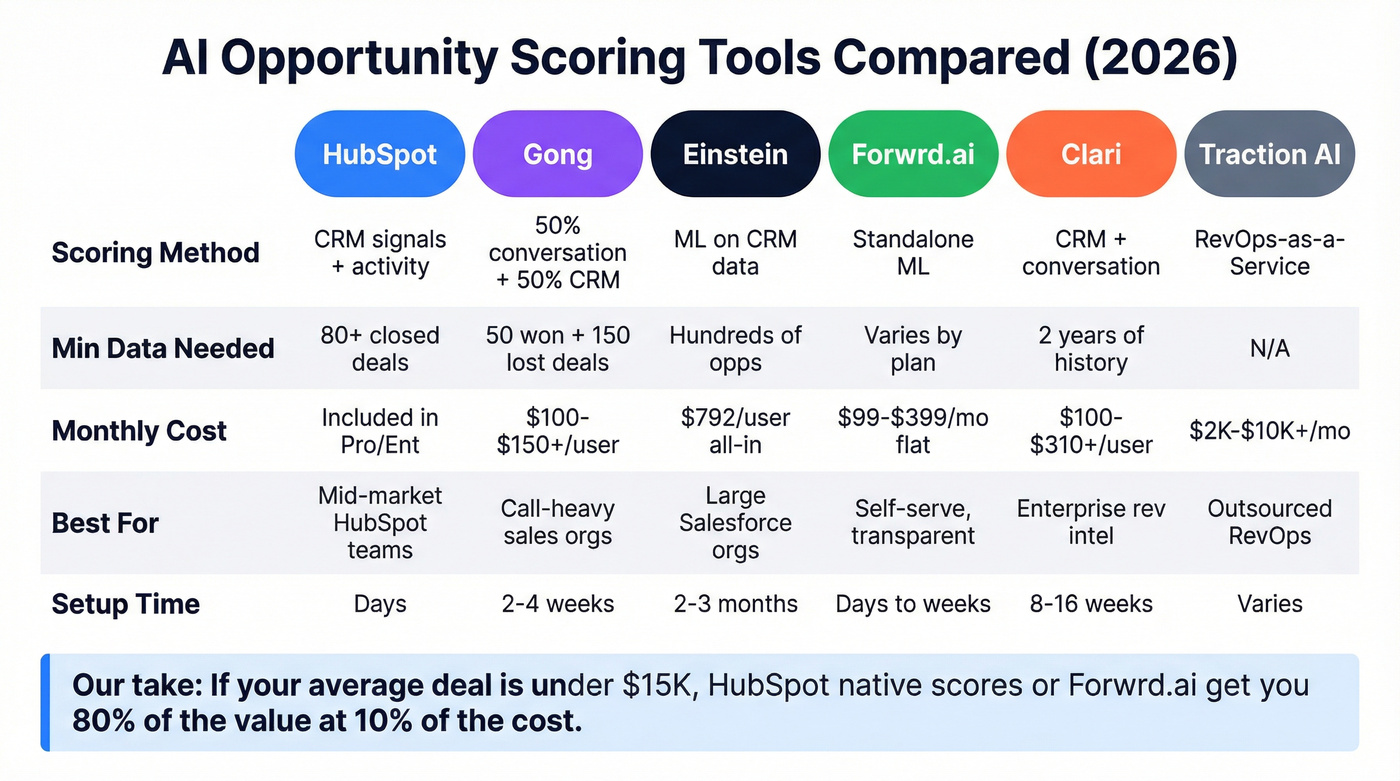

Top Scoring Tools Compared

| Tool | Scoring Method | Min Data Needed | Pricing | Best For |
|---|---|---|---|---|
| HubSpot | CRM signals + activity | ~80+ closed deals | Included in Sales Hub Pro/Ent | Mid-market teams on HubSpot |
| Gong | 50/50 conversation + CRM | 50 won / 150 lost deals | ~$100-$150+/user/mo | Conversation-heavy sales orgs |
| Einstein | ML on CRM data | Hundreds of opps | ~$792/user/mo all-in | Large Salesforce-native orgs |
| Forwrd.ai | Standalone ML scoring | Varies by plan | $99-$399/mo | Transparent, self-serve scoring |
| Clari | CRM + conversation data | 2 years of history | Quote-based (~$100-$310+/user/mo) | Enterprise rev intel |
| Traction AI | RevOps-as-a-Service | N/A | ~$2K-$10K+/mo | Outsourced RevOps |

HubSpot Deal Scores

The obvious starting point if you're already paying for Sales Hub Professional or Enterprise. No extra module, no add-on fee. Scores update within 6 hours of a significant change and surface directly on deal records. The limitation is signal depth - HubSpot only sees what's in HubSpot, with no conversation intelligence or external intent data. For many mid-market teams, that's enough. HubSpot's own documentation warns that scores shouldn't be used as the only factor - treat them as a triage layer, not gospel.

Gong Deal Likelihood

Skip Gong unless your team actually runs calls through it. The 50/50 signal mix between conversation intelligence and CRM data gives Gong a genuine edge - the company reports its scores are 21% more precise than sales reps at predicting wins by week 4 of a quarter. But you need Gong Forecast, not just Gong Pro, and you need at least 50 closed-won and 150 closed-lost deals from the last two years. That's a real barrier for younger companies.

Also worth knowing: scores are less accurate for deals with minimal logged activity or third-party-managed pipeline - a friction point RevOps teams flag regularly on Reddit and in community forums.

Salesforce Einstein

$792/user/month, all-in. That's the estimated cost once you add Data Cloud and professional services, per independent analysis. Implementation cycles stretch 2-3 months. If you're a large Salesforce org with hundreds of historical opportunities and dedicated admins, Einstein earns its keep. For everyone else, it's overkill.

Forwrd.ai

The only vendor here with fully transparent pricing: $99/mo for up to 40,000 monthly predictions, $199/mo for up to 100,000, and $399/mo for 100,000+. It's standalone, so it works across CRMs. In our experience, this is the best option for teams that want predictive deal scoring without buying an entire revenue intelligence platform.

Clari

Enterprise revenue intelligence where scoring is one piece of a broader platform including forecasting, pipeline inspection, and deal analytics. Quote-based pricing; in practice, expect $100-$310+/user/month depending on modules, plus $15K-$75K in professional services. Implementations run 8-16 weeks.

Traction AI

RevOps-as-a-Service rather than a pure software tool. No public pricing - expect a services engagement in the $2K-$10K+/month range based on scope. Worth exploring if you want someone to configure and manage your scoring stack rather than DIY.

Our take: If your average deal size is under $15K, you probably don't need Clari or Einstein-level scoring. HubSpot's native scores or Forwrd.ai will get you 80% of the value at 10% of the cost.
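To sanity-check that cost gap for your own team size, here is a rough calculator built only from the list-price estimates quoted in this article. The figures are approximations, the lower bounds on "+" ranges understate real quotes, and professional services are excluded; the function and dictionary names are hypothetical.

```python
# Monthly price estimates quoted in this article (USD). Real quotes will differ.
PER_USER_MONTHLY = {
    "Gong": (100, 150),
    "Einstein": (792, 792),
    "Clari": (100, 310),
}
FLAT_MONTHLY = {
    "Forwrd.ai": (99, 399),
}

def monthly_cost_range(tool: str, seats: int) -> tuple:
    """Return (low, high) estimated monthly cost for a team of `seats` users."""
    if tool in PER_USER_MONTHLY:
        low, high = PER_USER_MONTHLY[tool]
        return low * seats, high * seats
    return FLAT_MONTHLY[tool]  # flat plans do not scale with seat count

for tool in ("Forwrd.ai", "Gong", "Clari", "Einstein"):
    low, high = monthly_cost_range(tool, seats=10)
    print(f"{tool}: ${low:,}-${high:,}/mo for 10 reps")
```

HubSpot is left out because its scoring is bundled with Sales Hub Professional or Enterprise rather than priced separately.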

Every AI scoring model on this list depends on clean CRM data - and that starts with accurate contacts. Prospeo enriches your pipeline with 98% verified emails and 125M+ mobile numbers, refreshed every 7 days. When your CRM data is right, your opportunity scores actually predict outcomes.

Stop training your scoring model on stale data. Start with contacts you can trust.

Does It Actually Work?

The headline numbers are compelling. Bain & Company found early AI deployments in sales boosted win rates by more than 30%. Gartner reports sellers partnering with AI are 3.7x more likely to meet quota.

But those results come from companies that implemented well. We've seen the realistic range firsthand: expect 10-20% win-rate lift and 10-25% forecast accuracy improvement when scoring is paired with pipeline inspection and coaching. Teams that just enable a feature and walk away? They end up with a dashboard nobody opens.

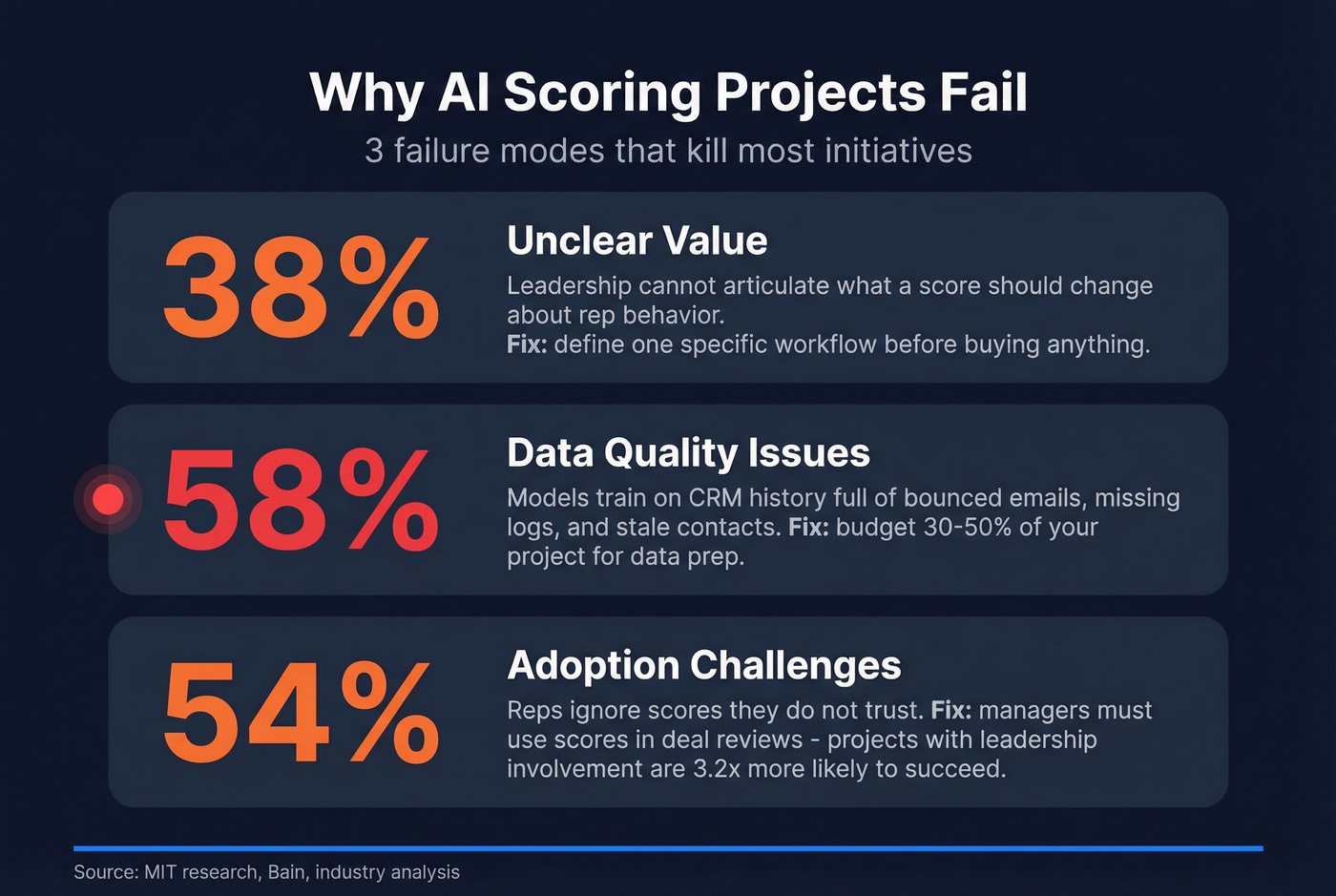

Why Scoring Projects Fail

Three failure modes account for most dead scoring initiatives.

Unclear value. 38% of failed AI projects die here. Leadership can't articulate what a score should change about rep behavior. Fix this before buying anything: define one specific workflow - e.g., "deals below 40% get a mandatory pipeline review."

Data quality issues. This is the big one, affecting 58% of AI projects. Models train on your CRM history, and if that history is full of bounced emails, missing activity logs, and contacts who left the company two years ago, scores are meaningless. Budget 30-50% of your scoring project for data prep. We can't stress this enough - we've watched teams burn months on tool selection only to realize their CRM data was too dirty to produce anything useful.

Adoption challenges. 54% of failed projects stall because reps ignore scores they don't trust. Projects with active leadership involvement are 3.2x more likely to succeed. That means managers using scores in deal reviews, not just enabling a feature.

Get Your CRM Ready First

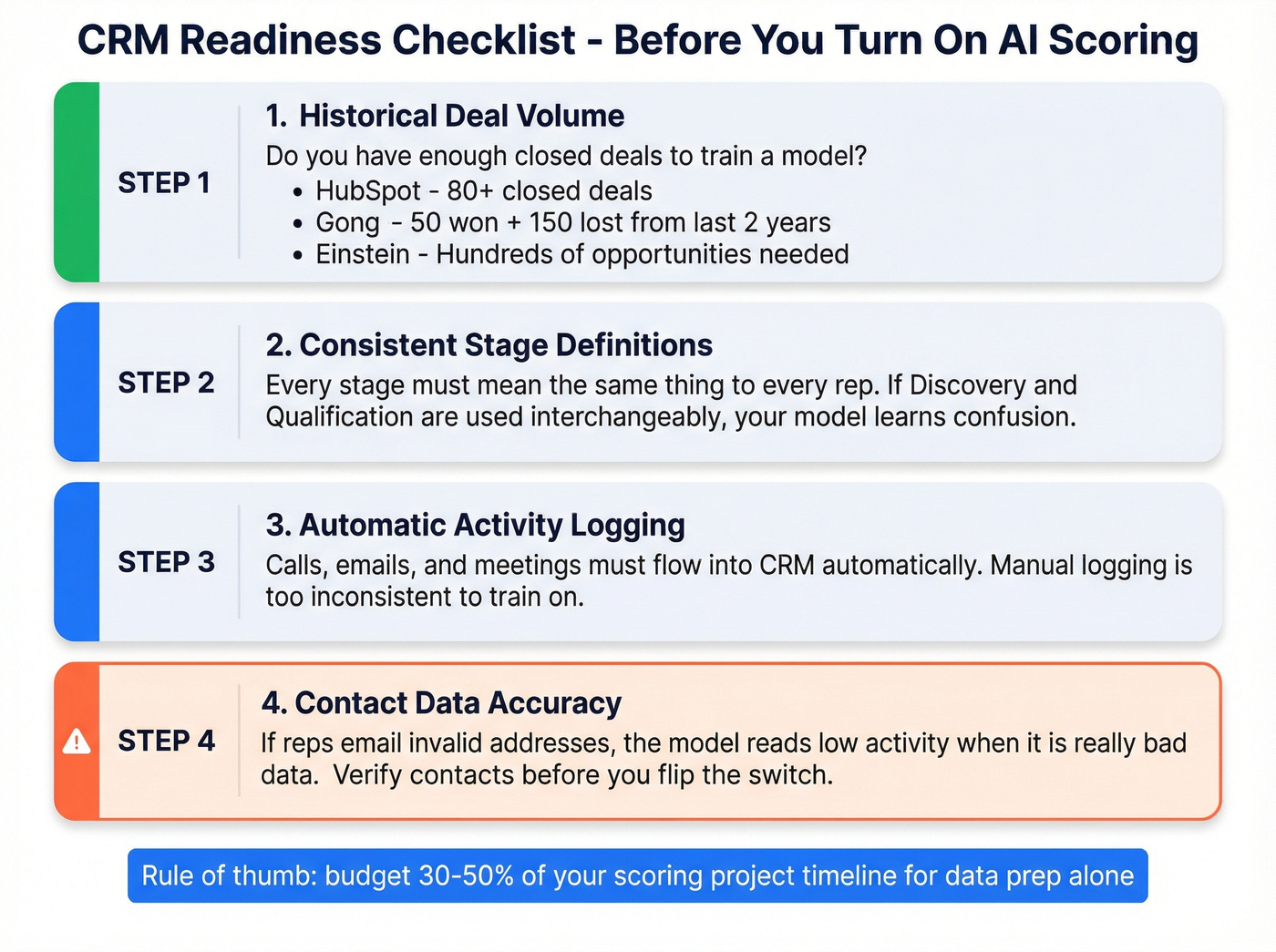

Before you turn on any scoring tool, run through this:

Historical deal volume. Gong needs 50 won + 150 lost deals from the last two years. Einstein needs hundreds. If you don't have the volume, start with HubSpot's lighter-weight approach.

Stage definitions. Every stage must mean the same thing to every rep. If "Discovery" and "Qualification" are used interchangeably, your model learns confusion.

Activity logging. Calls, emails, and meetings need to flow into the CRM automatically. Manual logging is too inconsistent to train on.

Contact data accuracy. Look - we've seen teams activate scoring on dirty CRM data and lose rep trust within two weeks. If reps are emailing invalid addresses, the model reads that as "low activity" when it's really bad data. Your scoring model should train on real engagement patterns, not bounced emails and dead numbers. Verify contacts before you flip the switch. Prospeo's 98% email accuracy on a 7-day refresh cycle is built for exactly this kind of pre-scoring cleanup, and it takes minutes to run through a CRM export.
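Two of the checks above, deal volume and contact accuracy, are easy to run against a CRM export before you commit to a tool. The sketch below assumes a hypothetical export where each closed deal is a dict with `closed_on`, `outcome`, and `email_verified` fields; the thresholds are the Gong figures quoted in this article, so confirm current requirements with your vendor.

```python
from datetime import date, timedelta

# Volume thresholds quoted in this article; confirm current vendor requirements.
MIN_WON = 50
MIN_LOST = 150
LOOKBACK_DAYS = 730  # roughly two years

def readiness_report(deals, today):
    """Count recent closed deals and measure the share with a verified contact email."""
    cutoff = today - timedelta(days=LOOKBACK_DAYS)
    recent = [d for d in deals if d["closed_on"] >= cutoff]
    won = sum(1 for d in recent if d["outcome"] == "won")
    lost = sum(1 for d in recent if d["outcome"] == "lost")
    verified = sum(1 for d in recent if d["email_verified"])
    return {
        "won": won,
        "lost": lost,
        "enough_volume": won >= MIN_WON and lost >= MIN_LOST,
        "verified_share": verified / len(recent) if recent else 0.0,
    }

# Hypothetical export: 60 recent wins, 100 recent losses, 10 wins too old to count.
deals = (
    [{"closed_on": date(2025, 6, 1), "outcome": "won", "email_verified": True}] * 60
    + [{"closed_on": date(2025, 3, 1), "outcome": "lost", "email_verified": False}] * 100
    + [{"closed_on": date(2022, 1, 1), "outcome": "won", "email_verified": True}] * 10
)

report = readiness_report(deals, today=date(2026, 1, 15))
print(report)
```

In this made-up export the team clears the closed-won bar but not the closed-lost bar, and fewer than half of recent deals have a verified contact, so both volume and contact cleanup would come before tool selection.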

You read it above: garbage contact data means garbage scores. Prospeo's CRM enrichment returns 50+ data points per contact at a 92% match rate - for roughly $0.01 per email. That's the foundation every deal scoring tool needs to work.

Clean pipeline data is the cheapest way to make AI scoring actually pay off.

Turning Scores Into Action

A score on a deal record is useless if nobody acts on it.

The real value comes when scores feed specific workflows: flagging at-risk deals for manager review, triggering multi-thread reminders when a single-threaded deal drops below 50%, or surfacing stalled opportunities in weekly pipeline meetings. Let's be honest - most teams that fail at scoring don't have a tool problem, they have a process problem. The best-performing teams we've seen treat AI-generated deal insights as coaching triggers, not just forecast inputs. Every score change becomes a conversation about what to do next, not just a number to report up.
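As one way to wire scores into those workflows, the rules can be written as a small function that maps a deal to follow-up actions. This is a sketch under assumptions: the `Deal` fields and action names are hypothetical, the 40% and 50% thresholds are the examples used in this article, and it checks the current score rather than detecting the moment a score drops.

```python
from dataclasses import dataclass

REVIEW_THRESHOLD = 0.40        # deals below 40% get a mandatory pipeline review
MULTI_THREAD_THRESHOLD = 0.50  # single-threaded deals below 50% get a reminder

@dataclass
class Deal:
    name: str
    win_probability: float  # 0.0-1.0 score from whichever tool you use
    engaged_contacts: int   # buyer-side contacts active on the deal

def actions_for(deal: Deal) -> list:
    """Return the follow-up actions a deal's current score should trigger."""
    actions = []
    if deal.win_probability < REVIEW_THRESHOLD:
        actions.append("manager_review")
    if deal.engaged_contacts <= 1 and deal.win_probability < MULTI_THREAD_THRESHOLD:
        actions.append("multi_thread_reminder")
    return actions

pipeline = [
    Deal("Acme renewal", 0.35, 1),
    Deal("Globex expansion", 0.45, 1),
    Deal("Initech new logo", 0.45, 3),
]
for deal in pipeline:
    print(deal.name, actions_for(deal))
```

Keeping the thresholds as named constants makes the rules easy to review with sales leadership and adjust once you see how often each one fires.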

FAQ

What's the difference between lead scoring and opportunity scoring?

Lead scoring evaluates pre-pipeline prospects on activity and fit. Opportunity scoring predicts close probability for deals already in your pipeline using CRM history, rep activity, and conversation data. Lead scoring decides who enters the funnel; deal scoring decides where reps focus inside it.

How much historical data do I need?

Gong requires 50 closed-won and 150 closed-lost deals from the last two years. Einstein needs hundreds. HubSpot works with fewer but improves with more. Under 80 total closed deals, most models won't produce useful scores - start with rule-based prioritization instead.

Can I use AI scoring with bad CRM data?

Technically yes, but unreliable scores are worse than no scores - they erode rep trust fast. Clean your contact data first so the model learns from real engagement signals, not bounced emails masquerading as low activity.

How does AI opportunity scoring fit into a broader pipeline strategy?

Scoring is one layer of a complete deal management approach. It works best when combined with pipeline inspection, deal analytics, and forecasting tools - giving managers a full picture of deal health rather than relying on rep gut feel alone. Define specific actions tied to score thresholds to drive adoption, like mandatory reviews for any deal that drops below 40%.