Sales Forecasting Challenges That Actually Break Your Number

Your CRM says there are 47 deals in the pipeline worth $1.2M. But 12 of those contacts changed jobs last quarter, eight emails are bouncing, and three "verbal commits" haven't returned a call in six weeks. That pipeline isn't a forecast - it's fiction.

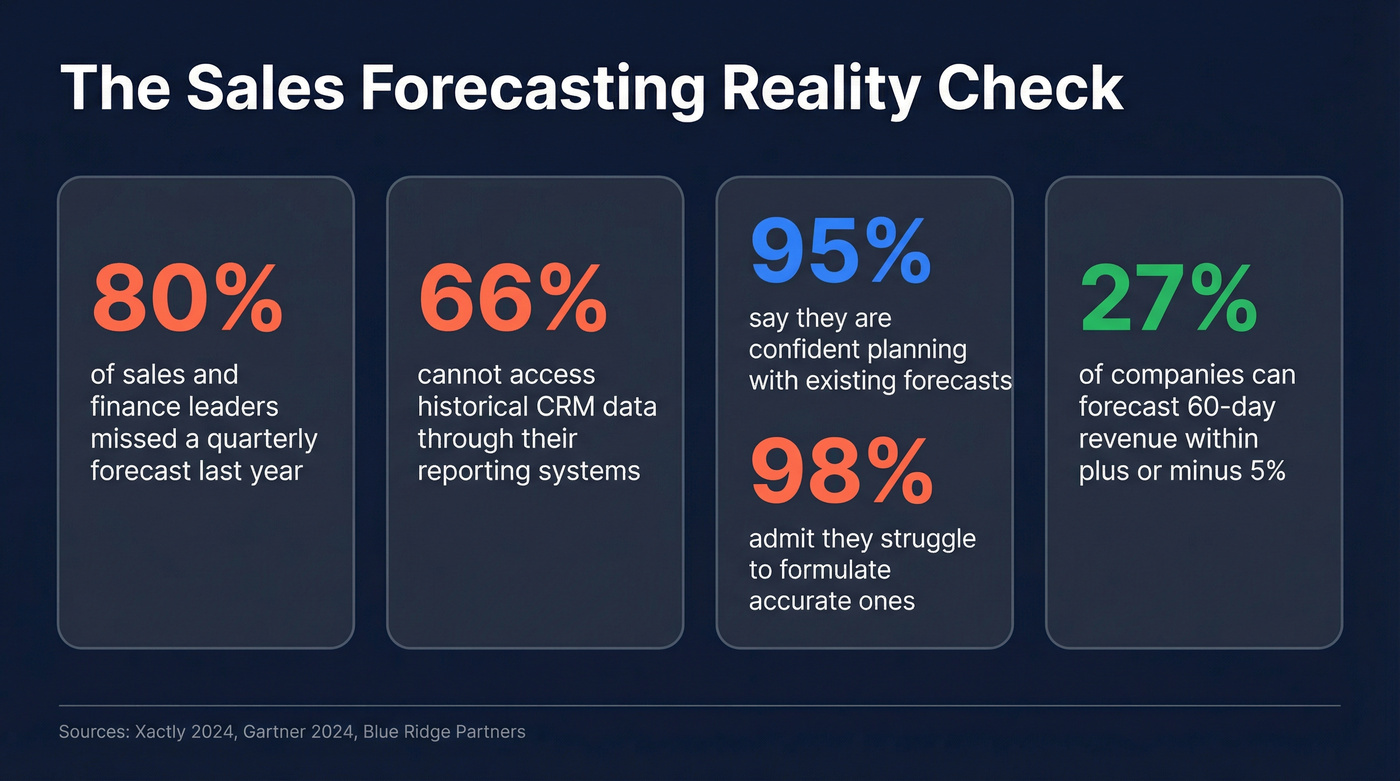

The data confirms it: 4 in 5 sales and finance leaders missed a quarterly forecast in the past year, with over half missing two or more times. These sales forecasting challenges aren't edge cases. They're the norm.

Fix These Three Things First

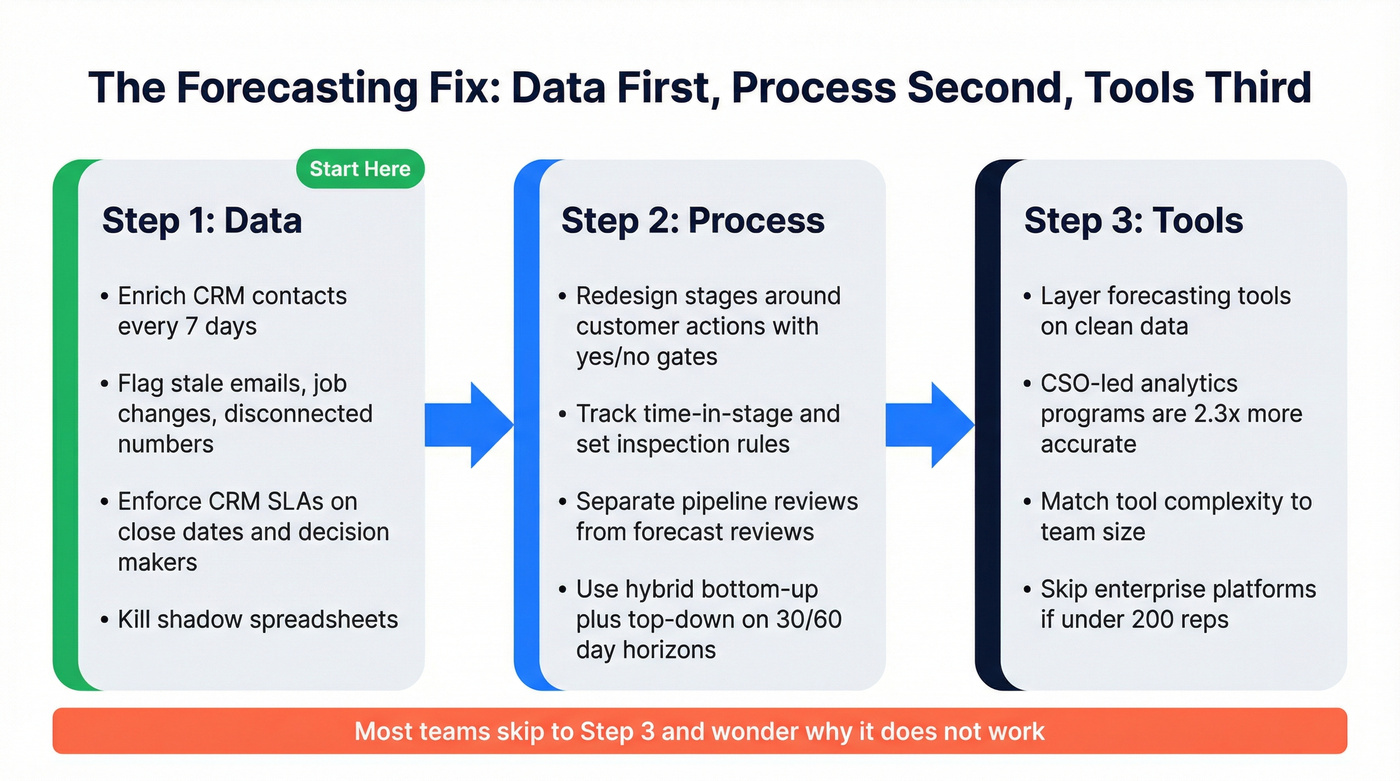

Before you buy another tool or redesign your forecast model, fix these in order:

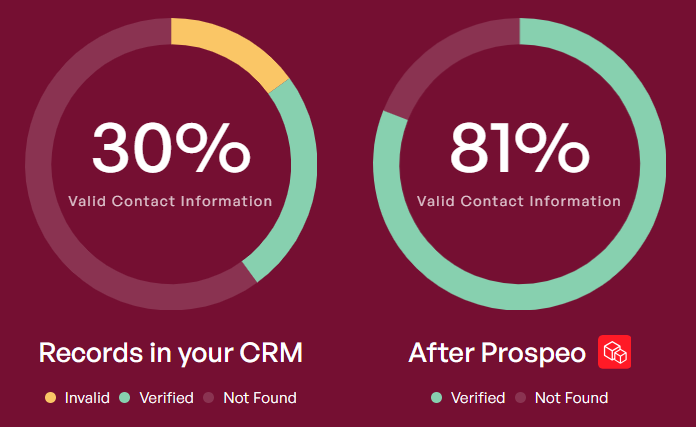

- Clean your pipeline data. Stale contacts, bounced emails, and job changes inflate your pipeline. Fix the inputs before you fix the math. (If you need a starting point, compare data enrichment options first.)

- Redesign pipeline stages around customer actions. "Proposal sent" is a seller activity. "Customer requested pricing" is a buying signal. The difference changes everything. (More on building objective stages in sales process optimization.)

- Separate pipeline reviews from forecast reviews. Combining them incentivizes sandbagging. Split them and you'll get honesty in both. (This is also a core sales operations metrics discipline.)

Only 27% of companies can forecast 60-day revenue within ±5%. These three fixes are how you join that group.

How Bad Is the Problem?

An Xactly survey of 400 revenue leaders found that 66% can't access historical CRM data through their reporting systems - the single most common roadblock to accurate forecasting. Meanwhile, Gartner surveyed 303 sales leaders, and 84% said sales analytics had less influence on performance than leadership expected.

Here's the confidence paradox that should worry every CRO: 95% of leaders express confidence planning with existing forecasts, yet 98% acknowledge struggling to formulate accurate ones. At ±25% variance, a $5M quarter could land anywhere between $3.75M and $6.25M. Understanding why forecasts fail at this rate means looking beyond the spreadsheet and into the process itself.
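The arithmetic behind that range is easy to reproduce for your own number. Here's a minimal Python sketch, assuming a symmetric ±25% miss (the upper end of the ±10-25% variance cited in the FAQ); the function name `forecast_band` is ours, not from any tool.

```python
def forecast_band(forecast: float, variance_pct: float) -> tuple[float, float]:
    """Return the (low, high) range implied by a symmetric +/- variance."""
    spread = forecast * variance_pct / 100
    return forecast - spread, forecast + spread


# Assumption: a $5M forecast with a +/-25% variance.
low, high = forecast_band(5_000_000, 25)
print(f"${low:,.0f} to ${high:,.0f}")  # $3,750,000 to $6,250,000
```

Swap in your own forecast and your measured variance to see how wide the band finance is actually planning around.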

Where Forecasts Actually Break Down

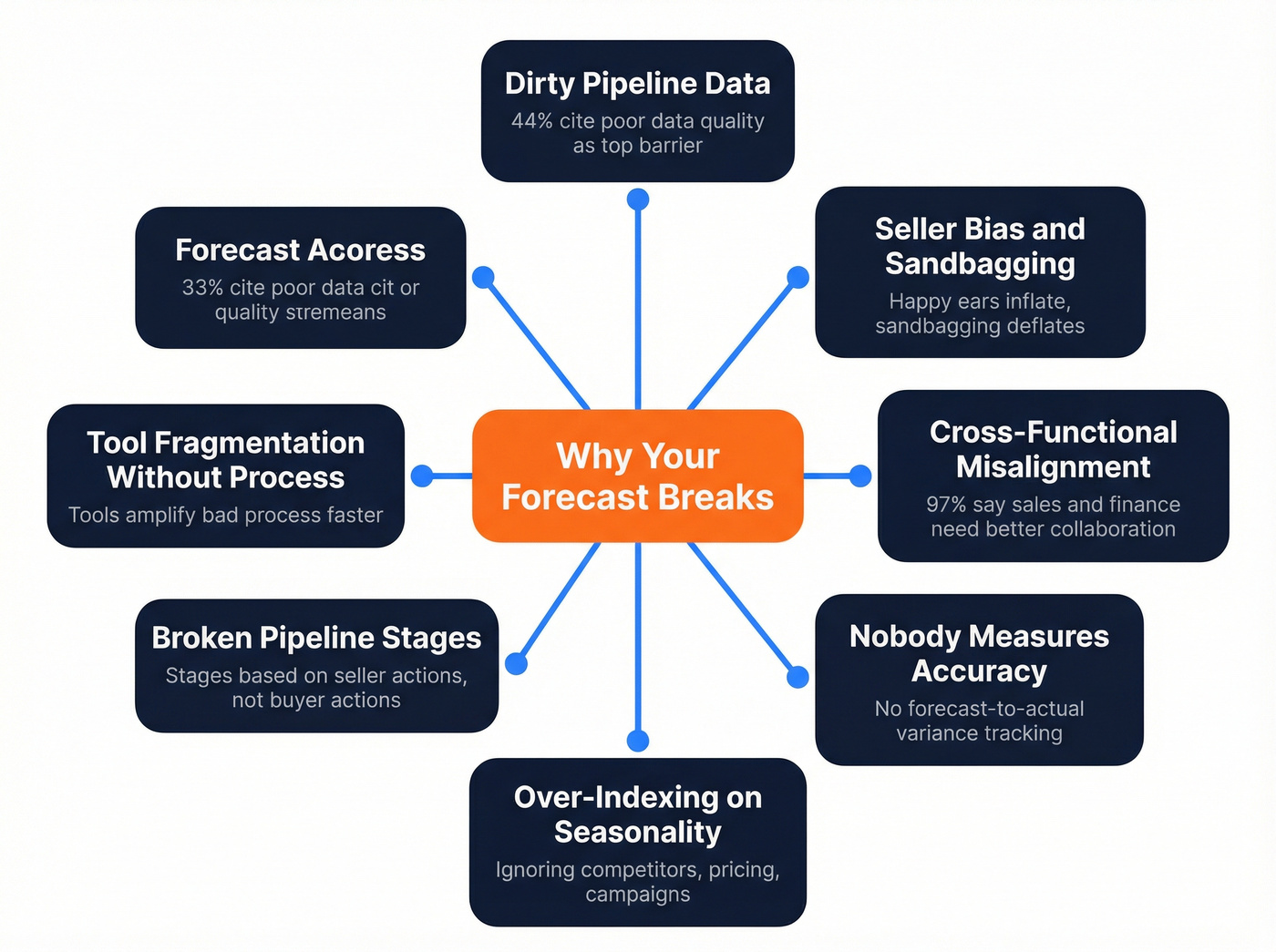

Dirty Pipeline Data

Most data providers refresh B2B contact records on roughly a six-week cycle, so job changes and dead inboxes can sit undetected for weeks. If a meaningful chunk of your pipeline contacts are stale, your forecast inherits that distortion automatically. When 44% of organizations cite poor data quality as a top barrier to analytics success, the problem isn't your forecast model - it's what's feeding it. (This is exactly what pipeline health metrics are meant to surface early.)

Seller Bias and Sandbagging

That confidence paradox - 95% confident, 98% struggling - is packed with happy ears, gut feel, and reps telling managers what they want to hear. Optimism bias inflates deals. Sandbagging deflates them. Both are common forecast-killing mistakes, and sandbagging deserves its own section below because it's the one nobody wants to address. (If you want a structured way to reduce gut-feel calls, start with data-driven selling.)

Cross-Functional Misalignment

Xactly's data is stark: 97% of leaders agree sales and finance need better collaboration. When sales forecasts live in a silo and finance builds models independently, the two numbers diverge - and the board gets a nasty surprise. (This is also where a strong RevOps manager function pays for itself.)

Nobody Measures Accuracy

The consensus on r/SalesOperations is telling: "We talk about forecasts constantly but nobody tracks forecast-to-actual variance with formal error metrics." If you don't measure accuracy, you can't improve it. Full stop.
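Tracking forecast-to-actual variance doesn't need a platform. Below is a rough Python sketch of two common error metrics: mean absolute percentage error (how far off you are on average) and bias (whether you lean optimistic or pessimistic). The quarterly figures are made up for illustration, and the names `Quarter` and `accuracy_report` are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Quarter:
    label: str
    forecast: float  # forecast revenue, in $M
    actual: float    # closed revenue, in $M


def pct_error(q: Quarter) -> float:
    """Signed error as a percentage of actuals; positive means over-forecast."""
    return (q.forecast - q.actual) / q.actual * 100


def accuracy_report(quarters: list[Quarter]) -> dict[str, float]:
    errors = [pct_error(q) for q in quarters]
    return {
        "mape": sum(abs(e) for e in errors) / len(errors),  # average size of the miss
        "bias": sum(errors) / len(errors),                  # average direction of the miss
    }


# Hypothetical history, not real data.
history = [
    Quarter("Q1", forecast=5.0, actual=4.0),
    Quarter("Q2", forecast=4.5, actual=5.0),
    Quarter("Q3", forecast=5.5, actual=5.0),
]

report = accuracy_report(history)
print(f"MAPE: {report['mape']:.1f}%  Bias: {report['bias']:+.1f}%")
```

A high MAPE with near-zero bias suggests noisy inputs. A consistently positive bias points at happy ears, and a consistently negative one points at sandbagging.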

Over-Indexing on Seasonality

Picture this: your model predicts a strong Q4 because Q4 is always strong. Then a competitor launches a free tier in October and your close rates crater. A great thread on r/supplychain nails it - traditional revenue forecasting problems often stem from models that lean heavily on seasonality while ignoring pricing changes, marketing campaigns, and competitor moves. Seasonality is one input among many, not the whole model. (If you need a framework for tracking competitor moves, use a competitive intelligence strategy.)

Broken Pipeline Stages

Ask yourself: at each pipeline stage, can you answer "has the customer done X?" with a definitive yes? If not, the deal shouldn't advance. Blue Ridge Partners recommends defining stages around objective customer actions rather than seller activities, using simple yes/no gate questions at each transition to reduce bias. We've seen teams cut their forecast variance by double digits just by rewriting their stage definitions - no new tools required. (For related pitfalls, see these sales pipeline challenges.)
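To make the yes/no gate idea concrete, here's a small Python sketch. The stage names and gate questions are our own illustrative assumptions (only "requested pricing" comes from the example earlier in this article), so replace them with actions your buyers actually take.

```python
from typing import Optional

# Hypothetical stages, each gated by one customer action with a yes/no answer.
STAGE_GATES = {
    "discovery": "Has the customer confirmed a problem they want solved?",
    "evaluation": "Has the customer requested pricing?",
    "decision": "Has the customer named a decision maker and a target date?",
}
STAGE_ORDER = ["discovery", "evaluation", "decision"]


def furthest_stage(answers: dict[str, bool]) -> Optional[str]:
    """Return the last stage whose gate, and every earlier gate, is a definitive yes."""
    reached = None
    for stage in STAGE_ORDER:
        if answers.get(stage) is not True:  # missing or "maybe" counts as no
            break
        reached = stage
    return reached


# The rep says the deal is in "decision", but the customer never asked for pricing.
print(furthest_stage({"discovery": True, "evaluation": False, "decision": True}))
# discovery
```

The point of the design is that a deal can't skip a gate: an unanswered question holds it at the earlier stage no matter what the rep believes.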

Tool Fragmentation Without Process

Look, buying Clari or Gong won't fix a broken process. Gartner found that CSO-led analytics programs are 2.3x more likely to achieve higher forecast accuracy. The differentiator isn't the tool - it's whether leadership owns the analytics strategy. Tools amplify good process. They also amplify bad process, just faster. (If you're evaluating vendors anyway, start with a shortlist of sales forecasting solutions.)

Dirty pipeline data is the #1 forecast killer - and most providers refresh contacts every 6 weeks. Prospeo refreshes every 7 days, delivers 98% email accuracy, and flags job changes so your CRM reflects reality, not last quarter's org chart.

Stop forecasting on stale data. Fix your pipeline inputs for $0.01 per email.

The Sandbagging Problem

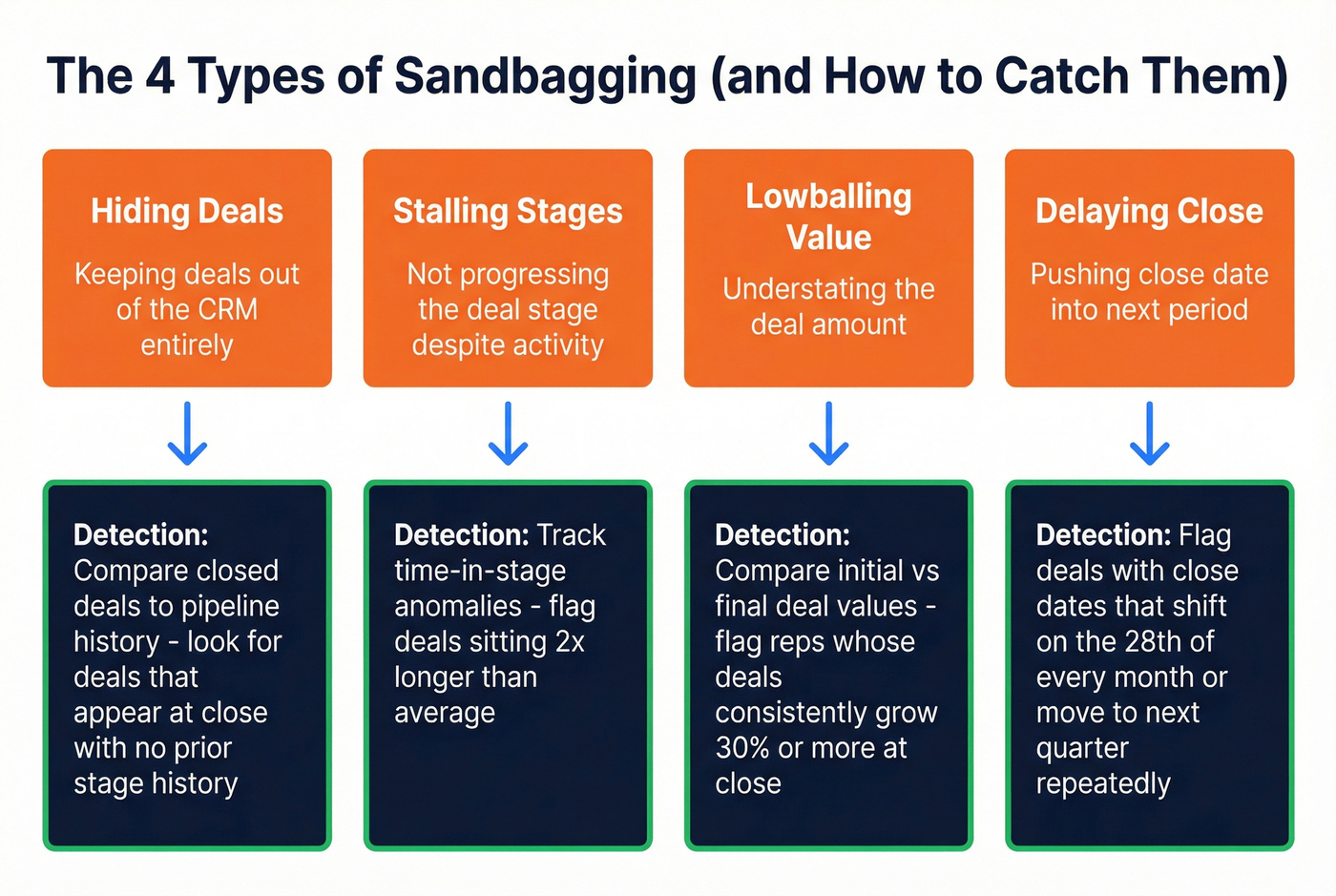

Sandbagging - intentionally understating deal value, visibility, or timing - comes in four forms: keeping deals out of the CRM entirely, not progressing the stage, lowballing the deal value, and delaying close into the next period.

The motivations are rational. Reps sandbag to manage expectations, avoid scrutiny on big deals, manipulate commission thresholds, or protect themselves from ratcheted quotas. When leadership rewards "hero closes" - the rep who pulls a surprise deal at quarter end - they're actively incentivizing the behavior.

The prevention playbook starts with meeting design. Separate pipeline reviews from forecast reviews so reps aren't punished for transparency. Align comp to reward consistent performance rather than end-of-quarter heroics. Use CRM analytics to detect patterns: deals repeatedly pushed to next quarter, stage velocity anomalies, close dates that shift on the 28th of every month. One RevOps manager we spoke with flagged that a single rep had pushed the same three deals into "next quarter" four times running - once they surfaced that pattern in a team review, the behavior stopped overnight.
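One of those patterns, deals repeatedly pushed to next quarter, is simple to detect if your CRM keeps close-date history. A minimal Python sketch, assuming you can export each deal's successive close dates; the field names `rep` and `close_dates`, the sample deals, and the three-push threshold are all hypothetical.

```python
from collections import Counter
from datetime import date


def quarter_of(d: date) -> tuple[int, int]:
    """Map a date to (year, quarter number)."""
    return d.year, (d.month - 1) // 3 + 1


def count_quarter_pushes(close_dates: list[date]) -> int:
    """Count how many times the close date moved into a later quarter."""
    return sum(
        1
        for before, after in zip(close_dates, close_dates[1:])
        if quarter_of(after) > quarter_of(before)
    )


def flag_serial_pushers(deals: list[dict], min_pushes: int = 3) -> dict[str, int]:
    """Return {rep: number of deals pushed across quarters at least min_pushes times}."""
    flagged = Counter()
    for deal in deals:
        if count_quarter_pushes(deal["close_dates"]) >= min_pushes:
            flagged[deal["rep"]] += 1
    return dict(flagged)


# Hypothetical export: each list is the history of close dates set on one deal.
deals = [
    {"rep": "rep_a", "close_dates": [date(2024, 3, 29), date(2024, 6, 28),
                                     date(2024, 9, 27), date(2024, 12, 20),
                                     date(2025, 3, 28)]},
    {"rep": "rep_b", "close_dates": [date(2024, 5, 10), date(2024, 5, 24)]},
]

print(flag_serial_pushers(deals))  # {'rep_a': 1}
```

Treat the output as a prompt for a conversation, not a verdict. Some deals slip for legitimate reasons.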

Here's the thing: if your average deal size is under $25K, you probably don't need an AI forecasting tool. You need a clean CRM, honest pipeline stages, and a manager who asks hard questions. The tooling obsession is a distraction from the process problem.

What Actually Fixes Forecasting

Data first, process second, tools third.

Data

Run your CRM contacts through enrichment to flag stale emails, job changes, and disconnected numbers. Enforce CRM SLAs - every deal needs an updated close date, an identified decision maker, and a documented next step. Kill shadow spreadsheets by making the CRM trustworthy. Prospeo's 7-day data refresh cycle and 98% email accuracy make this step fast: run your pipeline through enrichment and you'll see exactly which deals have real, reachable contacts versus which ones are ghosts inflating your number. (If you're building a broader stack, start with contact management software.)
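The three-part CRM SLA above can be checked automatically. Here's a rough Python sketch; the field names are hypothetical, and we're interpreting "updated close date" as "present and not already in the past," which is an assumption you may want to tighten.

```python
from datetime import date


def sla_violations(deal: dict, today: date) -> list[str]:
    """List which of the three SLA requirements a deal record fails."""
    problems = []
    close_date = deal.get("close_date")
    if close_date is None or close_date < today:
        problems.append("close date missing or in the past")
    if not deal.get("decision_maker"):
        problems.append("no decision maker identified")
    if not deal.get("next_step"):
        problems.append("no documented next step")
    return problems


# Hypothetical deal record.
deal = {"name": "Acme renewal", "close_date": date(2024, 1, 31),
        "decision_maker": "", "next_step": "Send revised quote"}

for problem in sla_violations(deal, today=date(2024, 3, 1)):
    print(f"{deal['name']}: {problem}")
```

Run something like this before each forecast review so the meeting is spent on deals, not on data hygiene.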

Process

Adopt customer-action pipeline stages with yes/no gates. Track time-in-stage and set rules - too long means inspect or kill, too fast means verify. Roll frontline intelligence up to leadership in 24-48 hours, not at the weekly forecast call. Use hybrid forecasting: bottom-up pipeline analysis checked against top-down market reality on rolling 30/60-day horizons. Build in a cadence for adjusting forecasts mid-quarter as new deal intelligence surfaces. Waiting until the final week guarantees surprises. (For a clean way to define and score buying intent, use a guide to identifying buying signals.)
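The time-in-stage rule is the easiest piece of this to automate. A minimal Python sketch, with made-up stage names and day thresholds that you'd want to calibrate against your own historical stage velocity:

```python
# Hypothetical limits per stage: (minimum plausible days, maximum healthy days).
STAGE_LIMITS = {
    "evaluation": (3, 30),
    "decision": (2, 21),
}


def review_action(stage: str, days_in_stage: int, advanced: bool) -> str:
    """Apply the rule: too long means inspect or kill, too fast means verify."""
    limits = STAGE_LIMITS.get(stage)
    if limits is None:
        return "no rule"
    min_days, max_days = limits
    if not advanced and days_in_stage > max_days:
        return "inspect or kill"  # still sitting in the stage past its limit
    if advanced and days_in_stage < min_days:
        return "verify"           # moved on suspiciously quickly
    return "ok"


print(review_action("evaluation", 45, advanced=False))  # inspect or kill
print(review_action("evaluation", 1, advanced=True))    # verify
```

The `advanced` flag matters: a deal that entered a stage yesterday isn't "too fast" until it leaves.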

Models

One RevOps leader on r/salestechniques reduced forecast error from ~15% to 5% after implementing conversation intelligence and changing how they scored calls. That result only happened because the underlying data and process were already solid. In our experience, teams that fix data quality first get 2-3x more value from any forecasting tool they layer on top. Accurate models depend on clean inputs far more than sophisticated algorithms.

Let's be honest - most teams skip straight to the model and wonder why it doesn't work.

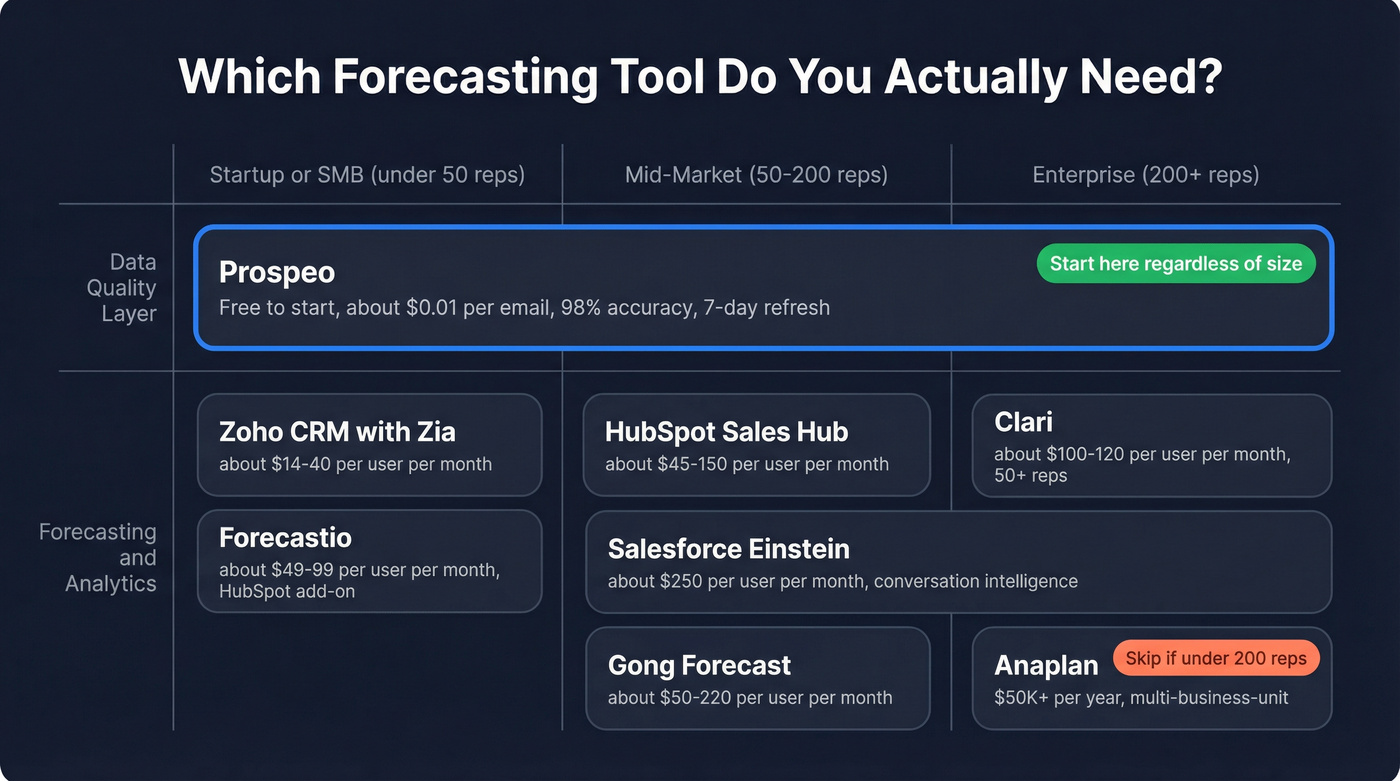

Forecasting Tools Worth Knowing

| Tool | Category | Starting Price | Best For |
|---|---|---|---|
| Prospeo | Data quality layer | Free; ~$0.01/email | Clean pipeline data before it hits your forecast |
| Clari | Pipeline forecasting | ~$100-120/user/mo | Enterprise RevOps teams with 50+ reps |
| Gong Forecast | Conversation intelligence + forecasting | ~$250/user/mo | Teams wanting call data as a forecast input |
| HubSpot Sales Hub | CRM + forecasting | ~$45-150/user/mo | Mid-market teams already on HubSpot |
| Salesforce Einstein | Native CRM AI | ~$50-220/user/mo | Salesforce-native orgs who won't switch |
| Zoho CRM (Zia) | Budget CRM AI | ~$14-40/user/mo | SMBs and startups watching every dollar |
| Forecastio | AI forecasting | ~$49-99/user/mo | HubSpot add-on for dedicated forecasting |
| Anaplan | Enterprise planning | ~$50K+/year | Multi-business-unit planning at scale |

For most mid-market teams: start with a data quality layer like Prospeo, add HubSpot or Salesforce forecasting, and only consider Clari or Anaplan at enterprise scale. Skip Anaplan entirely if you're under 200 reps - it's built for multi-business-unit planning and you'll spend more time configuring it than using it. CRM pipeline tools solve a different problem than enterprise planning platforms, so know which problem you're solving before you buy. (If you want a broader vendor roundup, see our best sales forecasting tools.)

You just read that 44% of orgs cite poor data quality as a top analytics barrier. Prospeo's CRM enrichment returns 50+ data points per contact at a 92% match rate - flagging bounced emails, job changes, and disconnected numbers before they inflate your forecast.

Enrich your pipeline now and forecast on data you can actually trust.

FAQ

What's a good forecast accuracy benchmark?

Most B2B teams hit ±10-25% variance. Only 27% of companies forecast 60-day revenue within ±5%. Track forecast-to-actual variance quarterly and aim to tighten by 3-5 percentage points each cycle.

Why do forecasts collapse at quarter end?

Sandbagging and zombie deals. Reps understate deals to manage expectations, then "pull them in" as heroes. Dead deals sit in the pipeline because nobody validated the contacts. These are the most persistent forecast-accuracy killers teams face, rooted in behavior rather than technology.

Do AI forecasting tools actually work?

Only when underlying data is clean. CSO-led analytics programs are 2.3x more likely to achieve higher accuracy - leadership and process matter more than the algorithm. Layer AI on top of verified pipeline data for the best results.

How do I fix pipeline data quality fast?

Run CRM contacts through an enrichment tool to flag stale emails, job changes, and disconnected numbers. A 7-day refresh cycle catches decay before it corrupts your forecast - and free tiers on most enrichment tools let you audit a sample before committing.