Sales Lead Quality: How to Measure, Score, and Fix It

Only 27% of leads sent to sales are actually qualified. For your SDR team, that means most of what hits the queue won't be worth an AE's time. Sales lead quality is the invisible bottleneck - and the fix isn't more effort. It's better data and a tighter framework.

Below: a steal-this scoring model with exact point values, industry benchmarks, and a framework comparison you can implement this week.

What Lead Quality Actually Means

Lead quality measures how likely a contact converts to revenue. A pipeline of 500 leads converting at 20% beats 5,000 converting at 2% every time - and it costs a fraction of the SDR hours.
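The arithmetic behind that comparison is worth spelling out, because both pipelines land on the same number of customers and differ only in how much work it takes to get there. The sketch below is illustrative only; `funnel_summary` is a hypothetical helper, not part of any tool mentioned here.

```python
def funnel_summary(leads: int, conversion_rate: float) -> dict:
    """Expected customers and how many leads reps must work per customer."""
    if leads <= 0 or conversion_rate <= 0:
        raise ValueError("leads and conversion_rate must be positive")
    customers = leads * conversion_rate
    return {
        "customers": round(customers, 2),
        "leads_worked_per_customer": round(leads / customers, 2),
    }

focused = funnel_summary(500, 0.20)    # small, high-quality pipeline
broad = funnel_summary(5_000, 0.02)    # large, low-quality pipeline
print(focused)
print(broad)
```

Both pipelines yield 100 customers, but the broad one requires working 50 leads per customer instead of 5. That tenfold gap is where the SDR hours go.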

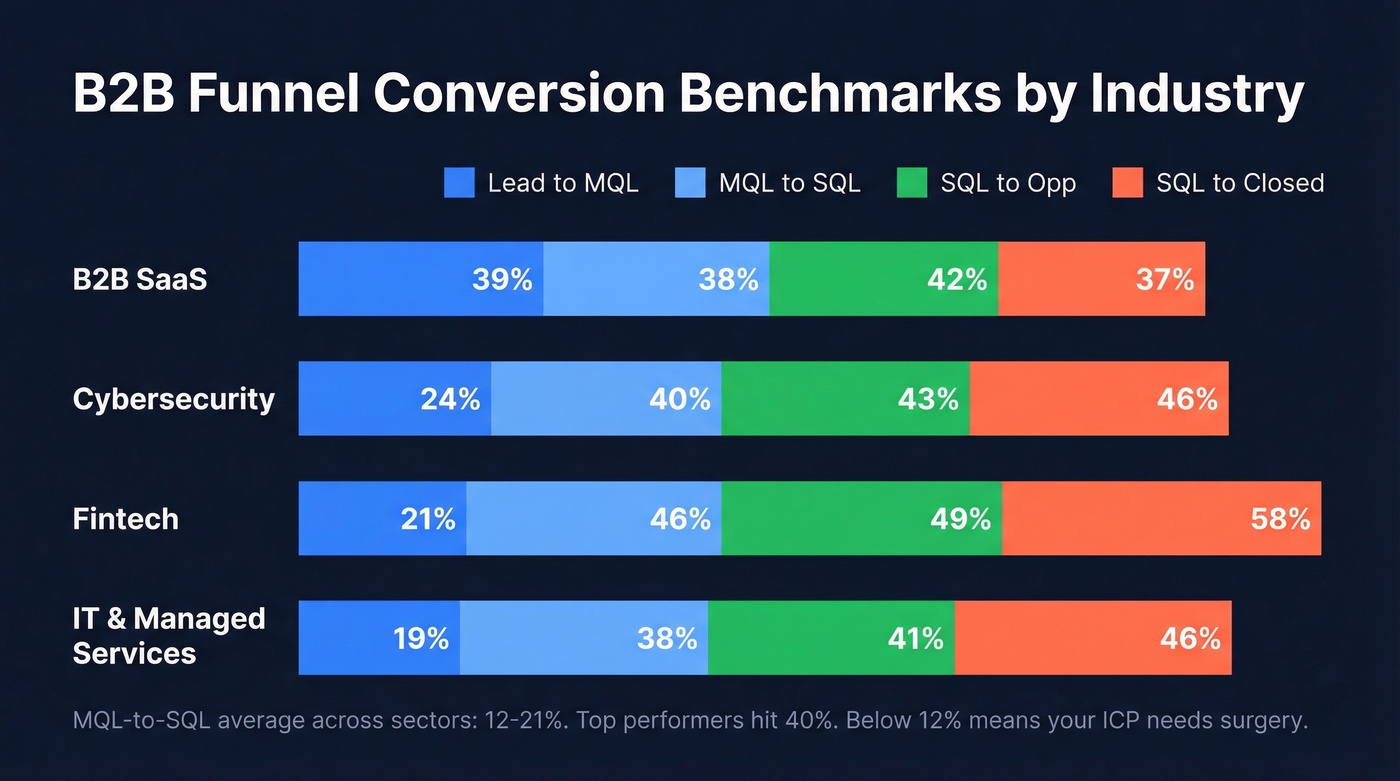

Here are funnel conversion benchmarks worth pinning to your wall:

| Industry | Lead-MQL | MQL-SQL | SQL-Opp | SQL-Closed |
|---|---|---|---|---|
| B2B SaaS | 39% | 38% | 42% | 37% |
| Cybersecurity | 24% | 40% | 43% | 46% |
| Fintech | 21% | 46% | 49% | 58% |
| IT & Managed Svcs | 19% | 38% | 41% | 46% |

MQL-to-SQL averages 12-21% across sectors. Top performers hit 40%. If you're below 12%, your Ideal Customer Profile definition needs surgery - not more leads.
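If you want to check your own numbers against those thresholds, a minimal sketch might look like the following. The function names and the band labels are assumptions made for illustration; only the 12%, 21%, and 40% cut-offs come from the benchmarks above.

```python
def mql_to_sql_rate(mqls: int, sqls: int) -> float:
    """Share of MQLs that sales accepted as SQLs."""
    if mqls <= 0:
        raise ValueError("mqls must be positive")
    return sqls / mqls

def benchmark_band(rate: float) -> str:
    """Place a rate against the 12-21% average and the 40% top-performer mark."""
    if rate < 0.12:
        return "below average: revisit the ICP definition"
    if rate <= 0.21:
        return "within the 12-21% average range"
    if rate < 0.40:
        return "above average"
    return "top-performer territory"

rate = mql_to_sql_rate(mqls=400, sqls=36)
print(f"{rate:.0%} -> {benchmark_band(rate)}")
```

Run it per quarter or per lead source rather than once across everything; a blended rate can hide one channel that is dragging the average down.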

Lead Quality Metrics That Matter

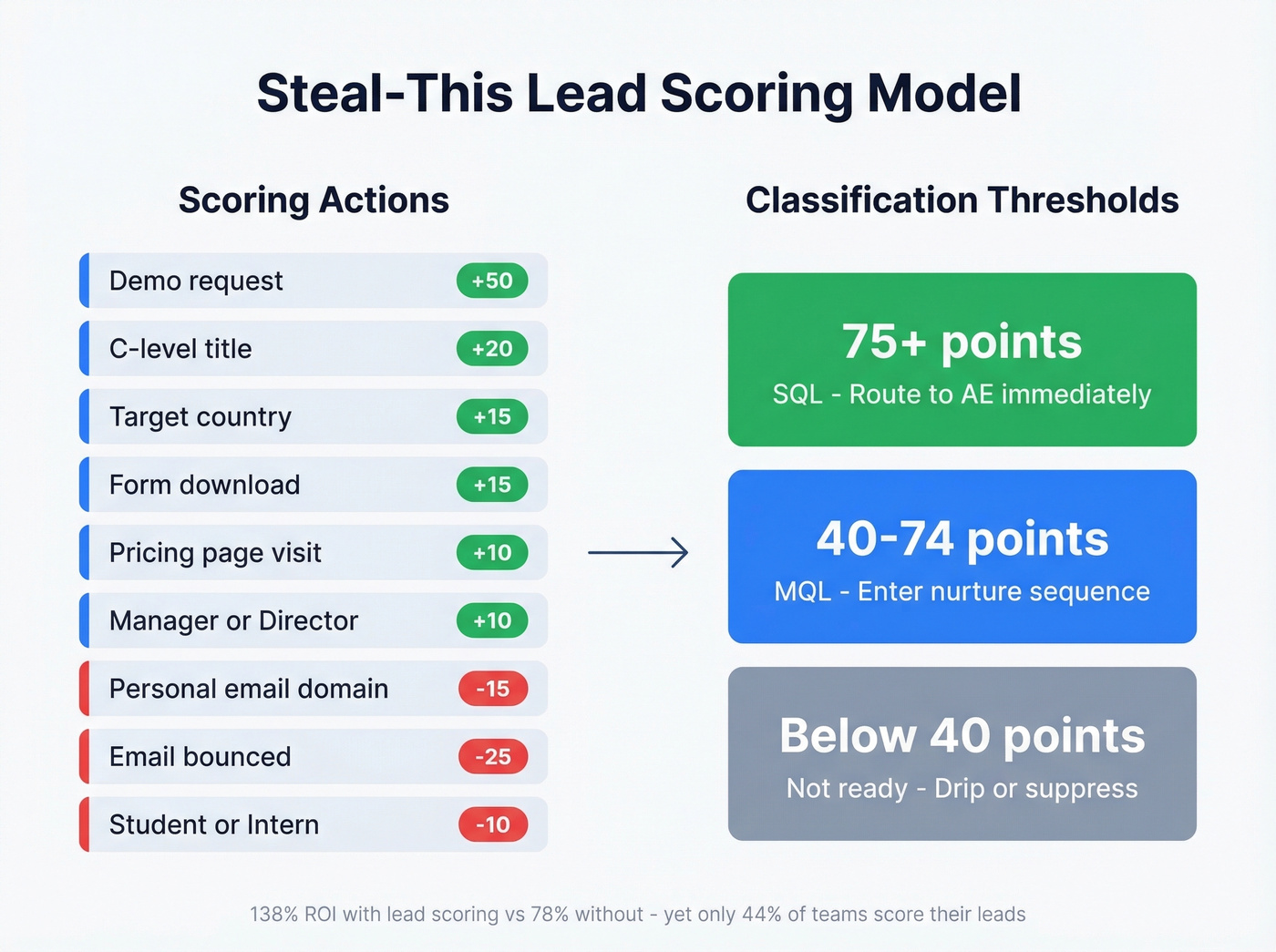

Companies using lead scoring see 138% ROI versus 78% without it. Yet only 44% of organizations actually score their leads. Here's a model you can deploy this week.

| Action / Attribute | Points |
|---|---|
| Demo request | +50 |
| Pricing page visit | +10 |
| Form download | +15 |
| C-level title | +20 |
| Manager / Director | +10 |
| Target country | +15 |
| Student / Intern | -10 |
| Personal email domain | -15 |
| Email bounced | -25 |

| Score Range | Classification | Action |
|---|---|---|
| 75+ | SQL | Route to AE |
| 40-74 | MQL | Nurture sequence |
| Below 40 | Not ready | Drip / suppress |
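The two tables above translate almost directly into code. Here is a minimal sketch, assuming each signal counts once per lead (the tables don't say whether repeat visits stack) and using made-up signal keys such as `demo_request`; map them to whatever field names your CRM or marketing automation tool actually exposes.

```python
from typing import Iterable

# Point values copied from the scoring table; the keys are hypothetical names.
POINTS = {
    "demo_request": 50,
    "pricing_page_visit": 10,
    "form_download": 15,
    "c_level_title": 20,
    "manager_or_director": 10,
    "target_country": 15,
    "student_or_intern": -10,
    "personal_email_domain": -15,
    "email_bounced": -25,
}

def score_lead(signals: Iterable[str]) -> int:
    """Sum the points for each distinct signal observed on a lead."""
    unique = set(signals)  # assumption: a signal scores once, however often it fires
    unknown = unique - set(POINTS)
    if unknown:
        raise ValueError(f"unknown signals: {sorted(unknown)}")
    return sum(POINTS[s] for s in unique)

def classify(score: int) -> tuple[str, str]:
    """Map a score to (classification, action) using the ranges above."""
    if score >= 75:
        return "SQL", "Route to AE"
    if score >= 40:
        return "MQL", "Nurture sequence"
    return "Not ready", "Drip / suppress"

hot = score_lead(["demo_request", "c_level_title", "target_country"])
warm = score_lead(["form_download", "pricing_page_visit",
                   "manager_or_director", "target_country"])
cold = score_lead(["pricing_page_visit", "personal_email_domain"])
for label, score in [("hot", hot), ("warm", warm), ("cold", cold)]:
    print(label, score, classify(score))
```

Keeping the point values in one dictionary means marketing and sales can argue about the weights without anyone touching the scoring logic.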

We've seen most scoring models fail not because of bad logic but because of bad data feeding them. A bounced email is -25 points, sure - but if you don't catch it before the lead enters the model, you're inflating your pipeline with phantom SQLs. Verification matters upstream, before a single score gets calculated. (If you want a deeper playbook, see our lead scoring guide.)

Bad data is the #1 reason scoring models fail. A bounced email is -25 points you never should have assigned. Prospeo's 5-step verification and 7-day refresh cycle mean every lead entering your model is real, reachable, and current - not a phantom SQL inflating your pipeline.


Which Framework Fits Your Sales Motion

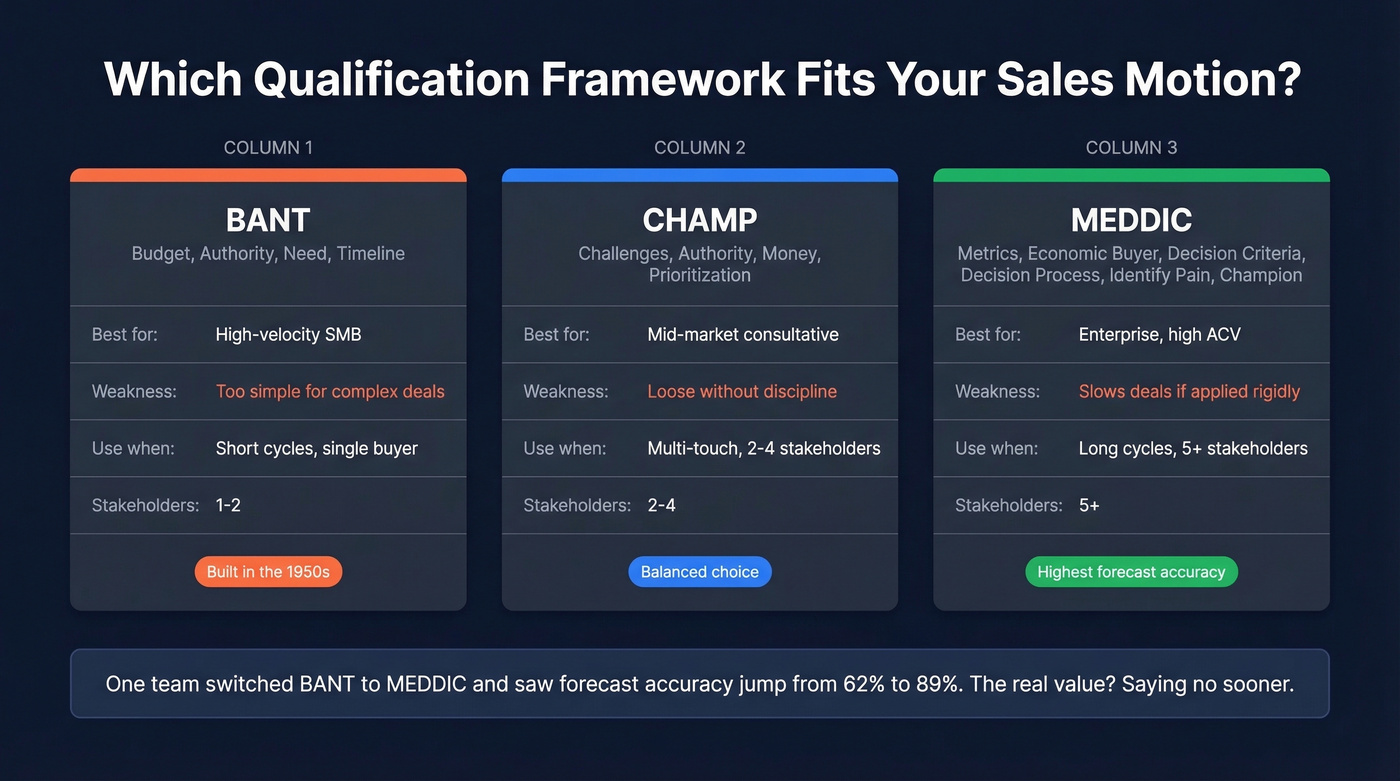

BANT was built at IBM in the 1950s - a world with one decision-maker per deal. Modern B2B buying involves roughly 7 stakeholders. That's a different game entirely.

| Framework | Best For | Weakness | Use When |
|---|---|---|---|
| BANT | High-velocity SMB | Too simple for complex deals | Short cycles, single buyer |
| CHAMP | Mid-market consultative | Loose without discipline | Multi-touch, 2-4 stakeholders |
| MEDDIC | Enterprise, high-ACV | Slows deals if applied rigidly | Long cycles, 5+ stakeholders |
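As a rough first pass, the "Use When" column can be reduced to a rule on stakeholder count. The sketch below is a simplification: `suggest_framework` is a hypothetical helper that deliberately ignores cycle length and deal size, which the table also lists as factors.

```python
def suggest_framework(stakeholders: int) -> str:
    """Pick a starting framework from the number of people involved in the deal."""
    if stakeholders < 1:
        raise ValueError("a deal needs at least one stakeholder")
    if stakeholders == 1:
        return "BANT"    # single buyer, short cycle
    if stakeholders <= 4:
        return "CHAMP"   # 2-4 stakeholders, multi-touch
    return "MEDDIC"      # 5+ stakeholders, long cycle

for count in (1, 3, 7):
    print(count, suggest_framework(count))
```

Treat the result as a default for a segment, not a per-deal verdict; a team switching frameworks from one opportunity to the next loses the discipline that makes any of them work.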

One team we worked with switched from BANT to MEDDIC and saw forecast accuracy jump from 62% to 89%. The framework didn't generate better leads - it forced reps to disqualify faster. That's the real value. Saying "no" sooner frees up hours for the deals that actually close. (If you're going deeper on MEDDIC, use these MEDDIC discovery questions.)

Five Mistakes That Kill Lead Quality

1. Chasing volume over intent. More leads don't equal more revenue. Filter for buying signals before scaling spend.

2. Treating all leads the same. A pricing page visitor and a blog reader aren't the same lead. Segment by behavior, not just source.

3. Running outdated scoring models. Open rates are unreliable with modern email privacy changes - weight on-site behavior like pricing page visits and case study engagement instead. (More on what to track in funnel metrics.)

4. Ignoring post-handoff gaps. Push engagement context into your CRM so reps don't start cold. The consensus on r/sales is that this single fix shortens first-call duration by 30-40%. (This is also a classic sales pipeline challenge.)

5. Slow follow-up. Following up within one hour converts at 53% versus 17% after 24 hours. Meanwhile, 44% of reps give up after one follow-up. That's leaving money on the table. If you need copy you can paste, use these sales follow-up templates.
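Mistake 5 is the easiest of the five to monitor automatically. A minimal sketch of a one-hour follow-up check, assuming you can export each lead's creation time and first rep touch (the `Lead` record and its field names are hypothetical, not a real CRM schema):

```python
from datetime import datetime, timedelta
from typing import NamedTuple, Optional

FOLLOW_UP_SLA = timedelta(hours=1)  # the one-hour window cited above

class Lead(NamedTuple):
    lead_id: str
    created_at: datetime
    first_touch_at: Optional[datetime]  # None if no rep has followed up yet

def overdue(leads: list[Lead], now: datetime) -> list[str]:
    """IDs of untouched leads that have already aged past the SLA."""
    return [
        lead.lead_id
        for lead in leads
        if lead.first_touch_at is None and now - lead.created_at > FOLLOW_UP_SLA
    ]

now = datetime(2024, 1, 8, 12, 0)
queue = [
    Lead("a", datetime(2024, 1, 8, 11, 30), None),  # 30 minutes old, still in window
    Lead("b", datetime(2024, 1, 8, 9, 0), None),    # 3 hours old, untouched
    Lead("c", datetime(2024, 1, 8, 9, 0), datetime(2024, 1, 8, 9, 20)),  # already touched
]
print(overdue(queue, now))
```

In this example only lead `b` is flagged. Piping that list into a Slack alert or a daily manager report is usually enough to change behavior.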

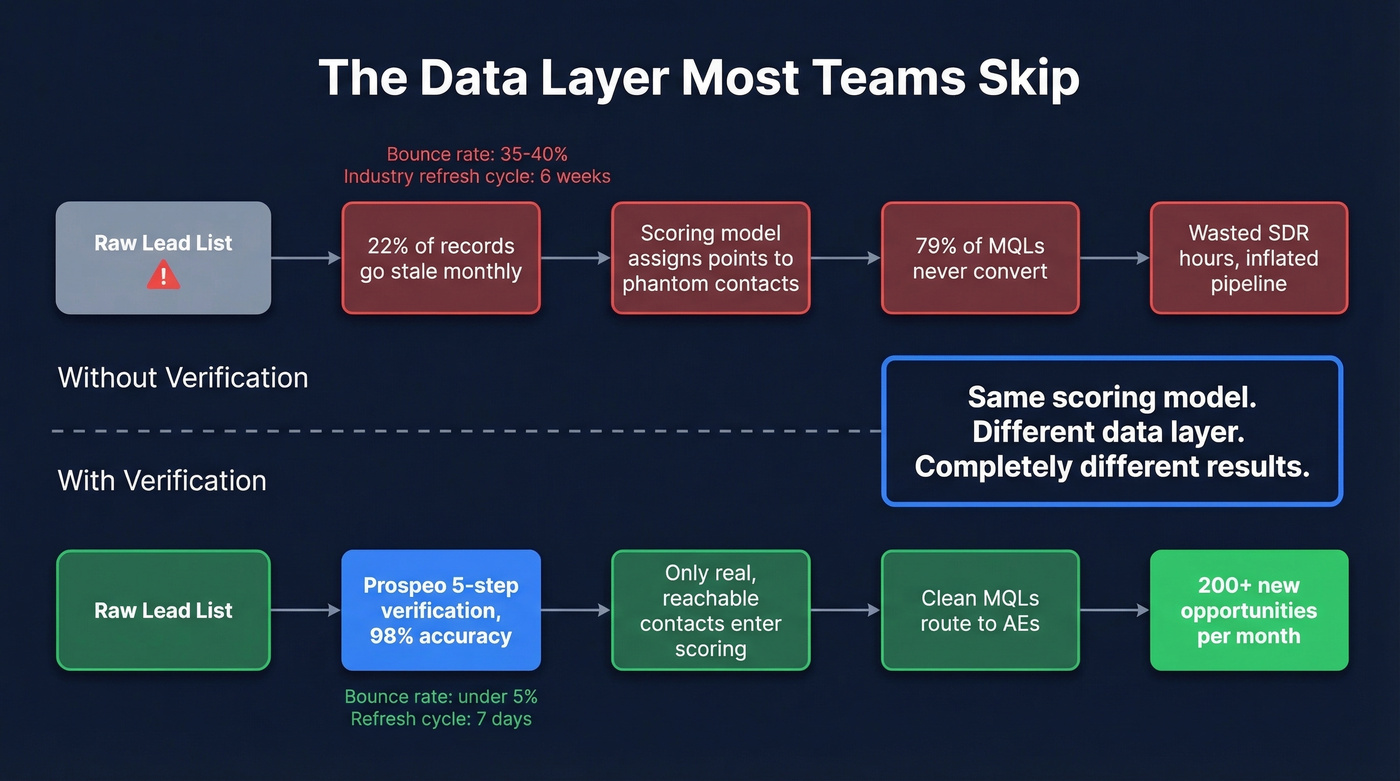

The Data Layer Most Teams Skip

Here's the thing: you don't need a more complex qualification framework. You need cleaner data. Ask any SDR what "lead quality" means, and you'll hear the same answer - it's not about list size, it's about whether the contact still works there and picks up the phone.

B2B contact data decays fast. In biotech, 22% of records go stale each month. And 79% of MQLs never convert, often because teams keep nurturing contacts who've changed roles entirely. When pipeline quality depends on data freshness this much, verification isn't optional - it's infrastructure. (If you're fixing this systematically, start with data enrichment services.)
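To see why a monthly decay figure like that matters, compound it. The sketch below assumes a constant rate applied to whatever is still valid each month, which is a simplification; real decay is lumpier, and `fraction_still_valid` is an illustrative helper.

```python
def fraction_still_valid(monthly_decay: float, months: int) -> float:
    """Share of records still accurate after compounding monthly decay."""
    if not 0 <= monthly_decay <= 1:
        raise ValueError("monthly_decay must be between 0 and 1")
    if months < 0:
        raise ValueError("months must be non-negative")
    return (1 - monthly_decay) ** months

for months in (1, 3, 6):
    share = fraction_still_valid(0.22, months)
    print(f"after {months} month(s): {share:.0%} of records still valid")
```

At the 22% biotech rate, fewer than half of the records in a list are still good after three months under this assumption, which is why a list bought last quarter can quietly drag down this quarter's conversion numbers.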

Prospeo handles this with 98% email accuracy on a 7-day refresh cycle, compared to the 6-week industry average. When Snyk's 50-person AE team switched, their bounce rate dropped from 35-40% to under 5%, and the team generated 200+ new opportunities per month. If your scoring model is solid but your conversion rates still lag, skip the framework debates and audit your data layer first. (To quantify the impact, track your email bounce rate.)

Snyk's 50 AEs cut bounce rates from 35-40% to under 5% and generated 200+ new opportunities per month - not by changing their scoring framework, but by fixing the data layer underneath it. At ~$0.01/email with 98% accuracy, Prospeo makes lead quality a data problem you can actually solve.


FAQ

What's the difference between lead quality and lead quantity?

Lead quality measures conversion likelihood; quantity measures volume. High-quality pipelines convert at 2-3x the rate of volume-first pipelines, with lower acquisition costs. Teams that prioritize quality over volume typically see shorter sales cycles and higher win rates because reps spend time on contacts who match the ICP instead of chasing dead ends.

What's a good MQL-to-SQL conversion rate?

Industry averages range from 12% to 21%, while top-performing teams hit 40%. A rate below 12% signals that your ICP definition or scoring model needs rework. Tightening firmographic filters and weighting intent signals like pricing page visits can move this number fast.

How does data accuracy affect lead quality?

Stale emails inflate your pipeline with leads that never convert, dragging down every downstream metric. Verifying contacts on a weekly refresh cycle catches bad records before they waste rep time. Tools like Prospeo, NeverBounce, and ZeroBounce keep your scoring model fed with real, reachable contacts instead of phantom SQLs.