Explicit vs Implicit Lead Scoring in 2026 (+ Template)

A Salesforce study of 3,200 B2B professionals found that 67% cite poor lead prioritization as their biggest productivity drain. Not bad messaging. Not weak product-market fit. Reps chasing the wrong leads.

The root cause is almost always the same: a single-number score that mashes together who a lead is with what they've done, making both signals useless. An intern downloads 14 whitepapers and gets routed to your top AE, while the VP of Engineering at a perfect-fit account who visited your pricing page once sits in a nurture drip for six weeks. Understanding explicit vs implicit lead scoring - and keeping them separate - is the fix.

The Short Version

Explicit scoring = who they are (fit). Implicit scoring = what they do (intent). You need both, kept as separate scores rather than blended into one meaningless number.

Start with 5-7 criteria that predict roughly 80% of your conversions. Set your MQL threshold at 50-75 points. Add negative scoring and monthly decay from day one.

Here's the thing most guides won't tell you: your model is only as good as your data. Stale contacts, bounced emails, and outdated job titles corrupt both scores before they ever reach a rep.

What Is Explicit Lead Scoring?

Explicit scoring evaluates a lead based on who they are - firmographic and demographic attributes that indicate fit with your ideal customer profile. Job title, company size, industry, budget authority. These are the fields you'd check on a napkin before deciding whether someone's worth a sales conversation.

The common failure point is incomplete form submissions. If a lead skips the "company size" field, your explicit score is built on half the picture. Enrichment tools can auto-fill missing firmographic fields; we'll cover data quality later.
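Before scoring, it can help to flag which fit fields are empty so those records go to enrichment first. A minimal sketch, assuming leads are plain dictionaries; the names in `REQUIRED_FIT_FIELDS` are hypothetical placeholders for whatever fields your CRM actually uses:

```python
# Hypothetical list of the fit fields an explicit score depends on.
REQUIRED_FIT_FIELDS = ("job_title", "company_size", "industry", "revenue")


def missing_fit_fields(lead: dict) -> list[str]:
    """Return the fit fields that are absent or blank on a lead record."""
    missing = []
    for field in REQUIRED_FIT_FIELDS:
        value = lead.get(field)
        # Treat None, empty strings, and whitespace-only strings as missing.
        if value is None or (isinstance(value, str) and not value.strip()):
            missing.append(field)
    return missing


lead = {"job_title": "VP Engineering", "company_size": "", "industry": "SaaS"}
print(missing_fit_fields(lead))  # ['company_size', 'revenue']
```

Leads with a non-empty result are candidates for enrichment before their explicit score is trusted.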

What Is Implicit Lead Scoring?

Implicit scoring measures what a lead does - actions you observe rather than information they volunteer. Pages visited, emails opened, content downloaded, demos requested.

Not all behavioral signals carry equal weight. A pricing page visit signals far more intent than a blog post read, and a demo request is the strongest hand-raise short of "send me a contract." The trap is treating all actions equally. We've watched teams give the same 10 points to a case study download and a pricing page visit, then wonder why their MQLs don't convert.

The other failure mode is subtler. Implicit scoring breaks when emails bounce. If 30% of your list has invalid addresses, you can't track engagement on those contacts at all. Your implicit scores end up reflecting the subset who happened to have valid emails - not your actual best leads.
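A quick way to see how much of your list implicit scoring can even observe is to measure the share of contacts with deliverable addresses. A sketch, assuming a hypothetical boolean `email_valid` flag populated by whatever verification step you run:

```python
def trackable_share(leads: list[dict]) -> float:
    """Fraction of leads whose engagement can be tracked at all.

    Assumes each lead carries a hypothetical boolean 'email_valid' flag;
    bounced or invalid addresses are False, and a missing flag counts as False.
    """
    if not leads:
        return 0.0
    valid = sum(1 for lead in leads if lead.get("email_valid", False))
    return valid / len(leads)


# 10 leads, 3 with invalid addresses: only 70% can produce implicit signals.
leads = [{"email_valid": True}] * 7 + [{"email_valid": False}] * 3
print(trackable_share(leads))  # 0.7
```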

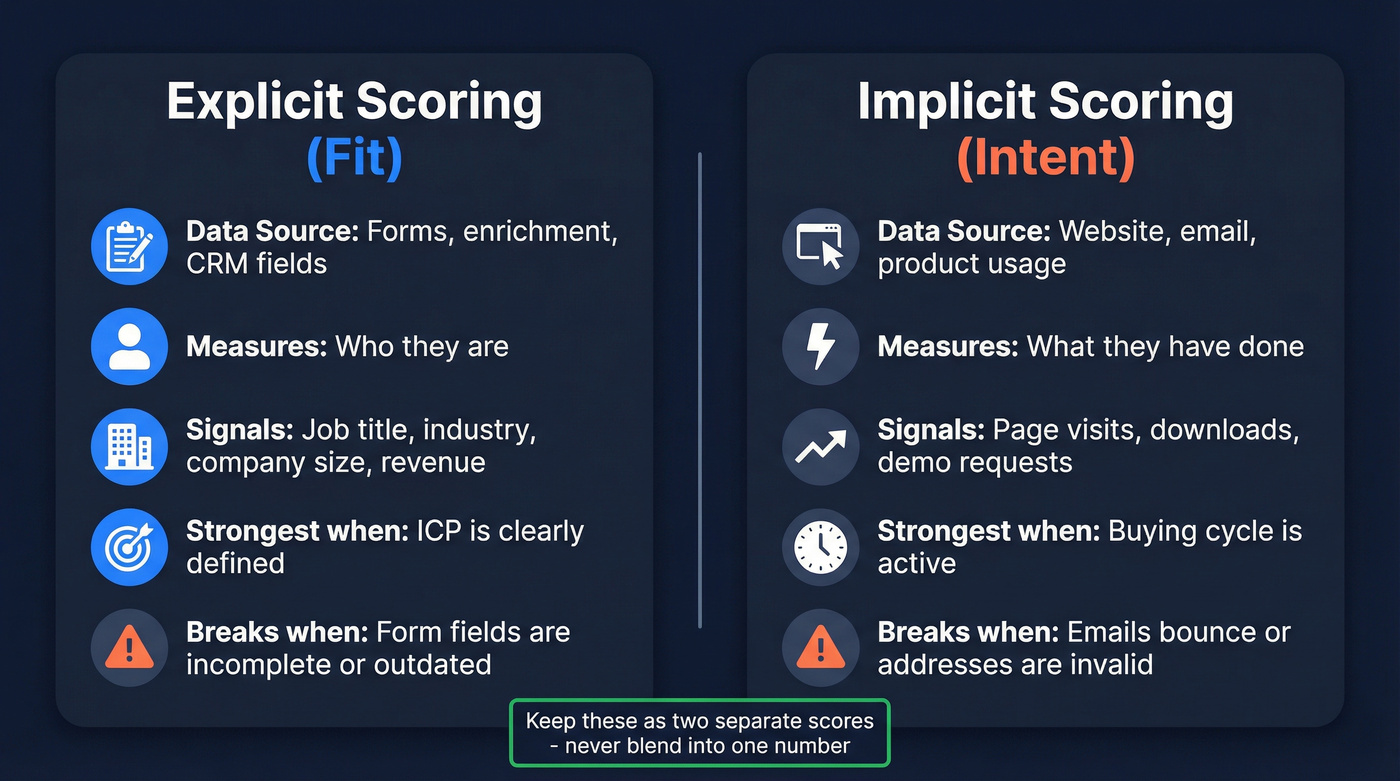

Fit vs. Intent: Side-by-Side

| Dimension | Explicit (Fit) | Implicit (Intent) |
|---|---|---|
| Data source | Forms, enrichment, CRM | Website, email, product |
| What it measures | Who they are | What they've done |
| Example signals | Title, industry, size | Page visits, downloads |
| Strongest when | ICP is well-defined | Buying cycle is active |
| Common failure | Incomplete form data | Bounced/invalid emails |

The Complete Scoring Template

This is the section most guides skip. They explain the concept and leave you to build the model yourself.

Start with 5-7 criteria that predict roughly 80% of your conversions. Don't build a 30-variable model on day one - you'll spend more time tuning weights than actually selling. Here's a complete template covering positive explicit, positive implicit, and negative scoring:

| Category | Signal | Points |

|---|---|---|

| Explicit (+) | C-level decision maker | +30 |

| Explicit (+) | Target industry match | +25 |

| Explicit (+) | Multiple stakeholders engaged | +25 |

| Explicit (+) | Revenue within ICP range | +20 |

| Explicit (+) | Target geography | +15 |

| Implicit (+) | Demo request | +40 |

| Implicit (+) | Pricing page visit | +20 |

| Implicit (+) | Case study or ROI calculator use | +15 |

| Implicit (+) | High-intent asset download | +10 |

| Implicit (+) | Email open + click (same campaign) | +5 |

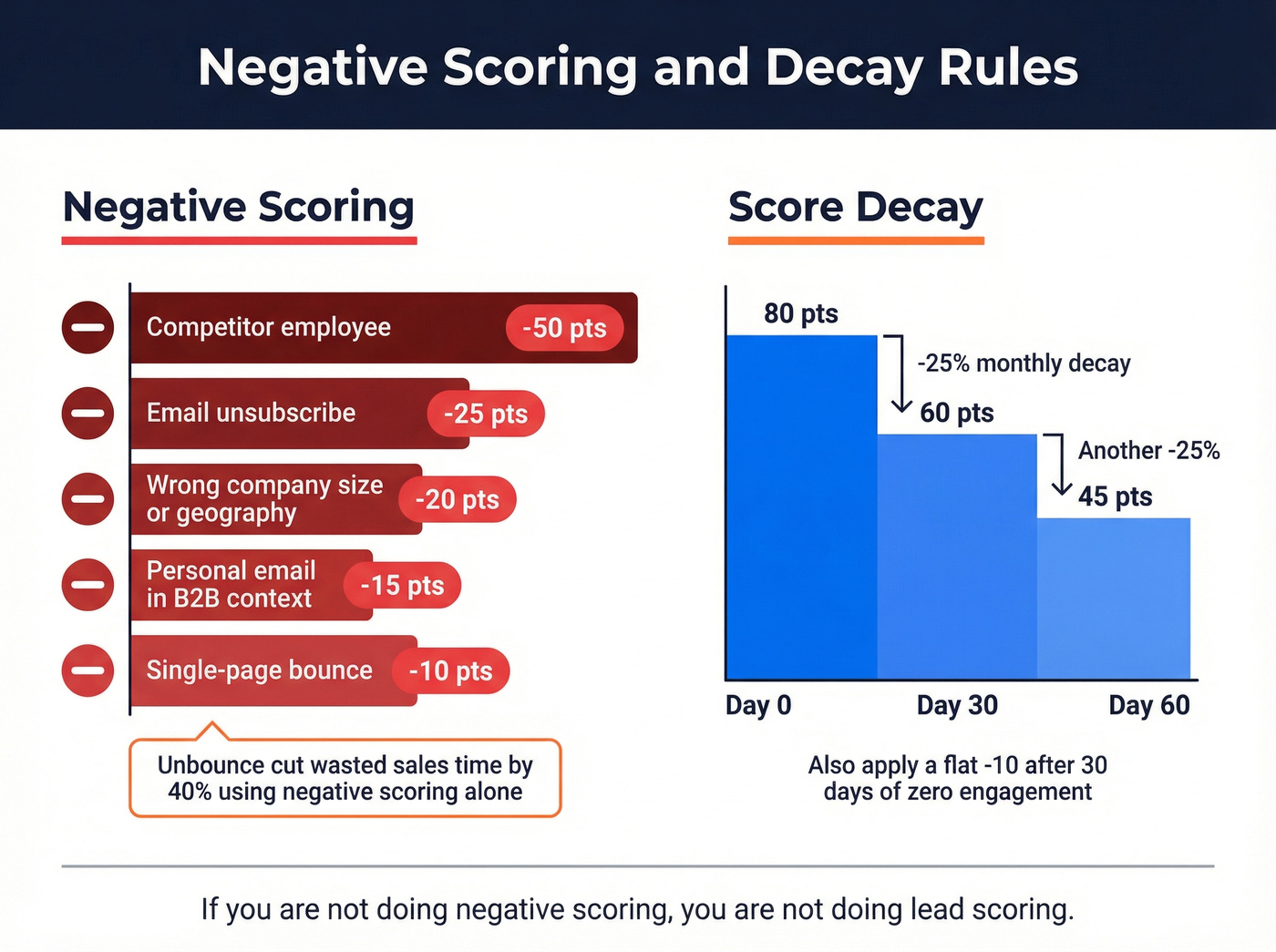

| Negative | Competitor employee | -50 |

| Negative | Email unsubscribe | -25 |

| Negative | Wrong company size | -20 |

| Negative | Personal email in B2B context | -15 |

| Negative | Single-page bounce | -10 |

Those negative scoring rows aren't optional. A competitor employee who downloads your entire resource library shouldn't end up in your pipeline. A lead who unsubscribes just told you something important - your model should listen.
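The template translates fairly directly into a rules-based scorer. A minimal sketch, assuming each lead arrives as a set of signal names; the keys are hypothetical labels for the table rows, each signal counts once, and which score each negative row reduces is our own assumption. Fit and intent are summed separately rather than blended:

```python
from dataclasses import dataclass

# Point values from the scoring template; the key names are hypothetical.
EXPLICIT_POINTS = {
    "c_level": 30,
    "target_industry": 25,
    "multiple_stakeholders": 25,
    "revenue_in_icp": 20,
    "target_geography": 15,
}
IMPLICIT_POINTS = {
    "demo_request": 40,
    "pricing_page_visit": 20,
    "case_study_or_roi_calc": 15,
    "high_intent_download": 10,
    "email_open_and_click": 5,
}
# Negative rows, split by which score they penalize (an assumption).
NEGATIVE_FIT = {"competitor_employee": -50, "wrong_company_size": -20, "personal_email": -15}
NEGATIVE_INTENT = {"unsubscribe": -25, "single_page_bounce": -10}


@dataclass
class LeadScore:
    fit: int     # explicit: who they are
    intent: int  # implicit: what they've done


def score_lead(signals: set[str]) -> LeadScore:
    """Sum matching signals into two separate scores instead of one blend."""
    fit_rules = {**EXPLICIT_POINTS, **NEGATIVE_FIT}
    intent_rules = {**IMPLICIT_POINTS, **NEGATIVE_INTENT}
    fit = sum(pts for key, pts in fit_rules.items() if key in signals)
    intent = sum(pts for key, pts in intent_rules.items() if key in signals)
    return LeadScore(fit=fit, intent=intent)


print(score_lead({"c_level", "target_industry", "pricing_page_visit", "demo_request"}))
# LeadScore(fit=55, intent=60)
```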

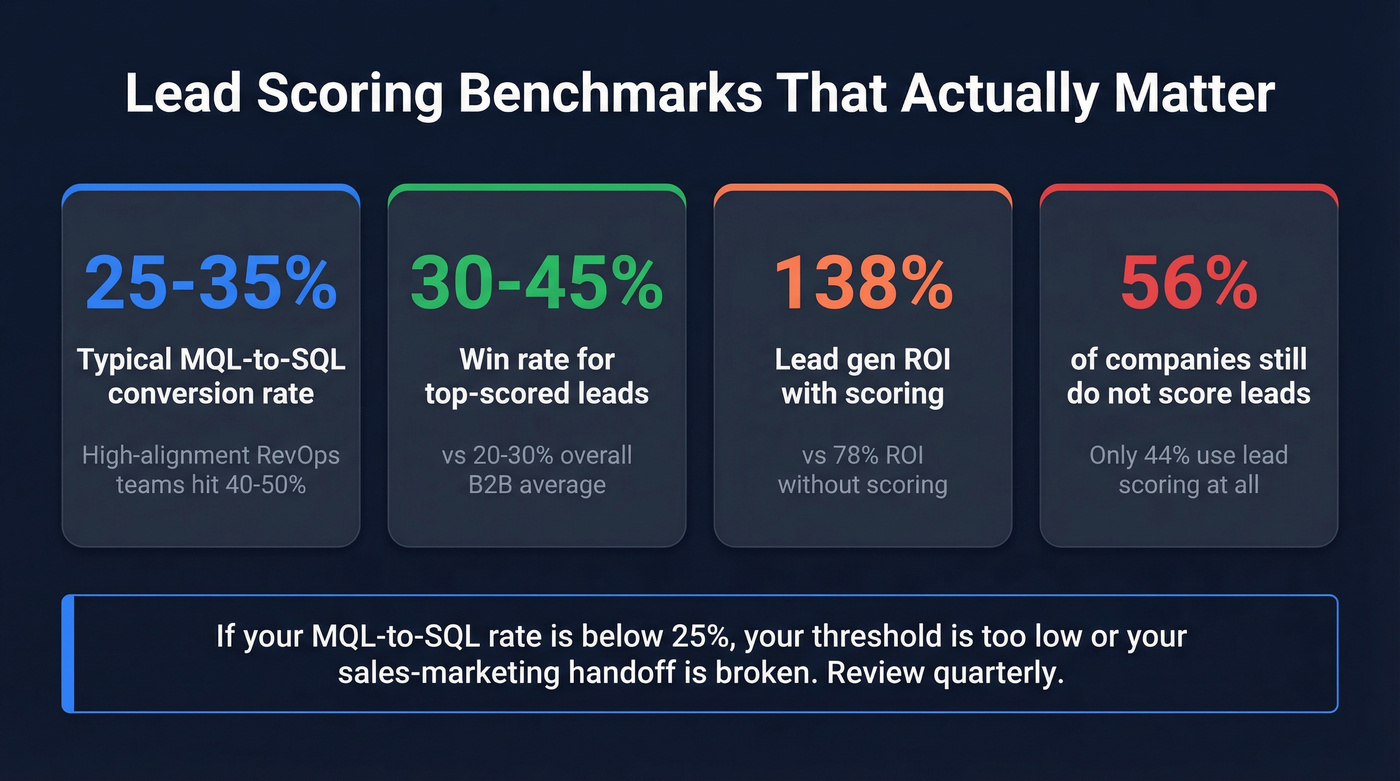

Set your MQL threshold at 50-75 points on a 100-point scale, targeting the top 20% of leads. That range typically yields 15-25% conversion rates from qualified leads to closed deals. If your MQL-to-SQL rate is below 25%, your threshold is likely too low, or your sales-marketing handoff is broken. Review quarterly with closed-loop sales feedback.
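To find where "top 20%" actually falls in your own data, you can take the score at that cutoff rank among recent leads and check whether it lands inside the 50-75 band. A minimal sketch in plain Python, applied to whichever score gates MQL status; the scores below are made up:

```python
import math


def top_share_threshold(scores: list[int], share: float = 0.20) -> int:
    """Lowest score that still falls inside the top `share` of leads.

    Sorts scores descending and returns the score at the cutoff rank,
    so roughly `share` of leads score at or above it (ties can include more).
    """
    if not scores:
        raise ValueError("need at least one score")
    ranked = sorted(scores, reverse=True)
    cutoff_index = max(math.ceil(len(ranked) * share) - 1, 0)
    return ranked[cutoff_index]


scores = [10, 22, 30, 41, 45, 50, 58, 64, 72, 85]
print(top_share_threshold(scores))  # 72
```

A result well outside 50-75 suggests the point values, not just the threshold, need another look.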

Half-empty form fields destroy your explicit scores. Prospeo enriches contacts with 50+ data points - job title, company size, industry, revenue - at a 92% match rate. No more scoring leads on incomplete data.

Stop building lead scores on guesswork. Enrich your contacts first.

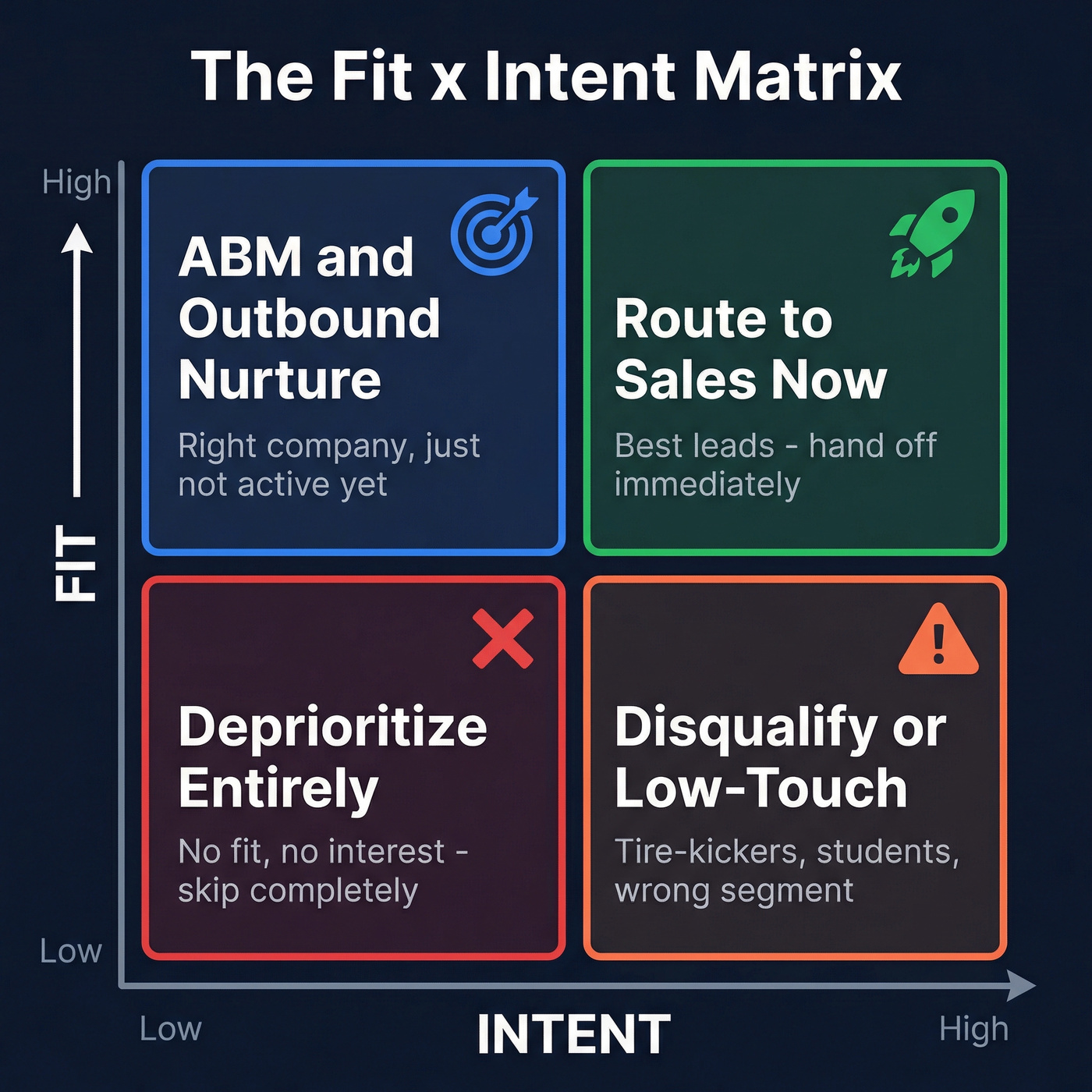

The Fit x Intent Matrix

Combining fit and intent into a single score is a mistake. A lead with a score of 72 tells you nothing - is that a perfect-fit VP who hasn't engaged yet, or a student who's read every blog post you've ever published? This is exactly why fit and intent should live as separate dimensions.

| | High Intent | Low Intent |
|---|---|---|
| High Fit | Route to sales immediately | ABM / outbound nurture |
| Low Fit | Disqualify or low-touch | Deprioritize entirely |

Fit is the gatekeeper for sales attention. A low-fit lead with high intent might feel exciting, but it's usually a tire-kicker, a student, or someone in the wrong segment entirely. Don't let behavioral noise distract your AEs from accounts that actually match your ICP.

High fit + low intent is where ABM earns its keep. These are the right companies - they just haven't raised their hand yet. Outbound sequences, targeted ads, and multi-threaded outreach belong here. Skip the low-fit/low-intent quadrant altogether; no amount of nurturing turns a bad-fit lead into a good customer.
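Routing from the matrix only needs the two scores kept separate. A sketch under stated assumptions: the 50-point cutoffs are placeholders, not recommendations, and should be tuned to your own score distributions:

```python
def route(fit: int, intent: int, fit_cut: int = 50, intent_cut: int = 50) -> str:
    """Map separate fit and intent scores to a fit x intent quadrant action.

    The default 50-point cutoffs are placeholder assumptions.
    """
    high_fit = fit >= fit_cut
    high_intent = intent >= intent_cut
    if high_fit and high_intent:
        return "route to sales immediately"
    if high_fit:
        return "ABM / outbound nurture"
    if high_intent:
        return "disqualify or low-touch"
    return "deprioritize"


print(route(fit=80, intent=15))  # ABM / outbound nurture
```

Because fit is checked first, a low-fit lead never reaches sales no matter how high its intent score climbs, which mirrors the "fit is the gatekeeper" rule.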

Negative Scoring and Decay

If you're not doing negative scoring, you're not doing lead scoring. Full stop.

Penalize competitor employees by -50 immediately - they're researching you, not buying. Unsubscribes get -25 as explicit disengagement. Wrong company size or geography earns -20 regardless of behavior, and generic or personal emails in a B2B context get -15. Unbounce cut sales time spent on unqualified leads by 40% by negative-scoring generic email domains and certain geographies. That's two-fifths of wasted rep time eliminated just by subtracting points.
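Domain-based negative scoring is a small lookup. A minimal sketch, assuming a hypothetical and deliberately tiny list of personal email domains (production lists are far longer) and the -15 penalty from the template:

```python
# Hypothetical, deliberately short list; real lists are much longer.
PERSONAL_DOMAINS = {"gmail.com", "yahoo.com", "hotmail.com", "outlook.com"}


def email_domain_penalty(email: str, penalty: int = -15) -> int:
    """Return the personal-email penalty for a B2B lead, or 0 for other domains."""
    # Take everything after the last '@' and normalize case and whitespace.
    domain = email.rsplit("@", 1)[-1].strip().lower()
    return penalty if domain in PERSONAL_DOMAINS else 0


print(email_domain_penalty("jane@Gmail.com"))     # -15
print(email_domain_penalty("jane@acme-corp.io"))  # 0
```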

For decay, reduce scores by 25% for each month without new activity, and apply a flat -10 once a lead passes 30 days of zero engagement. A lead who was hot in Q1 but hasn't touched anything since shouldn't still be clogging your pipeline in Q3.
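The two decay rules can be combined in one function. A sketch that reads them as cumulative, assuming a "month" is 30 days, the 25% cut compounds per full month, the flat -10 applies once, and decay only erodes positive scores (so a negatively scored competitor is not reset to zero):

```python
def decayed_score(score: float, days_inactive: int) -> float:
    """Apply the decay rules to a score after `days_inactive` days without activity.

    Assumptions: 30-day months, a compounding 25% cut per full month,
    and a one-time flat -10 once 30 inactive days have passed.
    """
    if score <= 0:
        return score  # leave zero/negative scores alone; decay erodes earned points
    months = days_inactive // 30
    decayed = score * (0.75 ** months)
    if days_inactive >= 30:
        decayed -= 10
    return max(decayed, 0.0)  # floor positive scores at zero


print(decayed_score(80, 60))  # 35.0
```

If your platform applies only one of the two rules, drop the other branch; the point is that inactivity steadily pulls a lead back below the MQL line.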

Benchmarks That Actually Matter

MQL-to-SQL conversion rates for typical teams run 25-35%. High-alignment RevOps organizations hit 40-50%. If you're below 25%, your scoring model is either too loose or your sales-marketing handoff is broken. In our experience, teams below that threshold are almost always missing negative scoring entirely.
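Checking where you sit against these benchmarks is a single division. A sketch using the bands above; rates between 35% and 40% fall between the published ranges, and this sketch lumps them in with "typical", which is an arbitrary choice:

```python
def mql_to_sql_band(mqls: int, sqls: int) -> tuple[float, str]:
    """Compute the MQL-to-SQL rate and compare it with the benchmark bands."""
    if mqls <= 0:
        raise ValueError("mqls must be positive")
    rate = sqls / mqls
    if rate < 0.25:
        band = "below typical: check thresholds and negative scoring"
    elif rate < 0.40:
        band = "typical"  # 35-40% is unbanded; treated as typical here
    else:
        band = "high-alignment"
    return rate, band


print(mql_to_sql_band(400, 88))
# (0.22, 'below typical: check thresholds and negative scoring')
```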

Win rates tell a similar story. Overall healthy B2B win rates sit at 20-30%, but leads in the top score tier convert at 30-45%. That gap is the entire argument for scoring. Companies with lead scoring achieve 138% lead gen ROI versus 78% without - and yet only 44% of organizations use lead scoring at all.
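To see whether that gap holds in your own pipeline, compute win rates per score tier from closed deals. A minimal sketch, assuming you can export closed deals as hypothetical `(tier, won)` pairs; the sample data is invented:

```python
from collections import defaultdict


def win_rate_by_tier(deals: list[tuple[str, bool]]) -> dict[str, float]:
    """Win rate per score tier from (tier, won) pairs of closed deals."""
    totals: dict[str, int] = defaultdict(int)
    wins: dict[str, int] = defaultdict(int)
    for tier, won in deals:
        totals[tier] += 1
        wins[tier] += int(won)
    return {tier: wins[tier] / totals[tier] for tier in totals}


deals = [("top", True), ("top", True), ("top", False), ("top", False),
         ("other", True), ("other", False), ("other", False), ("other", False)]
print(win_rate_by_tier(deals))  # {'top': 0.5, 'other': 0.25}
```

If the top tier doesn't clearly beat the rest, the model is ranking leads no better than chance.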

Let's be honest: if your average deal size is under $15K, you probably don't need a 30-variable scoring model or a $60K predictive platform. A simple rules-based model with negative scoring and monthly decay will outperform anything fancier until you have the data volume to justify it.

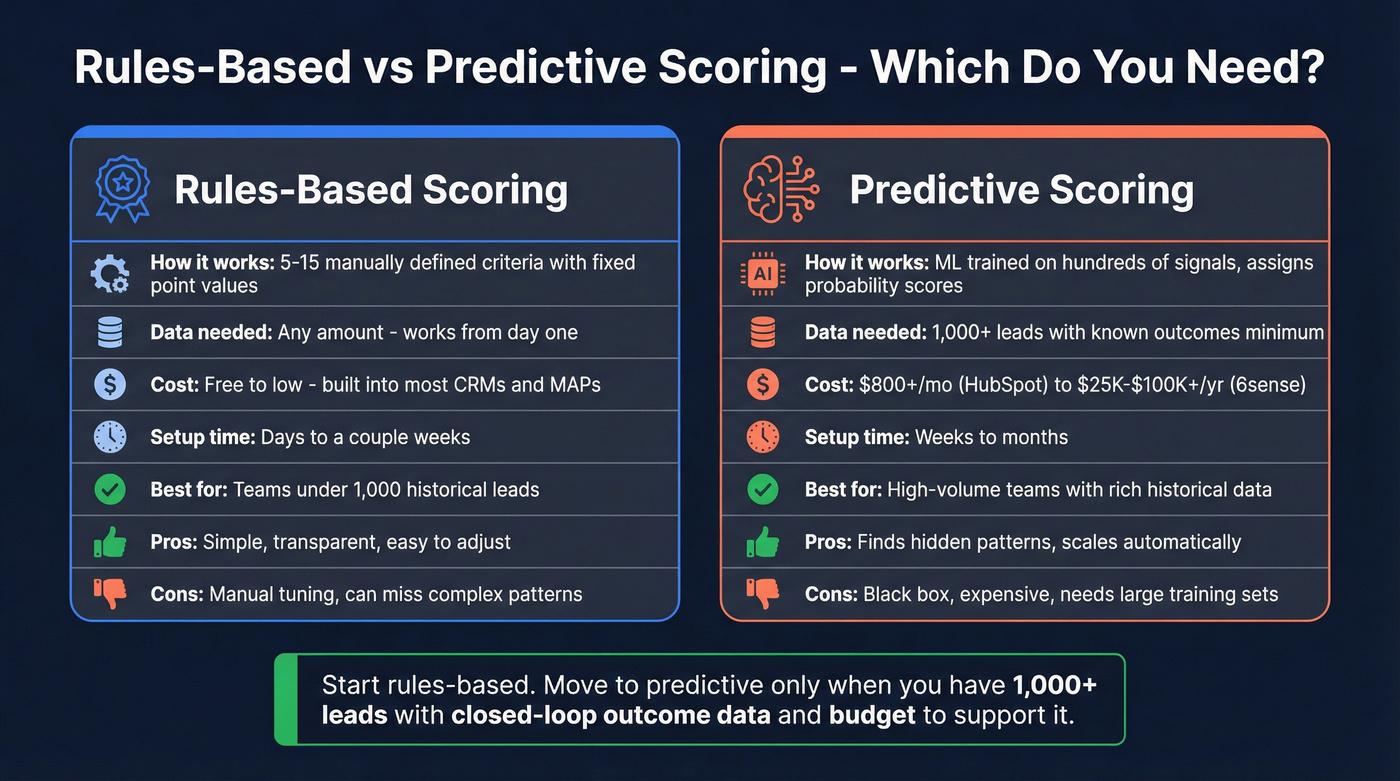

Rules-Based vs. Predictive Scoring

Rules-based scoring uses 5-15 manually defined criteria with fixed point values - exactly what we've been building here. Predictive scoring uses machine learning trained on hundreds of signals to assign probability scores automatically.

Most teams don't need predictive scoring yet. It requires at least 1,000 historical leads with known outcomes to train a model properly. We've watched Series A teams spend five figures on predictive platforms with 400 total records. It never works. The consensus on r/sales and r/RevOps threads is consistent: teams buy the AI tool, realize they don't have enough training data, and end up building the rules-based model they should have started with.
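Checking readiness before buying anything is a count of leads with known outcomes. A sketch, assuming a hypothetical `outcome` field where only `"won"` and `"lost"` count as labeled, and using the 1,000-lead rule of thumb:

```python
MIN_LABELED_LEADS = 1_000  # rule-of-thumb minimum for training a predictive model


def recommend_approach(leads: list[dict]) -> str:
    """Suggest rules-based or predictive scoring from labeled-outcome volume.

    A lead counts as labeled when its hypothetical 'outcome' field is
    'won' or 'lost'; open leads don't help train a model.
    """
    labeled = sum(1 for lead in leads if lead.get("outcome") in {"won", "lost"})
    if labeled < MIN_LABELED_LEADS:
        return f"rules-based ({labeled} labeled leads, need {MIN_LABELED_LEADS})"
    return "predictive is viable"


print(recommend_approach([{"outcome": "won"}] * 400))
# rules-based (400 labeled leads, need 1000)
```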

When conditions are right, predictive works well. Pandora increased lead conversion by 30% with Salesforce Einstein, and HPE saw a 50% jump in MQLs with Marketo's predictive capabilities. But the cost barrier is real - HubSpot's predictive scoring requires Professional or Enterprise tiers at $800+/month, and enterprise platforms like 6sense run $25K-$100K+/year.

Whichever approach you choose, recalibrate every 3-6 months. Markets shift, buyer behavior changes, and a model trained on last year's data will quietly rot if you don't feed it fresh outcomes.
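One simple way to recalibrate a rules-based model is to compare each signal's conversion rate against the overall rate (its lift) on recent closed-loop outcomes. This is a generic technique, not how any particular vendor recalibrates; each lead is assumed to be a hypothetical dict with a `signals` set and a boolean `won`:

```python
from typing import Optional


def signal_lift(leads: list[dict], signal: str) -> Optional[float]:
    """Conversion rate of leads with `signal` divided by the overall rate.

    Lift well above 1.0 suggests the signal deserves more points; near 1.0
    suggests it adds little. Returns None when the ratio is undefined.
    """
    if not leads:
        return None
    base = sum(lead["won"] for lead in leads) / len(leads)
    with_signal = [lead for lead in leads if signal in lead["signals"]]
    if base == 0 or not with_signal:
        return None
    rate = sum(lead["won"] for lead in with_signal) / len(with_signal)
    return rate / base


leads = (
    [{"signals": {"pricing_page_visit"}, "won": True}] * 2
    + [{"signals": {"pricing_page_visit"}, "won": False}] * 2
    + [{"signals": {"blog_read"}, "won": False}] * 6
)
print(round(signal_lift(leads, "pricing_page_visit"), 2))  # 2.5
```

Small samples make lift noisy, so it's worth requiring a minimum number of leads per signal before moving any point value.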

Why Data Quality Makes or Breaks Your Model

None of this matters if your underlying data is wrong.

If emails bounce, your implicit scores only reflect leads who happened to have valid addresses - a biased sample, not your best leads. If job titles are outdated, your explicit scores grade people on roles they left eighteen months ago. Both scores look fine in the dashboard. Both are lying to you. We've seen teams spend weeks fine-tuning point values when the real problem was that a third of their contact records were stale.
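A basic staleness check catches records whose titles haven't been verified recently. A sketch, assuming hypothetical `id` and `title_verified_on` fields and a 30-day window to match a monthly refresh; a missing verification date is treated as stale:

```python
from datetime import date, timedelta


def stale_records(leads: list[dict], today: date, max_age_days: int = 30) -> list[str]:
    """IDs of leads whose title hasn't been verified within `max_age_days`.

    'id' and 'title_verified_on' are hypothetical field names.
    """
    cutoff = today - timedelta(days=max_age_days)
    return [
        lead["id"]
        for lead in leads
        if lead.get("title_verified_on") is None or lead["title_verified_on"] < cutoff
    ]


today = date(2026, 3, 1)
leads = [
    {"id": "a", "title_verified_on": date(2026, 2, 20)},
    {"id": "b", "title_verified_on": date(2024, 9, 1)},
    {"id": "c"},
]
print(stale_records(leads, today))  # ['b', 'c']
```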

Prospeo's 98% email accuracy and 7-day data refresh cycle mean your scoring model is built on current reality, not stale records. The 83% enrichment match rate returns 50+ data points per contact, filling the firmographic gaps that make explicit scores unreliable. Snyk's team saw bounce rates drop from 35-40% to under 5% after switching, which meant their behavioral tracking actually covered their full pipeline instead of a biased slice of it.

Before you invest a single hour tuning point values, verify your contact data with an email validator.


Accurate scoring starts at $0.01 per verified email.

FAQ

How many scoring criteria should I start with?

Five to seven criteria that predict roughly 80% of your conversions. Keep it simple, review quarterly with sales feedback, and add variables only when you can tie them to closed-won outcomes. Over-engineering on day one creates maintenance debt without improving accuracy.

What's a good MQL threshold?

Aim for 50-75 points on a 100-point scale, capturing the top 20% of leads. If your MQL-to-SQL rate stays below 25%, raise the bar. If pipeline is thin, lower it by 5-10 points and watch conversion rates closely over a full quarter before making another adjustment.

How do I keep my scoring data accurate?

Use enrichment tools to fill missing firmographic fields and verify emails before they enter your model. Refresh contact data at least monthly - a lead who changed jobs six months ago is being scored on a role they no longer hold. For teams running CRM-based enrichment workflows, Prospeo's 7-day refresh cycle and 92% API match rate handle this automatically.

Should I combine explicit and implicit scores into one number?

No. Keep them as two separate dimensions and route leads using a fit x intent matrix. A single blended score hides whether a lead is high-fit/low-intent or low-fit/high-intent, and those two scenarios require completely different follow-up actions.