The Lead Qualification Strategy Guide With Actual Numbers

Your SDR just spent 45 minutes on a discovery call with someone who downloaded three whitepapers and scored "high" in your system. No budget. No authority. Reports to someone who reports to someone who might care. That's not a qualification failure - it's a scoring model built on bad signals and worse data. 67% of lost sales trace back to sales teams that don't qualify leads before starting the sales process, and 61% of B2B marketers send every lead straight to sales even though only 27% of those leads are actually qualified.

What You Need (Quick Version)

- Pick your framework by deal size and complexity. BANT for deals under $10K with sub-30-day cycles, MEDDIC for $50K+ deals with 90+ day cycles, SPICED for SaaS/subscription and PLG motions.

- Build a scoring model with real point values. The rubric table below gives you exact numbers - not "weight engagement signals appropriately." (If you want a deeper build, use this lead scoring system guide.)

- Set your MQL threshold based on sales capacity, not a best-practice blog post. Your threshold is a staffing equation, not a magic number.

Five Mistakes Killing Your Qualification

No shared definition of "qualified." Marketing says it's a form fill. Sales says it's a confirmed meeting. Neither wrote it down. 50% of prospects aren't a good fit for your business - and without a shared definition, you can't filter them.

Treating every lead the same. Only 5% of sales reps say they receive high-quality leads from marketing. That gap exists because most teams skip the work to qualify and prioritize prospects before handing them off.

Buying lists and calling them "leads." Purchased contacts degrade fast, carry low intent, and tank your domain reputation. They're not leads - they're liabilities. (If this is a recurring issue, start with a lead data enrichment workflow.)

Scoring email opens. Apple Mail Privacy Protection pre-loads tracking pixels for ~50% of recipients. Your "engaged" leads may have never seen your email.

No disqualification criteria. Teams obsess over who qualifies and never define who doesn't. If you don't have negative scoring rules, you're letting bad-fit prospects consume your pipeline. Use a dedicated negative lead scoring model so reps stop arguing edge cases.

Which Framework to Use

Here's the thing: BANT is fine for transactional deals. Using it for enterprise is malpractice. You wouldn't diagnose a complex medical condition with a yes/no checklist, and you shouldn't qualify a $100K deal by asking "do you have budget?"

| Framework | Best For | Deal Size | Cycle Length | Core Question |
|---|---|---|---|---|
| BANT | Transactional, SMB | Under $10K | Under 30 days | Can they buy now? |
| MEDDIC | Enterprise, multi-stakeholder | $50K+ | 90+ days | How do they buy? |
| SPICED | SaaS, PLG, consultative | Varies | Varies | Why change now? |

CHAMP deserves a mention - it flips BANT by leading with Challenges before Budget, which feels more natural in consultative conversations. But it's not different enough from BANT to warrant its own implementation. (If you do want it, see the CHAMP framework.)

The smartest approach we've seen: use BANT as a first screen, then deepen with SPICED or MEDDIC once a lead passes initial qualification. This gives you speed on the front end and rigor where it matters.
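As a rough illustration, the cutoffs in the comparison table can be written as a small selection function. This is a minimal sketch, not a prescribed implementation: the function name `pick_framework`, the `subscription_or_plg` flag, the choice to check SPICED first, and the fallback for deals that sit between the BANT and MEDDIC bands are all assumptions, since the guide gives no explicit rule for that middle range.

```python
def pick_framework(deal_size_usd: float, cycle_days: int,
                   subscription_or_plg: bool = False) -> str:
    """Suggest a qualification framework from deal size and cycle length."""
    # Assumption: SaaS/subscription and PLG motions go to SPICED regardless of size.
    if subscription_or_plg:
        return "SPICED"
    # From the table: BANT for deals under $10K with sub-30-day cycles.
    if deal_size_usd < 10_000 and cycle_days < 30:
        return "BANT"
    # From the table: MEDDIC for $50K+ deals with 90+ day cycles.
    if deal_size_usd >= 50_000 and cycle_days >= 90:
        return "MEDDIC"
    # Assumption: anything in between uses the layered approach described above.
    return "BANT screen, then SPICED or MEDDIC"


print(pick_framework(5_000, 14))
print(pick_framework(120_000, 180))
print(pick_framework(25_000, 60))
```

Returning a plain label keeps the rule easy to audit; a real routing setup would likely live in your CRM's workflow rules rather than in a script.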

Bad data doesn't just waste sends - it actively breaks your scoring model. Every bounced email costs you −25 points on a lead that might be a perfect fit. Prospeo's 98% email accuracy and 7-day refresh cycle mean your qualification signals reflect real buyer behavior, not stale records.

Stop disqualifying good leads because your data is bad.

Build a Scoring Model (With Actual Numbers)

Most scoring guidance tells you to "assign points based on engagement." That's useless. Here's a rubric you can actually implement today. (For more examples, see B2B lead scoring.)

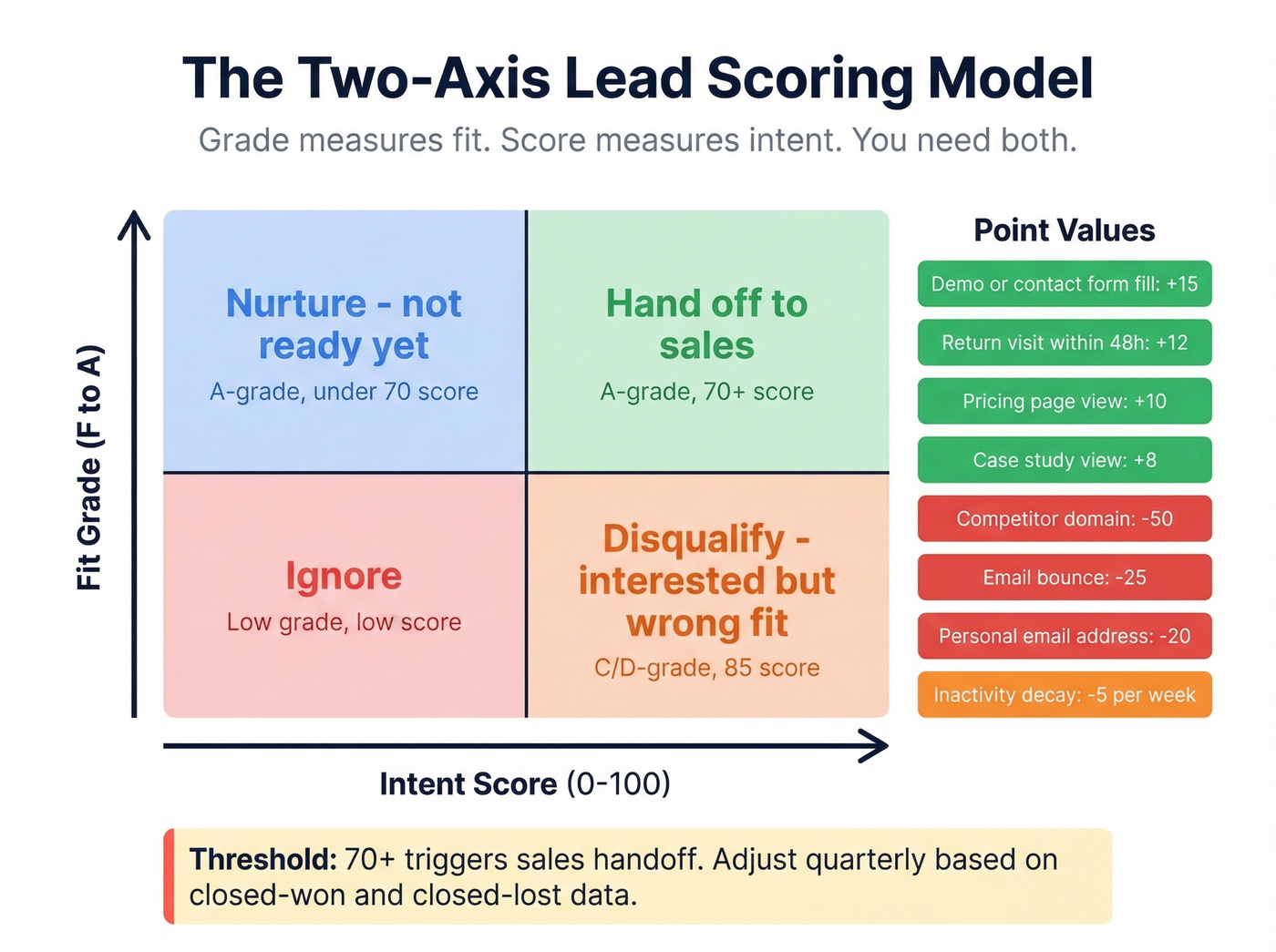

The foundation is a two-axis model. Grade (A through F) measures fit - will this company ever buy from you? Score (0-100) measures intent - are they buying now? A C-grade lead with a score of 85 is someone who's very interested but will never close. Disqualify them. An A-grade lead with a score of 30 needs nurturing, not a sales call.
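The two-axis logic can be sketched as a routing function. The names here (`route_lead`, `FIT_GRADES`) are hypothetical, and treating only A and B as "fit" grades is an assumption; the guide only says a C-grade lead should be disqualified. The 70-point handoff value is the starting threshold the guide suggests for most teams.

```python
# Assumption: only A and B grades count as a fit worth pursuing.
FIT_GRADES = {"A", "B"}
# Starting handoff threshold suggested in this guide; adjust to your capacity.
HANDOFF_SCORE = 70


def route_lead(grade: str, score: int) -> str:
    """Route a lead using fit grade (A-F) first, then intent score (0-100)."""
    if grade.upper() not in FIT_GRADES:
        # Poor fit: high interest does not rescue a lead that will never close.
        return "disqualify"
    if score >= HANDOFF_SCORE:
        return "sales_handoff"
    # Good fit, low intent: keep nurturing instead of booking a call.
    return "nurture"


print(route_lead("C", 85))
print(route_lead("A", 30))
```

Checking fit before intent is the point of the design: the score is only consulted once the grade says the account is worth the effort.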

| Signal | Points | Category |
|---|---|---|
| Pricing page view | +10 | Behavioral |
| Demo/contact form fill | +15 | Behavioral |
| Case study view | +8 | Behavioral |
| Return visit within 48h | +12 | Behavioral |
| Email bounce | −25 | Negative |
| Personal email address | −20 | Negative |
| Competitor domain | −50 | Negative |

Add time-based decay: −5 points per week of inactivity. Without decay, a lead who visited your pricing page six months ago still looks "hot" in your CRM. They're not. (If you want a ready model, use website visitor scoring.)
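Here is one way the rubric and the weekly decay might be combined. The point values and the 5-point decay come from this guide; the signal keys, the function name `score_lead`, and the clamping to the 0-100 range are assumptions made for the sketch.

```python
from typing import Iterable

# Point values from the rubric table; the key names are hypothetical.
RUBRIC = {
    "pricing_page_view": 10,
    "demo_form_fill": 15,
    "case_study_view": 8,
    "return_visit_48h": 12,
    "email_bounce": -25,
    "personal_email": -20,
    "competitor_domain": -50,
}
DECAY_PER_WEEK = 5  # points removed per week of inactivity


def score_lead(signals: Iterable[str], weeks_inactive: int = 0) -> int:
    """Sum rubric points, apply inactivity decay, and clamp to 0-100."""
    raw = sum(RUBRIC[s] for s in signals)
    decayed = raw - DECAY_PER_WEEK * weeks_inactive
    # Assumption: scores are clamped so they stay on the 0-100 scale.
    return max(0, min(100, decayed))


fresh = score_lead(["pricing_page_view", "demo_form_fill", "return_visit_48h"])
stale = score_lead(["pricing_page_view"], weeks_inactive=26)
print(fresh, stale)
```

In this sketch a single pricing page view from roughly six months ago decays to zero, which is the behavior the decay rule is meant to produce.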

The threshold that works for most teams: 70+ triggers a sales handoff. But don't treat that as gospel - treat it as a starting hypothesis you'll adjust quarterly.

Build a feedback loop. Pull your last 90 days of closed-won and closed-lost deals, check which signals actually predicted outcomes, and re-weight. If high scorers aren't converting, your signals are wrong. This is the step most teams skip, and it's the one that matters most.
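A simple starting point for that feedback loop is to compare win rates by signal across closed deals. The sketch below assumes you can export each deal as a set of signals plus a won/lost flag; `win_rate_by_signal` and the sample data are hypothetical, and a real analysis would also need enough deals per signal to be meaningful.

```python
from collections import defaultdict


def win_rate_by_signal(deals):
    """deals: iterable of (signals, won) pairs. Returns {signal: win rate}."""
    seen = defaultdict(int)
    won_count = defaultdict(int)
    for signals, won in deals:
        # Count each signal once per deal, even if it fired several times.
        for signal in set(signals):
            seen[signal] += 1
            if won:
                won_count[signal] += 1
    return {signal: won_count[signal] / seen[signal] for signal in seen}


# Hypothetical export of closed-won (True) and closed-lost (False) deals.
closed_deals = [
    ({"pricing_page_view", "case_study_view"}, True),
    ({"pricing_page_view"}, True),
    ({"case_study_view"}, False),
    ({"case_study_view"}, False),
]
rates = win_rate_by_signal(closed_deals)
for signal, rate in sorted(rates.items(), key=lambda kv: kv[1], reverse=True):
    print(f"{signal}: {rate:.0%}")
```

Signals whose win rate sits well below your overall close rate are candidates for fewer points; signals well above it are candidates for more.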

Stop scoring email opens. As noted above, Apple Mail Privacy Protection pre-loads tracking pixels for ~50% of recipients, so an "open" often means nothing. Weight on-site behavior instead.

How to Set Your MQL Threshold

Your MQL threshold is a sales capacity calculation, not a best-practice number. If your team can handle 200 leads per month and you're sending 400, either hire or raise the threshold. One team tightened their title and seniority filters while loosening activity requirements and saw MQL-to-meeting rates jump 13%. The lesson: fit matters more than engagement volume.
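The staffing equation can be made concrete: given last month's lead scores and the number of leads sales can actually work, find the lowest threshold that fits. This is a minimal sketch under the assumption that scores are integers from 0 to 100; `capacity_threshold` and the sample scores are hypothetical.

```python
def capacity_threshold(scores, monthly_capacity, floor=0, ceiling=100):
    """Return the lowest threshold whose qualifying lead count fits capacity.

    Returns None if even the ceiling lets through more leads than sales
    can handle, which points to a hiring or fit-filter problem instead.
    """
    for threshold in range(floor, ceiling + 1):
        qualifying = sum(1 for s in scores if s >= threshold)
        if qualifying <= monthly_capacity:
            return threshold
    return None


# Hypothetical scores for one month of leads; sales can work three of them.
last_month_scores = [90, 85, 72, 70, 65, 40, 30]
print(capacity_threshold(last_month_scores, monthly_capacity=3))
```

Rerunning this each quarter against fresh scores keeps the threshold tied to headcount rather than to a fixed number.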

Why Data Quality Makes or Breaks Your Model

If 30% of your emails bounce, your engagement signals are noise. An email bounce carries −25 points in the rubric above - meaning bad data doesn't just waste sends, it actively corrupts your scores by penalizing leads who might be perfect fits with outdated contact info. (To diagnose it fast, start with an email bounce back audit.)

Prospeo verifies emails in real time with 98% accuracy on a 7-day refresh cycle, so your scoring model operates on clean inputs instead of stale records. The free tier gives you 75 emails per month - enough to test whether your bounce rate is a data problem or a targeting problem.

Account-Level Qualification

Individual lead scoring isn't enough anymore. Buying groups average 9+ members, sales cycles run 7-8 months on average, and 61% of buyers are deep into their journey before they ever contact a vendor. If you're scoring one contact at a time, you're seeing a fraction of the picture.

Score accounts, not just contacts. When three people from the same company view your case studies in the same week, that's a buying signal that no individual lead score captures. Look for multi-threaded engagement: different roles, different content types, compressed timeframes. (This is the core of account scoring.)

Tools like Prospeo surface verified contacts for multiple stakeholders at target accounts, giving your scoring model multi-threaded inputs instead of single-contact guesswork.

Defining Lead Qualification Rules That Scale

Most BANT questions - budget range, company size, tech stack - are answerable through pre-call research. Your discovery call should focus on things you can't find online. A typical B2B deal involves 7-10 decision-makers, so buying dynamics matter more than firmographics.

Codify your lead qualification rules into explicit criteria that every rep follows - minimum company size, required tech stack overlap, confirmed decision-making authority - so qualifying and prioritizing leads becomes a repeatable process rather than gut instinct. This consistency is what allows you to qualify leads at scale without sacrificing accuracy. (If you need a template, use a lead qualification checklist.)
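Written as code, explicit criteria might look like the sketch below. The three rule types come from the paragraph above; the specific values (`MIN_COMPANY_SIZE`, `REQUIRED_STACK`, `MIN_STACK_OVERLAP`) and the `Lead` fields are placeholders you would replace with your own definitions.

```python
from dataclasses import dataclass

# Placeholder criteria: substitute the values your team agrees on.
MIN_COMPANY_SIZE = 50
REQUIRED_STACK = {"salesforce", "segment"}
MIN_STACK_OVERLAP = 1


@dataclass
class Lead:
    company_size: int
    tech_stack: set
    authority_confirmed: bool


def failed_rules(lead: Lead) -> list:
    """Return the names of every rule the lead fails (empty list = qualified)."""
    failures = []
    if lead.company_size < MIN_COMPANY_SIZE:
        failures.append("company_size")
    if len(lead.tech_stack & REQUIRED_STACK) < MIN_STACK_OVERLAP:
        failures.append("tech_stack_overlap")
    if not lead.authority_confirmed:
        failures.append("authority")
    return failures


print(failed_rules(Lead(200, {"salesforce", "hubspot"}, True)))
print(failed_rules(Lead(10, {"zoho"}, False)))
```

Returning the list of failed rules, rather than a bare yes/no, gives reps a written reason for each disqualification and cuts down on edge-case debates.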

Five questions worth asking:

- Walk me through your current process for [problem you solve].

- What's the biggest challenge with that process right now?

- Who else is involved in evaluating a solution like this?

- What's driving the timeline - is there an event or deadline pushing this?

- What other solutions are you evaluating, and what's standing out?

Do your firmographic qualification before the call. Use data tools to confirm company size, tech stack, and role seniority. Then spend your 30 minutes on the questions that actually predict whether a deal closes.

Benchmarks - What Good Looks Like

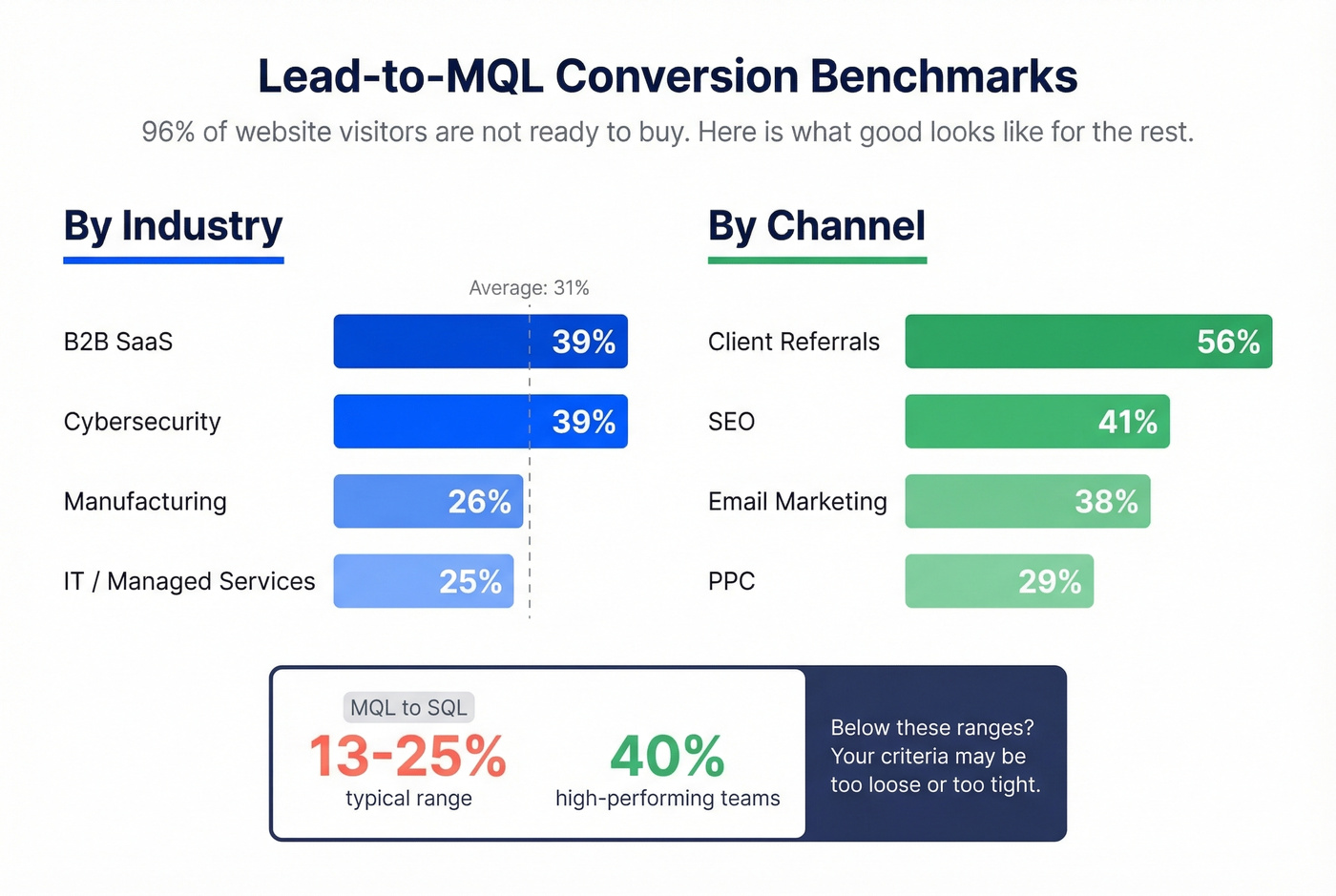

96% of website visitors aren't ready to buy. That's not a failure - it's the baseline reality. The question is whether your lead qualification strategy is converting the right 4%.

The average lead-to-MQL conversion rate is 31% across industries. Here's how that breaks down by industry and by channel:

| Industry | Lead→MQL Rate |
|---|---|
| B2B SaaS | 39% |
| Cybersecurity | 39% |
| IT / Managed Services | 25% |
| Manufacturing | 26% |

| Channel | Lead→MQL Rate |
|---|---|
| SEO | 41% |
| PPC | 29% |
| Email Marketing | 38% |
| Client Referrals | 56% |

For MQL-to-SQL, typical ranges land between 13-25%, with high-performing teams hitting 40%. If your numbers fall below these ranges, your qualification criteria may be too loose - or too tight. Loose criteria flood sales with junk. Tight criteria starve them.
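To check your own funnel against those ranges, a small calculation is enough. The 13%, 25%, and 40% cutoffs come from the benchmarks above; the function names, band labels, and the handling of rates between 25% and 40% are assumptions for this sketch.

```python
def mql_to_sql_rate(mqls: int, sqls: int) -> float:
    """Share of MQLs that sales accepted as SQLs."""
    if mqls <= 0:
        raise ValueError("mqls must be positive")
    return sqls / mqls


def benchmark_band(rate: float, low: float = 0.13, high: float = 0.25,
                   top: float = 0.40) -> str:
    """Place a conversion rate relative to the benchmark ranges."""
    if rate < low:
        return "below typical range"
    if rate <= high:
        return "typical range"
    if rate < top:
        # Assumption: the guide does not name this band explicitly.
        return "above typical range"
    return "high-performing"


rate = mql_to_sql_rate(mqls=200, sqls=40)
print(f"{rate:.0%}: {benchmark_band(rate)}")
```

A result below the typical range is only a prompt to investigate; as noted above, the cause can be criteria that are too loose or too tight.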

Buying groups average 9+ stakeholders, and scoring one contact per account is guesswork. Prospeo gives you verified emails and direct dials for multiple decision-makers at target accounts - 300M+ profiles with 30+ filters for title, seniority, department, and buyer intent.

Qualify accounts, not just contacts - with data that's actually current.

FAQ

What's the difference between MQL and SQL?

An MQL meets marketing's qualification criteria - a combination of fit grade and engagement score that crosses a defined threshold. An SQL has been vetted by sales through discovery, with confirmed budget, authority, need, and timeline. The handoff happens when a lead crosses your scoring threshold and sales accepts it after an initial conversation.

How often should you recalibrate your scoring model?

Quarterly at minimum. Pull your last 90 days of closed-won and closed-lost deals, check which scoring signals predicted outcomes, and re-weight accordingly. If high scorers aren't converting, your signals are wrong. If low scorers are closing, you're missing important buying indicators.

Can you qualify leads without a CRM?

Yes, but it doesn't scale. A spreadsheet with fit grade and behavior score columns works at low volume. Beyond 50-100 leads per month, you need infrastructure to qualify leads at scale - and Prospeo's enrichment returns 50+ data points per contact to feed your model automatically.