Marketing Lead Scoring: The Practitioner's Guide to Models That Actually Work

Marketing says they sent 200 MQLs to sales last month. Sales says they got 200 names. That gap isn't a communication problem - it's a scoring problem. Only 27% of leads sent to sales are actually qualified, which means roughly three out of four "marketing qualified" leads waste your reps' time.

We've watched this play out at dozens of companies. The fix isn't more meetings between marketing and sales. It's a shared scoring model with shared definitions, built on clean data (and solid CRM hygiene).

What You Need (Quick Version)

Fewer than 500 leads a month? Build a 100-point model in a spreadsheet. Split it into a fit score and an activity score. Set your MQL threshold at 50, SQL at 80. Review monthly with sales.

If you need software, HubSpot Marketing Hub Professional starts at $800/mo and supports rules-based scoring plus predictive scoring. But before any of that, verify your contact data. Scoring garbage produces garbage - and we'll come back to why that matters more than most teams realize.

What Scoring Actually Does

Lead scoring assigns a numeric value - typically 0 to 100 - to each lead based on two signal types. Explicit signals reflect who the lead is: title, company size, industry, geography. Implicit signals reflect what they've done: pages visited, forms submitted, emails engaged with, demos requested.

It's not a magic formula that predicts who'll buy. It's a prioritization framework that tells reps where to spend their next hour. 44% of organizations use lead scoring today, which means the majority still route leads by gut feel or chronological order. Both are worse than even a basic model (and a simple lead scoring system beats vibes every time).

Why Prioritization Matters

Companies using lead scoring see 138% ROI versus 78% without it. Speed-to-lead compounds the effect - following up within the first hour makes you roughly 7x more likely to qualify the lead. And 70% of prospects are lost due to inadequate follow-up, which is why SLAs matter more than the score itself. Scoring without routing is just math homework.

Here's the cost context most articles skip. The average B2B sales team runs 8.3 tools at $187/rep/month, with 73% reporting tool overlap that wastes $2,340/rep/year. Lead scoring doesn't need to be another tool on the pile. A spreadsheet model that actually gets used beats a $35,000/year platform that sits idle (especially if you're already trying to reduce the cost of your sales tech stack).

Build Your Scoring Model

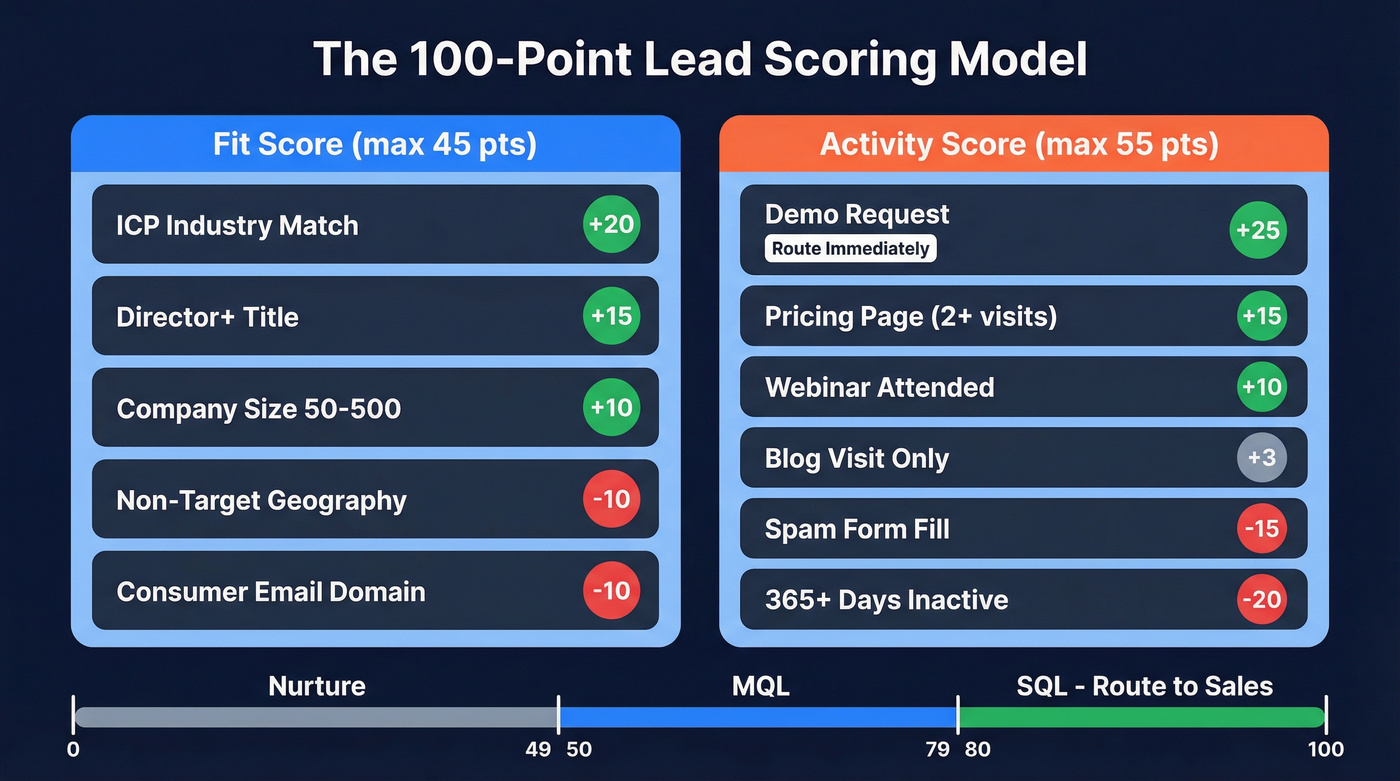

The table below splits the criteria into fit and activity, and uses negative points both to penalize disqualifiers and to apply decay (see negative lead scoring for more rule examples).

| Dimension | Criteria | Weight | Points | Notes |
|---|---|---|---|---|
| Fit | ICP industry match | High | +20 | Firmographic |
| Fit | Director+ title | High | +15 | Persona match |
| Fit | 50-500 employees | Medium | +10 | Size fit |
| Fit | Non-target geography | Negative | -10 | Disqualifier |
| Fit | Consumer email domain (gmail, yahoo) | Negative | -10 | B2B disqualifier |
| Activity | Demo request | Critical | +25 | Route immediately |
| Activity | Pricing page (2+ visits) | High | +15 | High intent |
| Activity | Webinar attended | Medium | +10 | Engagement |
| Activity | Blog visit only | Low | +3 | Low signal |
| Activity | Keyboard-mash form fill (asdf, test@test.com) | Negative | -15 | Spam detection |
| Activity | 365+ days inactive | Negative | -20 | Decay / removal |

With this model, a Director at an ICP-industry company with 50-500 employees who attended a webinar and visited the pricing page twice scores 70 (20 + 15 + 10 for fit, 10 + 15 for activity) - an MQL. Add a demo request (+25) and they hit 95 - route to sales immediately.
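As a rough illustration, the table can be expressed as two lookup dictionaries and a small scoring function. This is a minimal sketch, not a reference implementation: the rule keys (`icp_industry`, `pricing_page_2plus`, and so on) and the function names are made up for the example, and clamping the total to 0-100 is an assumption. The worked example counts the 50-500 employee size band as part of the ICP match, which is what makes the totals come out to 70 and 95.

```python
# Point values copied from the scoring table; the key names are hypothetical.
FIT_RULES = {
    "icp_industry": 20,
    "director_plus_title": 15,
    "employees_50_500": 10,
    "non_target_geo": -10,
    "consumer_email_domain": -10,
}
ACTIVITY_RULES = {
    "demo_request": 25,
    "pricing_page_2plus": 15,
    "webinar_attended": 10,
    "blog_visit_only": 3,
    "keyboard_mash_form": -15,
    "inactive_365_days": -20,
}


def score_lead(fit_signals, activity_signals):
    """Return (fit, activity, total). Unknown signal names raise KeyError."""
    fit = sum(FIT_RULES[name] for name in fit_signals)
    activity = sum(ACTIVITY_RULES[name] for name in activity_signals)
    # Assumption: keep the total inside the 0-100 range the model is built around.
    total = max(0, min(100, fit + activity))
    return fit, activity, total


def stage(total, mql_threshold=50, sql_threshold=80):
    """Map a total score to a lifecycle stage using the 50 / 80 thresholds."""
    if total >= sql_threshold:
        return "SQL"
    if total >= mql_threshold:
        return "MQL"
    return "nurture"


director_fit = {"icp_industry", "director_plus_title", "employees_50_500"}
engaged = {"webinar_attended", "pricing_page_2plus"}

fit, activity, total = score_lead(director_fit, engaged)
print(fit, activity, total, stage(total))

_, _, with_demo = score_lead(director_fit, engaged | {"demo_request"})
print(with_demo, stage(with_demo))
```

Keeping fit and activity as separate sums, rather than one flat list, makes it easy to report both sub-scores to sales alongside the total.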

Calibrating Point Values

Don't guess at the numbers. Use your conversion data. Take your lead-to-customer conversion rate as a baseline - say 40 customers from 280 leads gives you a 14% baseline. Now look at individual actions. If leads who fill out a form close at 25%, that's 1.8x your baseline. Assign 18 points, or use whatever scale maps to your 100-point budget. If leads from a particular channel close at 8%, assign negative points or zero.
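The arithmetic above can be sketched in a few lines. The function names and the `points_per_lift` scale (10 points per 1.0x of lift, so 1.8x maps to 18) are assumptions chosen to reproduce the example; returning zero for below-baseline segments is one of the two options the text mentions, not the only one.

```python
def conversion_rate(customers, leads):
    """Share of leads that became customers."""
    if leads <= 0:
        raise ValueError("leads must be positive")
    return customers / leads


def calibrated_points(segment_rate, baseline_rate, points_per_lift=10):
    """Convert a segment's lift over baseline into points.

    Assumption: segments at or below baseline get 0 here; a negative
    value would be an equally valid choice.
    """
    lift = segment_rate / baseline_rate
    if lift <= 1:
        return 0
    return round(lift * points_per_lift)


# 40 customers from 280 leads, rounded to 14% as in the example.
baseline = round(conversion_rate(40, 280), 2)

print(calibrated_points(0.25, baseline))  # form-fill leads closing at 25%
print(calibrated_points(0.08, baseline))  # a channel closing at 8%
```

Rerunning this each quarter with fresh closed-won and closed-lost counts gives a repeatable way to adjust point values rather than debating them.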

This conversion-rate calibration method separates a real model from a guess-and-check exercise. Run it quarterly with actual closed-won and closed-lost data (and if you need more structure around it, use a lead qualification framework so scoring aligns with how deals actually close).

Score Decay

Activity scores should degrade 10-20% per month of inactivity. A lead who downloaded a whitepaper 11 months ago and went silent isn't the same lead who downloaded it last week. Without decay, your MQL list fills up with ghosts (this is also where B2B contact data decay quietly wrecks your pipeline).
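One way to apply decay is to compound it monthly. This is a sketch under assumptions: the text gives a 10-20% range but does not say whether decay compounds or is linear, so the compounding form and the 15% default below are illustrative choices, and `decayed_activity_score` is a hypothetical name.

```python
def decayed_activity_score(activity_score, months_inactive, monthly_decay=0.15):
    """Reduce an activity score by `monthly_decay` for each full inactive month.

    Assumption: decay compounds, so each month removes a share of what is left.
    Only the activity score decays; fit points are left untouched.
    """
    if not 0 <= monthly_decay <= 1:
        raise ValueError("monthly_decay must be between 0 and 1")
    if months_inactive < 0:
        raise ValueError("months_inactive cannot be negative")
    return activity_score * (1 - monthly_decay) ** months_inactive


# A 25-point activity score from last week vs. the same score 11 months stale.
print(round(decayed_activity_score(25, 0), 1))
print(round(decayed_activity_score(25, 11), 1))
```

With compounding, the score approaches zero without ever going negative, which keeps the explicit 365+ days inactive penalty in the table as the actual removal rule.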

One Model Is Enough

Some guides recommend running multiple scoring models - one per persona, one for upsell, one for new business. For teams under 5,000 leads/month, a single model with a fit/activity split handles the job. Multiple models create maintenance debt that nobody pays down.

Your scoring model breaks the moment your contact data is wrong. Prospeo's 5-step verification delivers 98% email accuracy and refreshes every 7 days - so your fit scores reflect reality, not stale CRM records.

Routing Rules and SLAs

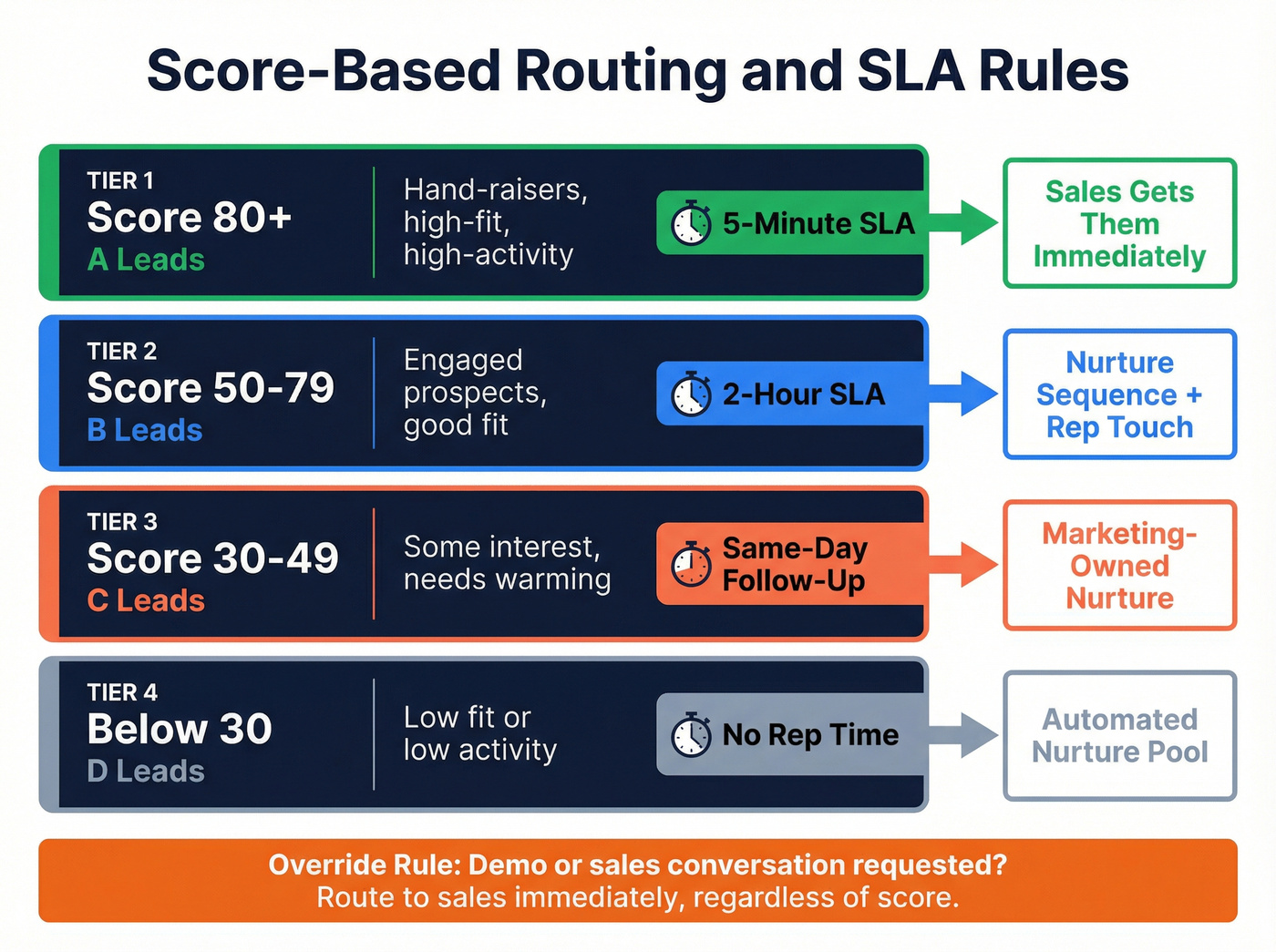

A score without a routing rule is just a number in a spreadsheet. The scoring model is a contract between marketing and sales - shared definitions, shared thresholds, shared accountability (this is exactly what RevOps lead scoring is supposed to govern).

- Score 80+ (A leads): 5-minute response SLA. Hand-raisers or high-fit, high-activity leads. Sales gets them immediately.

- Score 50-79 (B leads): 2-hour response window. Nurture sequences plus a rep touch.

- Score 30-49 (C leads): Same-day follow-up. Marketing-owned, automated nurture.

- Below 30: Nurture pool. Don't waste rep time.

One non-negotiable: if someone requests a demo or a sales conversation, route to sales immediately regardless of score. Full stop.
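The tiers and the demo override above can be sketched as a single routing function. The function name, the returned field names, and the choice to treat a demo request as an A lead are assumptions made for illustration; the thresholds and SLAs come from the list.

```python
def route(score, requested_demo=False):
    """Return the tier, owner, and response SLA for a lead."""
    # Demo or sales-conversation requests bypass the score entirely.
    if requested_demo or score >= 80:
        return {"tier": "A", "owner": "sales", "sla": "5 minutes"}
    if score >= 50:
        return {"tier": "B", "owner": "sales + nurture", "sla": "2 hours"}
    if score >= 30:
        return {"tier": "C", "owner": "marketing", "sla": "same day"}
    return {"tier": "nurture", "owner": "marketing", "sla": None}


for score, demo in [(95, False), (70, False), (35, False), (12, False), (12, True)]:
    print(score, demo, route(score, requested_demo=demo))
```

Checking the override first means a low-scoring hand-raiser can never fall through to the nurture pool.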

Review the model monthly with sales. Not a 90-minute meeting - a 20-minute check. Which MQLs converted? Which ones were junk? The threads on r/salesops and r/revenue_operations are consistent: the most common complaint about lead scoring isn't the model itself, it's that sales never bought into it. The monthly sync fixes that.

Why Most Scoring Programs Fail

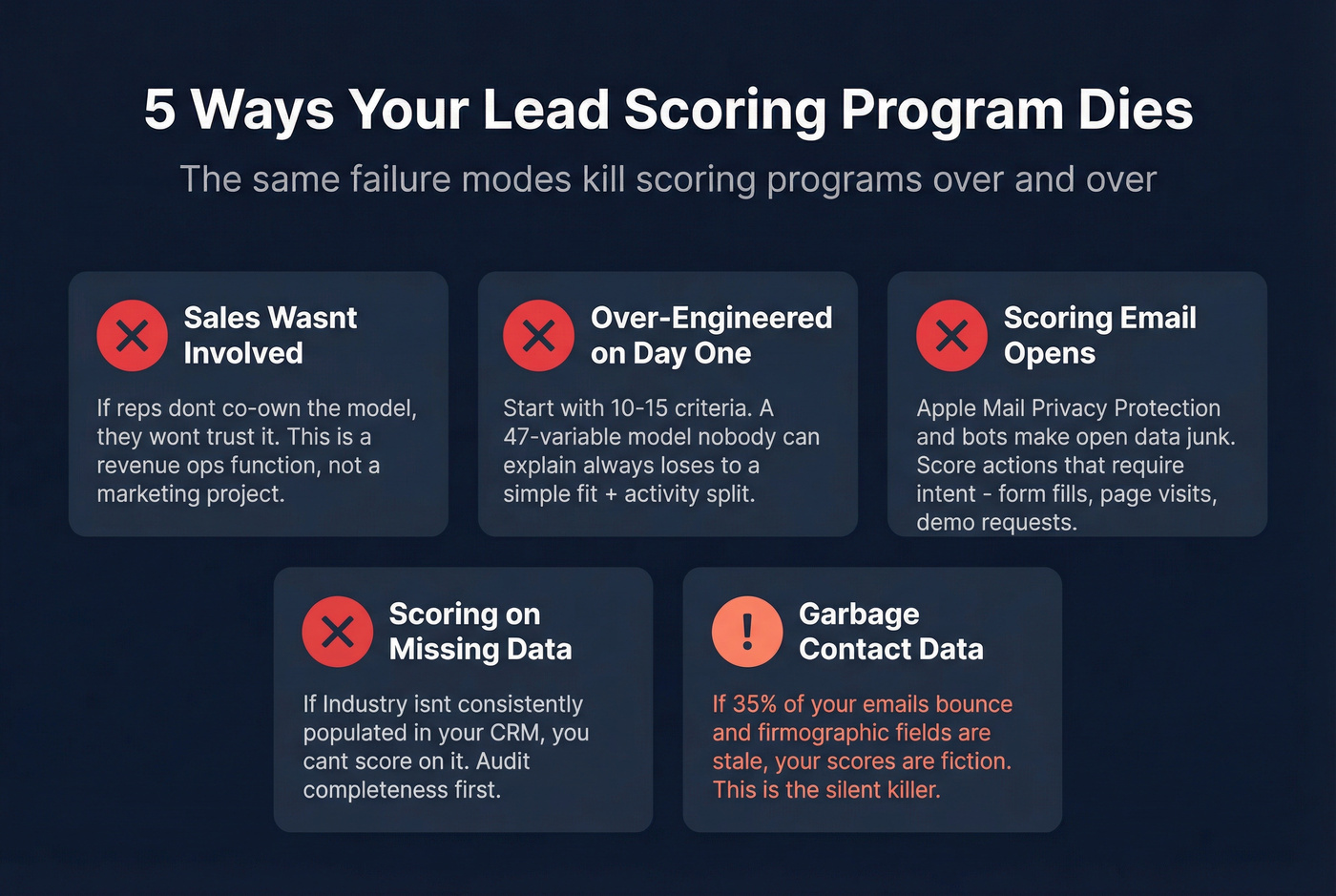

The same five failure modes kill scoring programs repeatedly.

Sales wasn't involved. If reps don't co-own the model, they won't trust it. This is a revenue operations function, not a marketing project (and it usually improves with better revenue operations alignment).

Over-engineered on day one. Start with 10-15 criteria you know work. You can always add complexity later. We've seen teams spend three months building a 47-variable model that performed worse than a simple fit + activity split because nobody could explain what the scores meant.

Scoring email opens. Here's the thing - email opens are a junk signal. Stop scoring them. Apple Mail Privacy Protection pre-fetches content, security scanners and bots load tracking pixels and click links automatically, and the data is fundamentally unreliable. Score form fills, page visits, demo requests - actions that require intent.

Scoring on data you don't capture. If "Industry" isn't consistently populated in your CRM, you can't score on it. Audit data completeness before building criteria around it.
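A completeness audit can be as simple as counting non-empty values per field in a CRM export. The sketch below assumes records arrive as a list of dictionaries; the field names, the `scorable_fields` helper, and the 80% cutoff are hypothetical and should be replaced with whatever your team agrees on.

```python
def field_completeness(records, fields):
    """Return the share of records with a non-empty value for each field."""
    total = len(records)
    result = {}
    for name in fields:
        # Treat missing keys, None, and whitespace-only strings as empty.
        filled = sum(1 for r in records if str(r.get(name) or "").strip())
        result[name] = filled / total if total else 0.0
    return result


def scorable_fields(records, fields, min_completeness=0.8):
    """Fields populated often enough to build scoring criteria on (assumed cutoff)."""
    rates = field_completeness(records, fields)
    return [name for name in fields if rates[name] >= min_completeness]


crm_export = [
    {"industry": "SaaS", "title": "VP Marketing", "employees": 120},
    {"industry": "", "title": "Director of Sales", "employees": 300},
    {"industry": None, "title": "Head of RevOps", "employees": 75},
    {"industry": "Fintech", "title": "CMO", "employees": None},
]

print(field_completeness(crm_export, ["industry", "title", "employees"]))
print(scorable_fields(crm_export, ["industry", "title", "employees"]))
```

Any field that falls below the cutoff is a candidate for enrichment or for removal from the model, not for scoring as-is.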

Garbage contact data. The failure mode nobody talks about. If 35% of your emails bounce and your firmographic fields are stale, your scores are fiction. This one deserves its own section.

Data Quality Makes or Breaks Scoring

You can build a perfect model, calibrate it with conversion data, get sales buy-in, set up routing - and it'll still fail if a third of your contact records are wrong. Stale emails, outdated titles, companies that were acquired two years ago. The model scores what it sees, and if what it sees is junk, the output is fiction.

This is where data verification earns its keep. Before you score a single lead, run your list through email verification to strip out bounces, catch-all traps, and stale records. Prospeo handles this at 98% accuracy on a 7-day refresh cycle across 143M+ verified addresses - the free tier gives you 75 emails/month, enough to audit whether your existing data is as clean as you think (and if you want a broader checklist, start with a CRM verification pass).

Garbage contact data is the scoring failure mode nobody talks about. Prospeo enriches CRM records with 50+ data points at a 92% match rate - job title, company size, industry - so every criterion in your model actually has data behind it.

Tools Worth Considering in 2026

Let's be honest: predictive scoring is overkill for 90% of B2B teams. If you've got clean data and fewer than a few thousand leads per month, rules-based scoring wins on simplicity, transparency, and time-to-value. Skip the ML pitch until you've outgrown a spreadsheet (or until you're ready for AI lead qualification with real routing logic).

| Tool | Type | Starting Price | Best For |
|---|---|---|---|
| HubSpot Marketing Hub | Rules + predictive | $800/mo (Professional) | All-in-one scoring + automation |
| Salesforce Einstein | Predictive (ML) | ~$50-75/user/mo add-on | Existing Salesforce shops |
| ActiveCampaign | Rules + automation | ~$49/mo | SMB and mid-market automation |
| 6sense | ABM + intent-based | ~$35,000/yr | Enterprise ABM with intent budget |
| Marketo Engage | Rules + advanced | ~$1,500-3,000+/mo | Enterprise marketing automation |

HubSpot is the default recommendation for most teams - it lives inside the same platform as your email, forms, and CRM. Salesforce Einstein makes sense only if you're already deep in the Salesforce ecosystem. 6sense is powerful but requires a 3-6 month implementation and a budget north of $35K/year, so skip it unless you're running enterprise ABM. ActiveCampaign punches above its weight for smaller teams. Marketo is enterprise-grade and priced accordingly.

FAQ

Lead scoring vs. lead grading - what's the difference?

Lead scoring assigns points based on both behavior and firmographic fit, producing a numeric score. Lead grading uses letter grades (A-D) for firmographic fit only. Most modern systems combine both into a single composite score - that's the approach that works.

How often should you recalibrate?

Run a 20-minute sync with sales monthly. Recalibrate point values quarterly using closed-won and closed-lost data. If close rates shift by more than 10%, your model is stale and needs immediate adjustment.

Can you score leads without dedicated software?

Yes. Teams with fewer than 500 leads/month can run a spreadsheet model effectively. Start there, validate thresholds with sales, then graduate to HubSpot or ActiveCampaign when volume demands automation. The model matters more than the tool.

What's the best free option for cleaning lead data before scoring?

Prospeo's free tier includes 75 email verifications per month at 98% accuracy - enough to audit your existing database quality. For larger lists, paid plans run roughly $0.01 per email. Clean data is the prerequisite for any marketing lead scoring model that actually delivers results.