Sales Qualified Lead Criteria: The Scoring Model, Benchmarks, and Frameworks That Actually Work

It's Monday pipeline review. Your AE is pitching a "hot lead" who downloaded an eBook six weeks ago and hasn't responded to three follow-ups. Your VP wants to know why 60% of "qualified" leads never book a second call. You've read five articles on sales qualified lead criteria and none gave you a single number to work with.

Here's the quick version. Every SQL framework reduces to four questions: need, budget, authority, timeline. The framework you pick matters less than having a scoring model with actual thresholds. If your MQL-to-SQL conversion rate sits below roughly 13% across segments, your criteria are broken - not your sales team. What follows is a copy-paste scorecard, industry conversion benchmarks, and a framework-to-deal-type map you can implement this week.

What Makes a Lead Sales-Qualified

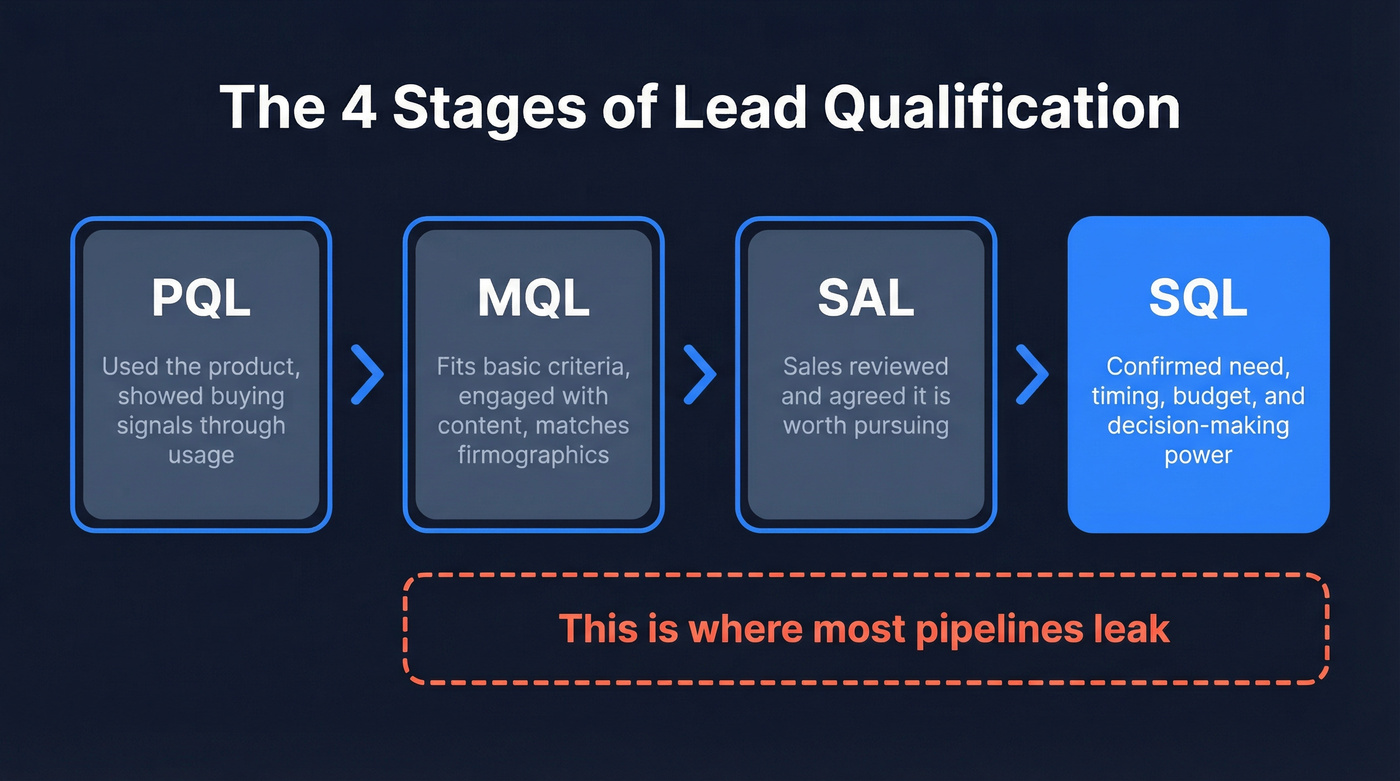

Four stages matter:

- PQL - used the product, showed buying signals through usage.

- MQL - fits basic criteria and engaged with content (downloaded a guide, visited pricing, matches firmographics).

- SAL - sales reviewed the MQL and agreed it's worth pursuing.

- SQL - sales confirmed need, budget, timing, and decision-making power. The prospect is ready to discuss scope and pricing.

The gap between MQL and SQL is where most pipelines leak. An MQL says "this person might be interested." An SQL says "this person has a problem we solve, money to spend, authority to sign, and a reason to act now." That's a fundamentally different conversation, and understanding how to identify qualified leads at this stage is what separates high-performing teams from everyone else.

The Criteria That Actually Matter

Experienced sellers on r/sales don't think in acronyms - and we've seen the same thing in practice. One veteran seller's framework, in use for 20+ years, boils down to three criteria.

Requirements and urgency. What do they need, why, and when? The "when" is everything. A regulatory deadline, an audit, a breach, a top-down mandate - these create urgency that no amount of nurturing can manufacture.

Budget. Not just "do they have money" but "is budget approved, and does our price fit their expectation?" Ask directly or frame it around investment for similar projects.

Competition. Who else are they evaluating, and where do you win or lose on their specific requirements? If you can't beat the competition on the prospect's core needs, disqualify.

Here's the contrarian take: relationship isn't a qualification criterion. A warm relationship with someone who has no budget and no urgency is a coffee meeting, not a deal.

These three criteria map cleanly to the universal four (need, budget, authority, timeline) that every methodology ultimately reduces to. The framework name doesn't matter. The rigor does.

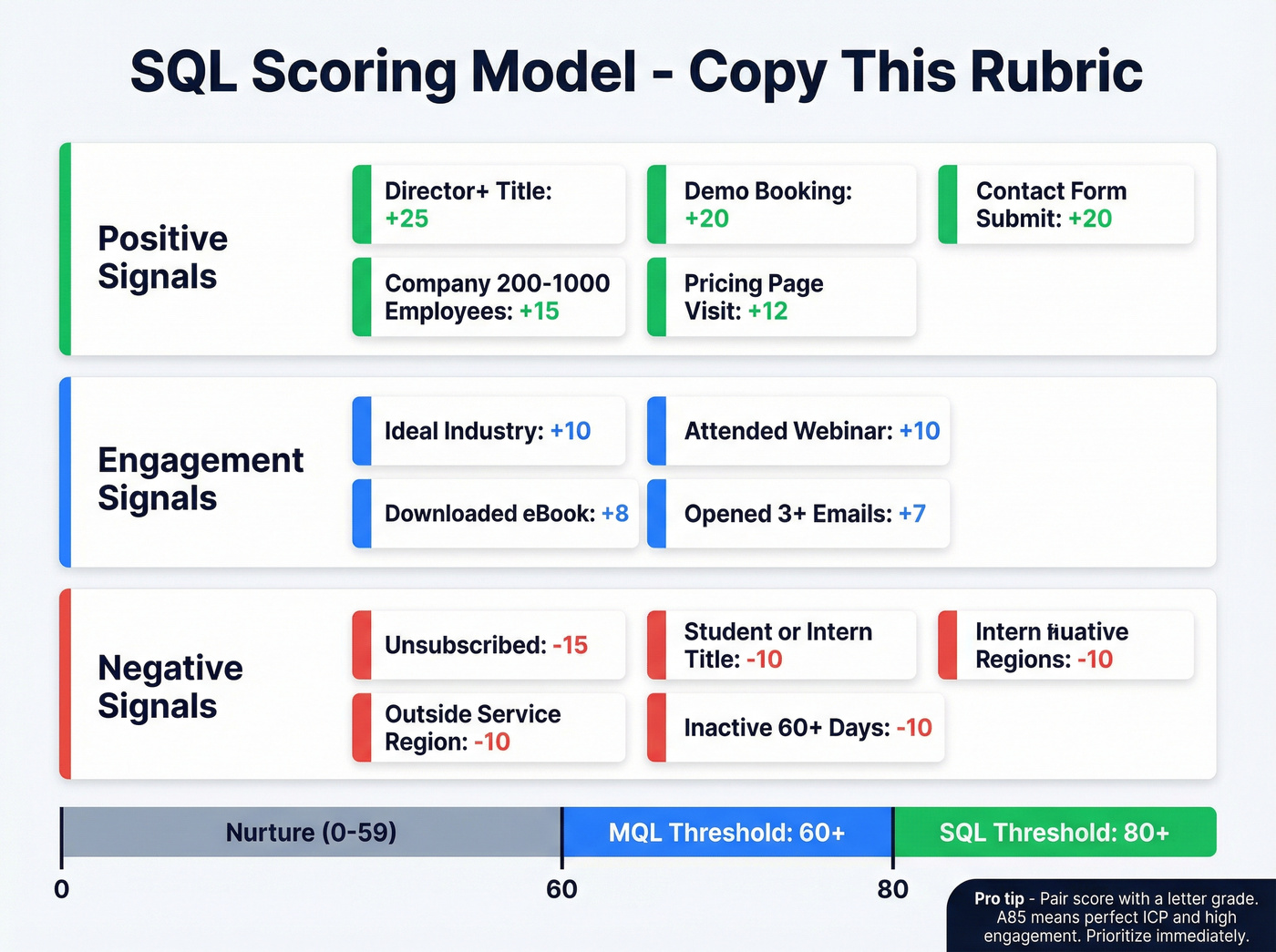

A SQL Scoring Model You Can Copy

Drop this rubric into HubSpot today: Settings, Properties, find "HubSpot Score," Edit. For Salesforce, pull conversion data from Reports, New Report, Opportunities grouped by Stage - divide Closed Won opportunities by total opportunities to get your opportunity-to-close conversion rate.

Start with fewer than 10 criteria and expand once you've validated against closed-won data. If you need a baseline, start with a simple lead scoring model and iterate.

| Criterion | Type | Points |
|---|---|---|
| Director+ title | Demographic | +25 |
| Company 200-1,000 | Firmographic | +15 |
| Ideal industry | Firmographic | +10 |
| Pricing page visit | Behavioral | +12 |
| Demo booking | Behavioral | +20 |
| Contact form submit | Behavioral | +20 |
| Attended webinar | Behavioral | +10 |
| Downloaded eBook | Behavioral | +8 |
| Opened 3+ emails | Behavioral | +7 |
| Student/intern title | Negative | -10 |
| Gmail/personal domain | Negative | -8 |
| Outside service region | Negative | -10 |
| Unsubscribed | Negative | -15 |
| Inactive 60+ days | Negative | -10 |
| MQL threshold | Total score | 60+ |
| SQL threshold | Total score | 80+ |
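
If you'd rather prototype the rubric before touching your CRM, here's a minimal Python sketch of it. The point values and thresholds come straight from the table; the signal keys (`director_plus_title`, `pricing_page_visit`, and so on) and the function names are hypothetical labels made up for this example, not HubSpot or Salesforce fields.

```python
# Point values copied from the rubric table.
# The signal keys are hypothetical names chosen for this sketch.
POINTS = {
    "director_plus_title": 25,
    "company_200_1000": 15,
    "ideal_industry": 10,
    "pricing_page_visit": 12,
    "demo_booking": 20,
    "contact_form_submit": 20,
    "attended_webinar": 10,
    "downloaded_ebook": 8,
    "opened_3plus_emails": 7,
    "student_intern_title": -10,
    "personal_email_domain": -8,
    "outside_service_region": -10,
    "unsubscribed": -15,
    "inactive_60plus_days": -10,
}

MQL_THRESHOLD = 60
SQL_THRESHOLD = 80


def score_lead(signals: set[str]) -> int:
    """Sum the points for every signal the lead has triggered."""
    unknown = signals.difference(POINTS)
    if unknown:
        raise ValueError(f"unknown signals: {sorted(unknown)}")
    return sum(POINTS[s] for s in signals)


def stage(score: int) -> str:
    """Map a total score onto the MQL/SQL thresholds from the table."""
    if score >= SQL_THRESHOLD:
        return "SQL"
    if score >= MQL_THRESHOLD:
        return "MQL"
    return "unqualified"


lead = {"director_plus_title", "company_200_1000", "ideal_industry",
        "demo_booking", "pricing_page_visit"}
print(score_lead(lead), stage(score_lead(lead)))
```

Each signal counts once here. Whether repeat visits should stack is a design decision the rubric leaves open.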

The negative scores are where most teams fall short. That intern who visited your pricing page three times? Without negative scoring, they're an MQL. With it, they're correctly flagged as noise. The "Inactive 60+ days" row is a form of decay scoring - points should erode over time as engagement fades, not just accumulate forever.
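
The table handles decay with a single -10 step at 60 days. If you want points to erode gradually instead, one option is a half-life. The sketch below is an illustration only: the 30-day half-life and the function name `decayed_points` are assumptions, not values from any benchmark.

```python
def decayed_points(points: float, days_since_activity: int,
                   half_life_days: float = 30.0) -> float:
    """Halve behavioral points for every `half_life_days` of inactivity.

    The 30-day default is an assumed placeholder; tune it to your sales cycle.
    """
    if days_since_activity < 0:
        raise ValueError("days_since_activity must be non-negative")
    if half_life_days <= 0:
        raise ValueError("half_life_days must be positive")
    return points * 0.5 ** (days_since_activity / half_life_days)


# A demo booking (+20) is worth 20 today, 10 after 30 days, 5 after 60 days.
for days in (0, 30, 60):
    print(days, decayed_points(20, days))
```

Apply this to behavioral points only. Fit signals like title and company size don't go stale just because the lead went quiet.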

For more granularity, pair the numeric score with a letter grade for fit. A = perfect ICP, F = disqualify. An "A85" tells you the lead fits your ICP and is highly engaged - prioritize immediately. A "C25" is a poor-fit tire-kicker. Skip it. If you haven't documented your ICP, use an Ideal Customer Profile Template to standardize fit scoring.
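
Here's one way the grade-plus-score pairing could drive routing. It's a sketch under stated assumptions: the source only pins down that F means disqualify, that an A85 gets prioritized, and that a C25 gets skipped. The A-through-F grade set, the cutoffs in between, and the `triage` function name are hypothetical.

```python
VALID_GRADES = {"A", "B", "C", "D", "F"}  # assumed grade set; only A and F are defined in the text


def label(grade: str, score: int) -> str:
    """Combine fit grade and engagement score into a label like 'A85'."""
    if grade not in VALID_GRADES:
        raise ValueError(f"unknown grade: {grade!r}")
    return f"{grade}{score}"


def triage(grade: str, score: int) -> str:
    """Route a lead by fit grade and engagement score (cutoffs are assumptions)."""
    if grade not in VALID_GRADES:
        raise ValueError(f"unknown grade: {grade!r}")
    if grade == "F":
        return "disqualify"
    if grade in ("A", "B") and score >= 80:  # strong fit, SQL-level engagement
        return "prioritize"
    if grade in ("C", "D") and score < 60:   # weak fit, below the MQL threshold
        return "skip"
    return "nurture"


print(label("A", 85), triage("A", 85))
print(label("C", 25), triage("C", 25))
```

The useful part is the middle bucket: a good-fit lead with low engagement goes to nurture rather than to a rep or the bin.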

Companies using automated lead scoring see a 28% increase in qualification efficiency. That said, the gains only stick if the model includes disqualification logic - not just positive signals.

A scoring model with tight thresholds means nothing if half your contact data bounces. Prospeo's 98% email accuracy and 7-day data refresh cycle ensure every lead that hits your SQL threshold has a valid, verified path to a real conversation - not a dead inbox.

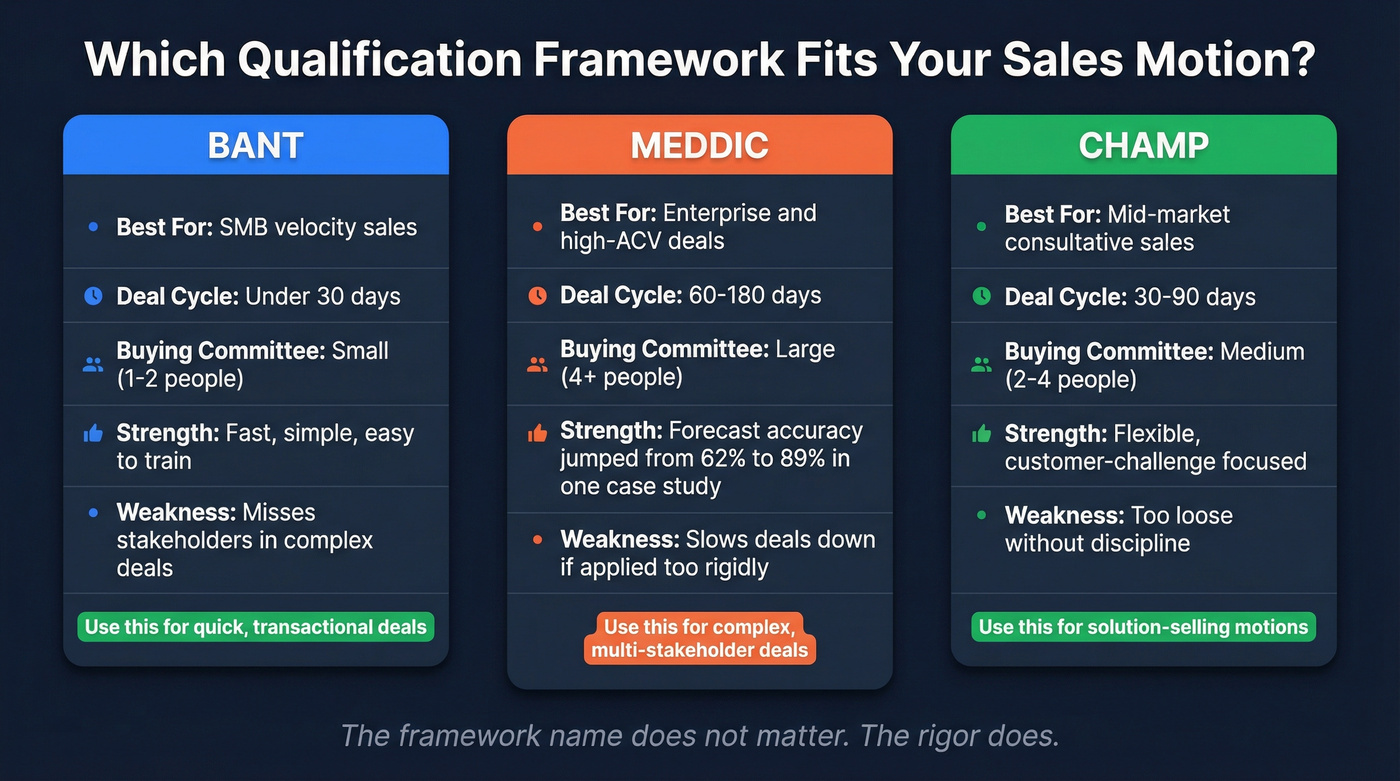

Which Framework Fits Your Sales Motion

| Framework | Best For | Deal Cycle | Buying Committee | Weakness |
|---|---|---|---|---|
| BANT | SMB velocity | <30 days | Small (1-2) | Misses stakeholders |
| MEDDIC | Enterprise / high-ACV | 60-180 days | Large (4+) | Slows deals if rigid |
| CHAMP | Mid-market consultative | 30-90 days | Medium (2-4) | Too loose if undisciplined |

Look, BANT is fine for SMB. Stop using it for enterprise. One team we worked with saw forecast accuracy jump from 62% to 89% after switching from BANT to MEDDIC for their enterprise segment. They'd been forcing a velocity framework onto 6-month deal cycles and wondering why forecasts were fiction.
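
If you segment deals in code, the framework table can be turned into a simple routing rule. Treat this as a rough sketch: the table's ranges overlap (CHAMP runs 30-90 days, MEDDIC 60-180), so the tie-break on committee size is an assumption of this example, and `pick_framework` is a hypothetical name.

```python
def pick_framework(deal_cycle_days: int, committee_size: int) -> str:
    """Suggest a qualification framework from deal cycle and buying committee size.

    Ranges follow the framework table; the overlap between CHAMP and MEDDIC
    is resolved by committee size, which is an assumption of this sketch.
    """
    if deal_cycle_days <= 0 or committee_size <= 0:
        raise ValueError("deal_cycle_days and committee_size must be positive")
    if committee_size >= 4 or deal_cycle_days > 90:
        return "MEDDIC"
    if deal_cycle_days < 30 and committee_size <= 2:
        return "BANT"
    return "CHAMP"


print(pick_framework(20, 1))    # short cycle, single buyer
print(pick_framework(45, 3))    # mid-market
print(pick_framework(180, 6))   # enterprise
```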

On the discovery side, Gong data shows reps who ask 11-14 targeted questions hit a 74% success rate versus 46% for those asking fewer than 7. Focus questions on need, budget, authority, and timing rather than running through a generic checklist. If you want a tighter script, standardize a shared set of discovery questions so "qualified" means the same thing across reps. And cold outreach isn't dead - 82% of buyers will take a meeting if the rep demonstrates relevance upfront.

Benchmarks - Is Your Process Working?

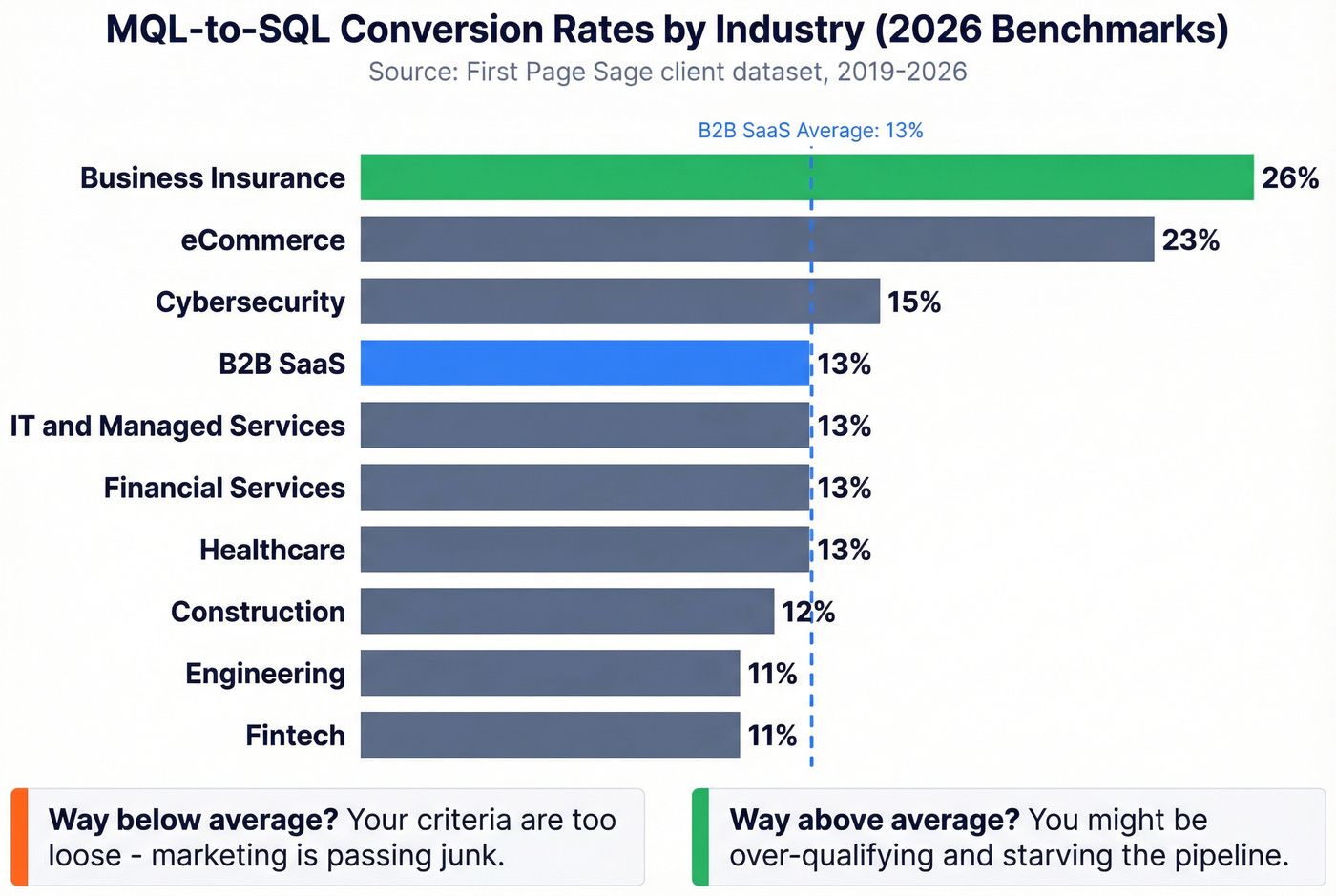

MQL-to-SQL conversion by industry:

| Industry | MQL-to-SQL Rate |
|---|---|
| Business Insurance | 26% |
| eCommerce | 23% |
| Cybersecurity | 15% |
| B2B SaaS | 13% |
| IT & Managed Services | 13% |
| Financial Services | 13% |
| Healthcare | 13% |
| Construction | 12% |
| Engineering | 11% |
| Fintech | 11% |

These are industry averages from First Page Sage's client dataset spanning 2019-2026. Within high-performing organizations, internal conversion rates run higher - typically 25-35%, with tightly aligned RevOps teams pushing 40-50% - because their criteria are tighter from the start. If you want more context, compare against broader sales conversion rate benchmarks.

If you're significantly below your industry average, your criteria are too loose and marketing is passing junk. Way above? You're probably over-qualifying and starving the pipeline. Neither extreme is healthy.
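
To make "significantly below" and "way above" concrete, you can compare your own MQL-to-SQL rate against the industry figure as a ratio. The benchmarks in this sketch are copied from the table above. The `low_ratio` and `high_ratio` cutoffs are placeholder assumptions, not published thresholds, and `diagnose` is a hypothetical helper.

```python
# MQL-to-SQL benchmarks copied from the table above, as fractions.
BENCHMARKS = {
    "Business Insurance": 0.26,
    "eCommerce": 0.23,
    "Cybersecurity": 0.15,
    "B2B SaaS": 0.13,
    "IT & Managed Services": 0.13,
    "Financial Services": 0.13,
    "Healthcare": 0.13,
    "Construction": 0.12,
    "Engineering": 0.11,
    "Fintech": 0.11,
}


def diagnose(mqls: int, sqls: int, industry: str,
             low_ratio: float = 0.75, high_ratio: float = 3.0) -> str:
    """Compare your MQL-to-SQL rate with the industry benchmark.

    low_ratio and high_ratio are assumed cutoffs; pick your own.
    """
    if mqls <= 0:
        raise ValueError("mqls must be positive")
    rate = sqls / mqls
    ratio = rate / BENCHMARKS[industry]
    if ratio < low_ratio:
        return "criteria_too_loose"
    if ratio > high_ratio:
        return "possibly_over_qualifying"
    return "in_range"


print(diagnose(mqls=1000, sqls=60, industry="B2B SaaS"))
```

A 6% rate against a 13% benchmark lands well under the default cutoff, which points at the MQL bar rather than the reps.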

Let's be honest about what "review quarterly" actually means: pull your highest-scoring leads, compare them against closed-won deals, and check whether the scores predicted the wins. If they didn't, your weights are wrong and it's time to recalibrate.
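
One way to run that quarterly check is to measure lift: the win rate among your highest-scoring leads divided by the win rate across all leads. The sketch below assumes you can export `(score, closed_won)` pairs from your CRM. The top-20% slice and the function name `top_score_lift` are assumptions for illustration.

```python
def top_score_lift(leads: list[tuple[int, bool]], top_fraction: float = 0.2) -> float:
    """Win rate of the highest-scoring slice divided by the overall win rate.

    leads: (score, closed_won) pairs. A lift near or below 1.0 suggests the
    scores are not predicting wins and the weights need recalibrating.
    """
    if not leads:
        raise ValueError("leads must not be empty")
    if not 0 < top_fraction <= 1:
        raise ValueError("top_fraction must be in (0, 1]")
    ranked = sorted(leads, key=lambda lead: lead[0], reverse=True)
    top_n = max(1, round(len(ranked) * top_fraction))
    overall_rate = sum(won for _, won in ranked) / len(ranked)
    if overall_rate == 0:
        raise ValueError("no closed-won deals in the sample")
    top_rate = sum(won for _, won in ranked[:top_n]) / top_n
    return top_rate / overall_rate


sample = [(90, True), (85, True), (70, False), (60, False), (50, True),
          (40, False), (30, False), (20, False), (10, False), (5, False)]
print(round(top_score_lift(sample), 2))
```

With a handful of deals the number is noisy, so read it as a prompt to look closer, not as a verdict.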

Why Qualification Criteria Break Down

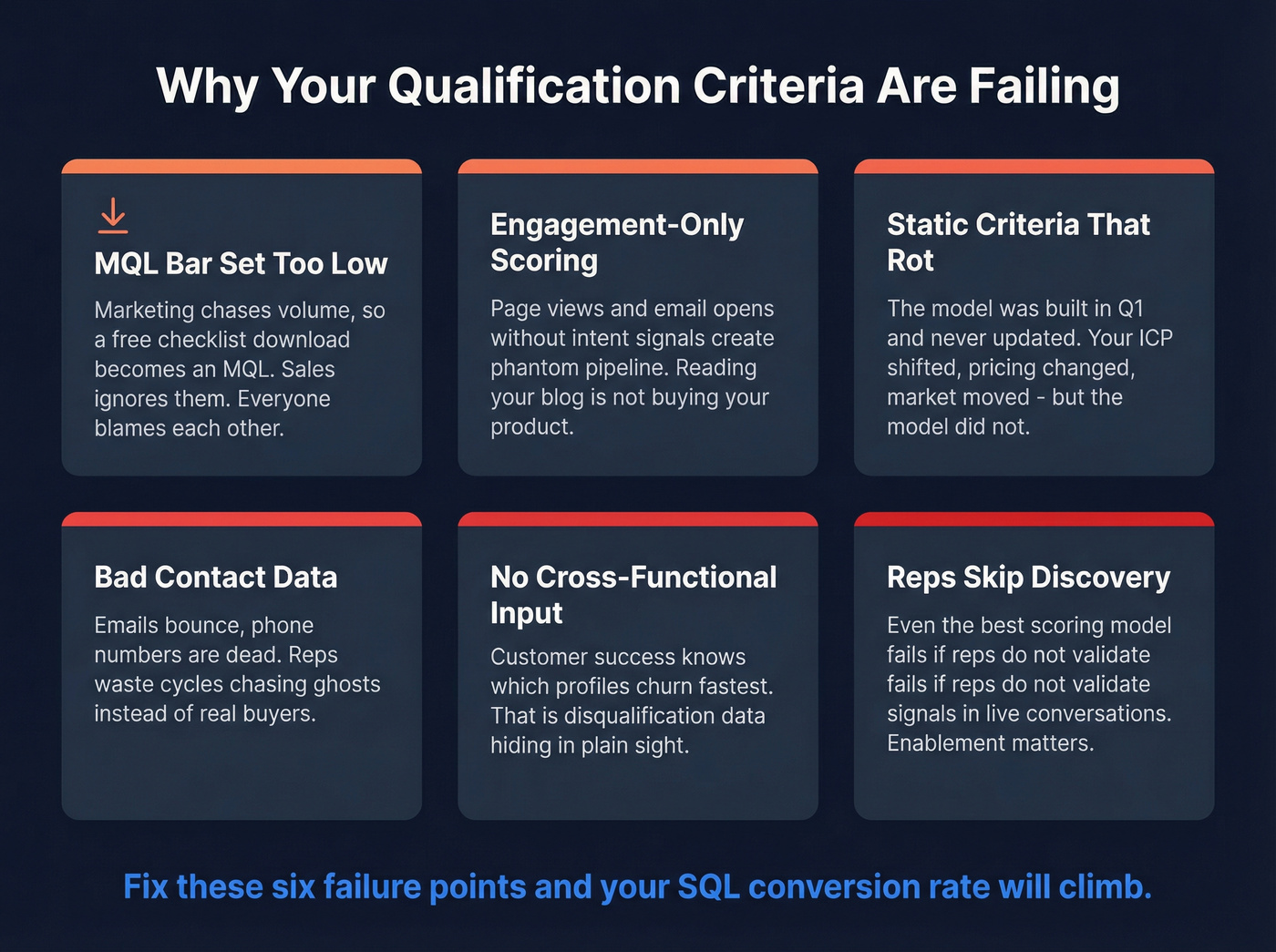

Even a well-designed scoring model fails in predictable ways.

The MQL bar is set too low. Marketing needs volume to hit lead targets, so a free checklist download becomes an MQL. Sales ignores most of these leads. Everyone blames each other. We've watched this cycle play out at dozens of companies and it always ends the same way - a tense all-hands where someone finally says "these leads are garbage" and the real conversation starts. If this is happening, fix your lead generation workflow before you tweak weights.

Engagement-only scoring. Page views and email opens without intent signals create phantom pipeline. Someone reading your blog isn't buying your product. Identify buying signals to separate curiosity from intent.

Static criteria that rot. The scoring model was built during a kickoff meeting in Q1 and hasn't been touched since. Your ICP shifted, your pricing changed, your market moved - but the model didn't.

Bad contact data. Your scoring model is only as good as the data feeding it. If emails bounce and phone numbers are dead, reps waste cycles chasing ghosts. This is where data freshness matters more than most teams realize - Prospeo refreshes its database every 7 days with 98% email accuracy, so reps score and call real people instead of outdated records. If you're diagnosing deliverability issues, start with email bounce rate benchmarks and fixes.

No cross-functional input. Involve your customer success team in defining qualification criteria. They know which customer profiles churn fastest - that's disqualification data hiding in plain sight. A lightweight churn analysis can surface patterns worth scoring negatively.

Reps skip discovery. Even the best scoring model fails if reps skip discovery steps. Enablement - not just criteria - determines whether SQLs actually convert. Knowing how to determine a qualified lead on paper means nothing if reps don't validate those signals in live conversations.

You just built a scoring model with firmographic, behavioral, and negative criteria. Now enrich every lead with 50+ data points - title, company size, industry, intent signals - so your scores reflect reality, not guesswork. Prospeo's 92% enrichment match rate fills the gaps automatically.

FAQ

What's the difference between an MQL and an SQL?

An MQL has shown interest and fits basic demographic or firmographic criteria. An SQL has been vetted by sales with confirmed need, budget, authority, and timeline - ready for a deal conversation. The average MQL-to-SQL conversion rate across B2B SaaS is roughly 13%, meaning most MQLs don't make the cut.

How many discovery questions should reps ask to qualify?

Gong data shows 11-14 targeted questions correlate with a 74% success rate, versus 46% for reps asking fewer than 7. Focus on need, budget, authority, and timing - not a generic checklist.

How often should you recalibrate your scoring model?

Quarterly at minimum. Compare highest-scoring leads against closed-won deals. If they don't match, recalibrate your scoring weights. A model built six months ago is already drifting as your ICP and market evolve.

What tools help keep lead data accurate for scoring?

Scoring models fail when contact data decays. Prospeo verifies emails at 98% accuracy on a 7-day refresh cycle, compared to the 6-week industry average. Hunter and Clearbit are alternatives, but Hunter caps enrichment on free plans and Clearbit requires Salesforce integration for full value.