Lead Qualification and Segmentation: The Operational Playbook

Your SDR team just flagged that a big chunk of the leads marketing sent over last quarter never responded to a single email. Not because the messaging was bad - because the email addresses were wrong, the job titles were outdated, or the companies were outside your ICP. That's not a lead gen problem. That's a lead qualification and segmentation problem.

68% of B2B organizations struggle with lead conversion due to poor qualification. Highspot's 2026 enablement report found that 47% of enterprise GTM teams can't deliver a strong customer experience for leads, and 41% struggle with timely, personalized engagement. The root cause is almost always the same: qualification and segmentation are treated as separate activities when they're actually two halves of one system.

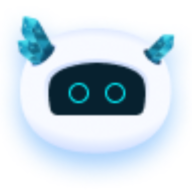

Qualification filters - "is this lead worth pursuing?" Segmentation groups - "what should we say, and when?" Run them independently and you get a pipeline full of noise.

The Short Version

- Pick a qualification framework by deal size. BANT works for deals under $25K. MEDDIC belongs in enterprise cycles above $50K. Don't use a kitchen scale to weigh a truck.

- Segment by at least 4 dimensions - firmographic, behavioral, intent, and buying stage - before you score anything.

- Build a dual-component scoring model with concrete MQL/SQL thresholds. Don't just define stages on a whiteboard and call it done.

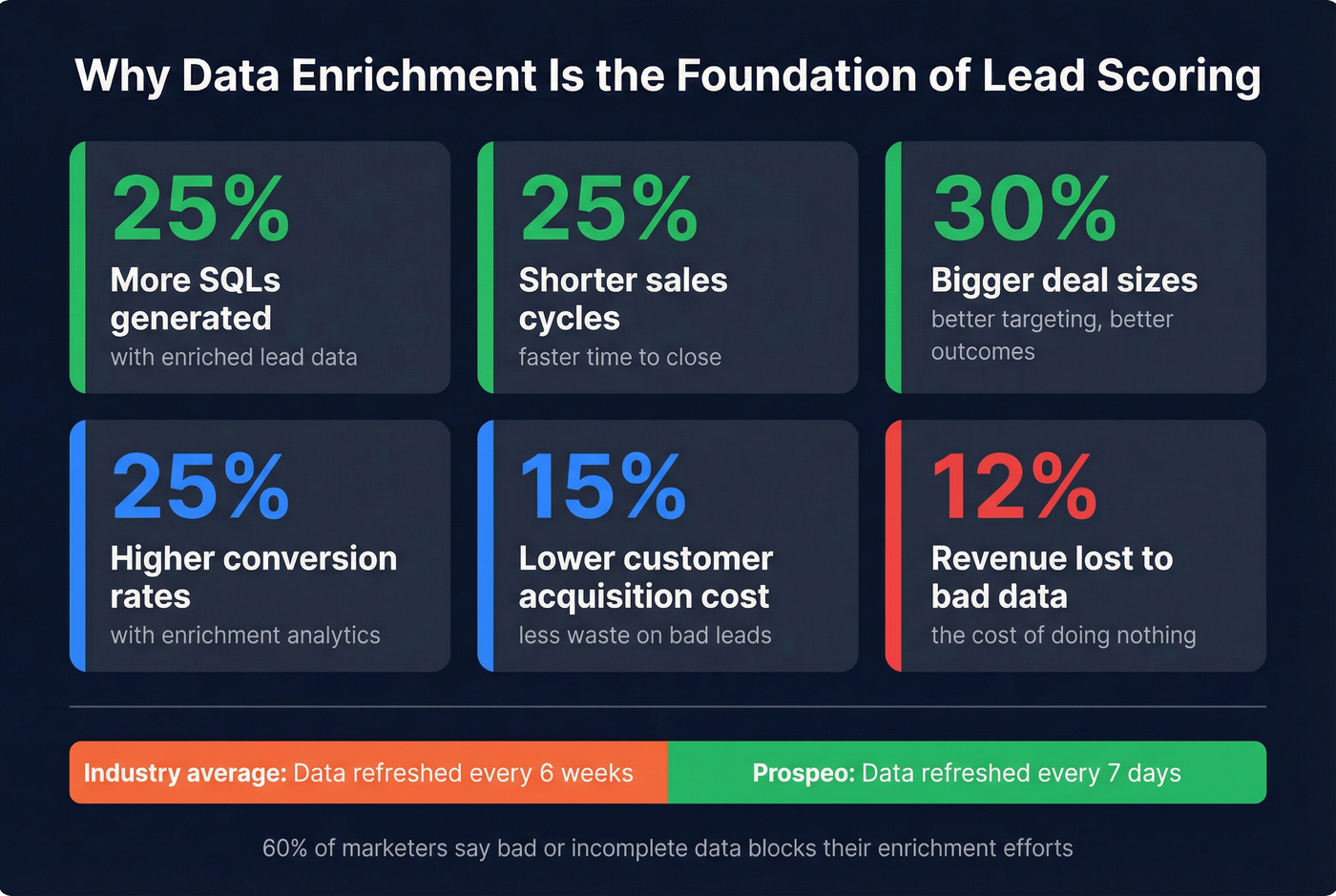

- Fix your data first. Enriched leads convert 20-30% better than non-enriched ones, and poor data quality costs roughly 12% of revenue. Your scoring model is only as good as the records feeding it.

Qualification vs. Segmentation

Let's be precise about the distinction, because conflating these two concepts is where most RevOps breakdowns start.

Qualification is a filter. It answers one question: should we spend time on this lead? You're evaluating budget, authority, need, timing - whatever criteria your framework demands - and making a binary-ish call. Pass or recycle.

Segmentation is a grouping mechanism. Once you know a lead is worth pursuing, segmentation determines what you say, through which channel, and when. It's the difference between sending a CFO a pricing-focused case study and sending a VP of Engineering a technical architecture doc.

The conversion math makes this tangible. Average sales call conversion rates run 1-5%. With pre-qualification baked in, that number can climb to 22.5%.

Segmentation complexity scales with buying-group size - the average B2B purchase involves seven stakeholders, but Forrester pegs enterprise buying groups at 14 to 23 people. You can't personalize for 23 stakeholders without segmentation. You can't avoid wasting time on the wrong accounts without qualification. They're inseparable.

6 Segmentation Dimensions That Drive Revenue

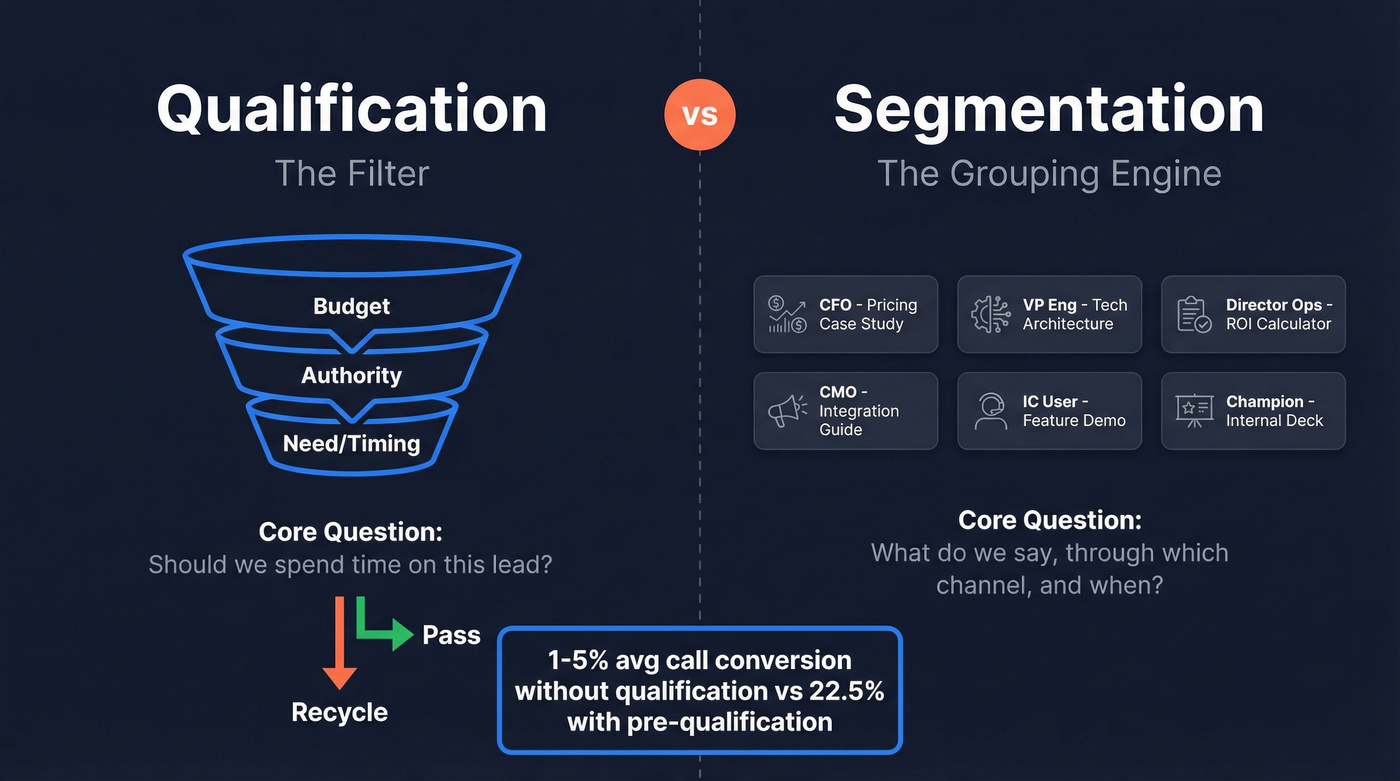

Most teams segment by industry and company size, then stop. That's two dimensions out of six that actually matter.

Firmographic - industry, company size, revenue, geography. The baseline. A fintech startup with 50 employees needs a completely different pitch than a Fortune 500 bank, even if both are "financial services."

Behavioral - site visits, content downloads, feature usage. A lead who visited your pricing page three times this week signals far higher intent than one who downloaded a single whitepaper six months ago. (If you want a deeper breakdown, start with behavioral segmentation.)

Intent signals - research behavior, keyword/topic surges, competitor evaluation. This is where you catch buyers before they raise their hand. If an account suddenly spikes on "CRM migration" topics, that's a buying signal regardless of whether they've touched your site. (More on operationalizing this in intent signals.)

Engagement history - past interactions, deal status, previous objections. A recycled lead who churned 18 months ago because of missing integrations needs a different message than a fresh inbound, especially if you've since built those integrations.

Demographic - job title, seniority, department, location. Critical for multi-threading into buying groups. The VP of Engineering and the CFO at the same company belong in different segments with different content. (See demographic data for what to capture and where it breaks.)

Buying stage - awareness, consideration, decision. A lead downloading a "what is X" guide isn't ready for a demo invite. Send them the comparison guide instead.

When buying groups run 14-23 stakeholders deep, account-level segmentation becomes essential for ABM. You need to know not just that Acme Corp is in-market, but that their VP of Engineering is researching your category while their CFO is evaluating a competitor. Each person gets a different segment, a different message, a different cadence. (If you're building this out, use an ABM account plan to keep it consistent.)
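To make the six dimensions concrete, here is a minimal sketch of a lead record that carries one or two fields per dimension, plus a content picker built on the examples above (comparison guide for awareness-stage leads, pricing case study for the CFO, architecture doc for the VP of Engineering). Every field name, the title matching, and the fallback bucket are assumptions for illustration, not a prescribed schema.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class LeadProfile:
    """One or two fields per segmentation dimension; all names are hypothetical."""
    industry: str                 # firmographic
    employee_count: int           # firmographic
    pricing_page_visits_7d: int   # behavioral
    intent_topics: tuple          # intent signals, e.g. ("CRM migration",)
    previously_churned: bool      # engagement history
    job_title: str                # demographic
    buying_stage: str             # "awareness", "consideration", or "decision"


def pick_content(lead: LeadProfile) -> str:
    """Choose the next asset from buying stage first, then job title."""
    if lead.buying_stage == "awareness":
        # Not ready for a demo invite yet, so send the comparison guide.
        return "comparison guide"
    title = lead.job_title.lower()
    if "cfo" in title:
        return "pricing-focused case study"
    if "engineering" in title:
        return "technical architecture doc"
    return "general nurture track"  # fallback bucket, an assumption


cfo = LeadProfile("fintech", 50, 3, ("CRM migration",), False, "CFO", "consideration")
vp_eng = LeadProfile("fintech", 50, 0, (), False, "VP of Engineering", "decision")
print(pick_content(cfo), "|", pick_content(vp_eng))
```

In a real stack this logic would live in CRM workflow rules rather than code, but writing it out makes gaps visible: any lead that falls through to the fallback bucket is one your segmentation does not yet cover.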

Choosing the Right Framework

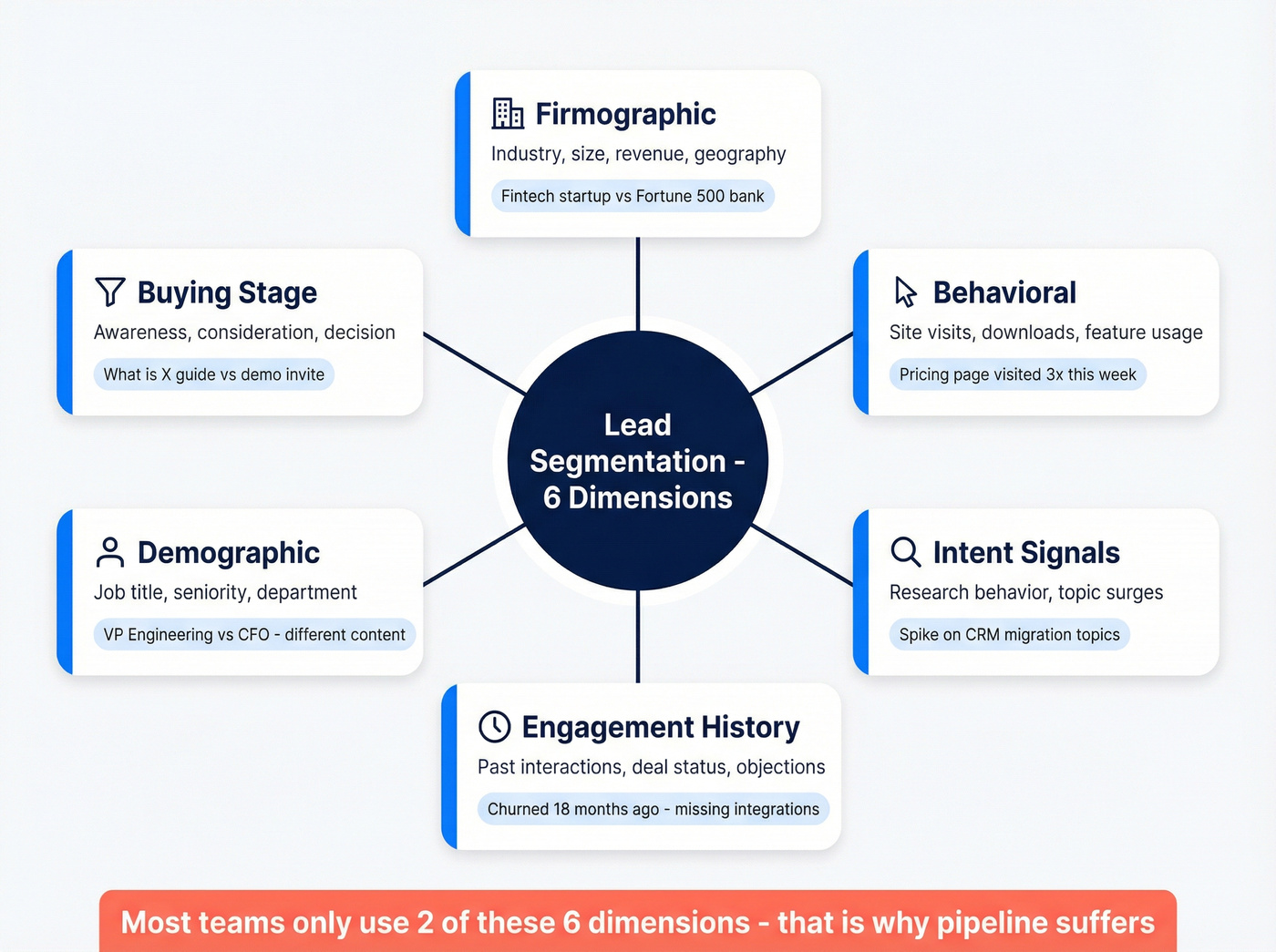

Here's the thing: if you're closing deals below $10K, you probably don't need a qualification framework at all - just a clear ICP definition and a fast enrichment workflow. Frameworks matter when deal complexity justifies the overhead. (If you need a full menu of models, see our lead qualification framework guide.)

Reps spend just 24% of their time actually selling - the rest goes to admin, research, and chasing poorly qualified leads. The right framework reclaims that time.

| Framework | Best For | Deal Size | Key Question | Why It Works |
|---|---|---|---|---|
| BANT | Transactional | <$25K | Can they buy? | 59% conversion lift; fast binary filter |
| CHAMP | Fluid-budget discovery | $25K-$50K | What's the pain? | 15% win rate lift; surfaces hidden budget |
| MEDDIC | Enterprise | >$50K, 3+ month cycles | Who decides and how? | 25% win rate gain; maps decision process |
| ANUM | Inbound-heavy | Varies | Do they have authority? | Prioritizes authority first for high-volume inbound |
| FAINT | Early-stage companies | Varies | Can they find budget? | Unlocks deals where budget doesn't exist yet |

52% of sales reps still trust BANT as their primary framework. That makes sense for high-volume, transactional pipelines. But the smartest teams we've worked with use a layered approach: BANT for initial screening, CHAMP for discovery, and MEDDIC for late-stage deal qualification. Each framework handles a different phase of the buyer's journey.
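The deal-size bands in the table, combined with the sub-$10K rule from earlier in this section, can be sketched as a simple lookup. The function name is hypothetical, and the handling of the exact $25K and $50K boundaries is an assumption, since the table doesn't say which side they fall on. ANUM and FAINT are left out because their deal size is listed as "Varies."

```python
def framework_for_deal(deal_size_usd: float) -> str:
    """Map an expected deal size to a starting qualification framework."""
    if deal_size_usd < 10_000:
        # Below $10K: a clear ICP definition and fast enrichment are enough.
        return "ICP check only"
    if deal_size_usd < 25_000:
        return "BANT"
    if deal_size_usd <= 50_000:   # boundary placement is an assumption
        return "CHAMP"
    return "MEDDIC"


for size in (5_000, 20_000, 30_000, 200_000):
    print(f"${size:,}: {framework_for_deal(size)}")
```

Treat the output as the default entry point, not a hard rule. In a layered setup, a deal that starts in BANT can still graduate to MEDDIC as it grows.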

Using BANT on a $200K enterprise cycle leaves critical decision-maker dynamics unexplored. Document which framework applies at which stage, enforce it consistently across reps, and audit quarterly using win rate, cycle length, and deal size data. Treat qualification as a live model, not a set-it-and-forget-it policy. Inconsistent criteria are one of the fastest ways to destroy forecast accuracy - and the consensus on r/sales is that framework drift is the silent killer of pipeline predictability. (To keep it measurable, tie it back to pipeline predictability metrics.)

You can't qualify leads with stale job titles and dead emails. Prospeo's 7-day data refresh cycle and 83% enrichment match rate mean your scoring model runs on data that's actually current - not 6-week-old records that tank your conversion rates.

Enriched leads convert 20-30% better. Start with data you can trust.

Building a Scoring Model That Works

Lead Grade + Lead Score

Most teams build one score and call it done. That's a mistake. You need two components working together. (If you're rebuilding from scratch, follow a full lead scoring system setup.)

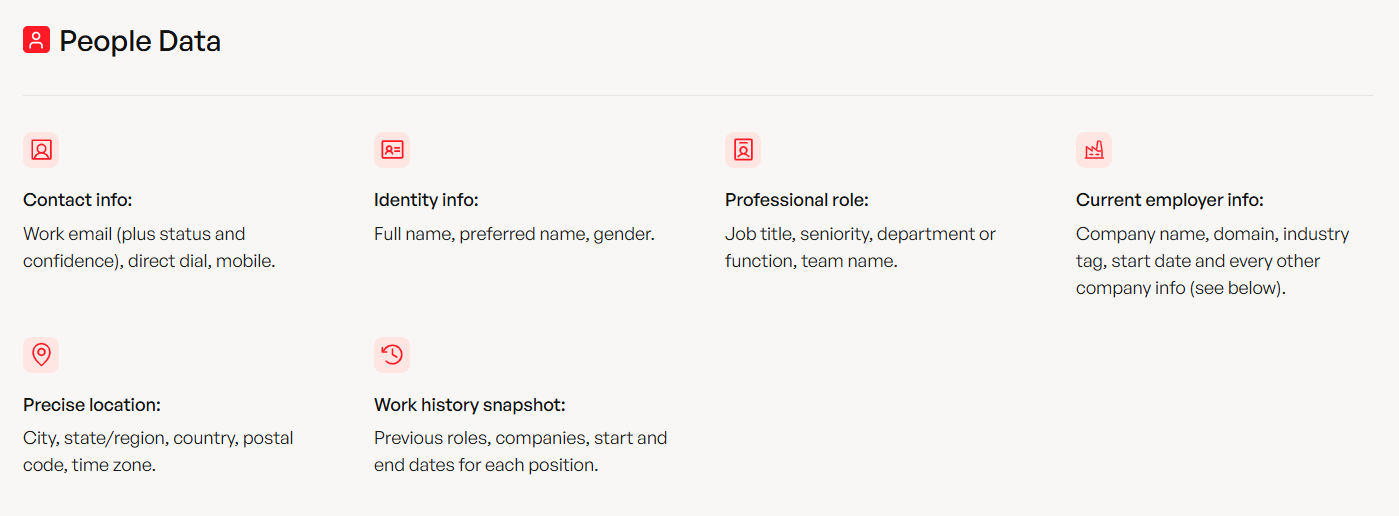

Lead Grade measures firmographic fit - who the lead is. Industry match, company size, revenue range, tech stack. This is the "should we care?" signal. A perfect-fit company gets a high grade regardless of whether they've visited your site. Enrichment tools auto-populate the firmographic fields that Lead Grade depends on - without enrichment, you're grading leads on incomplete data.

Lead Score measures behavioral intent - what the lead is doing. Demo requests, pricing page visits, content downloads, email engagement. This is the "are they ready?" signal.

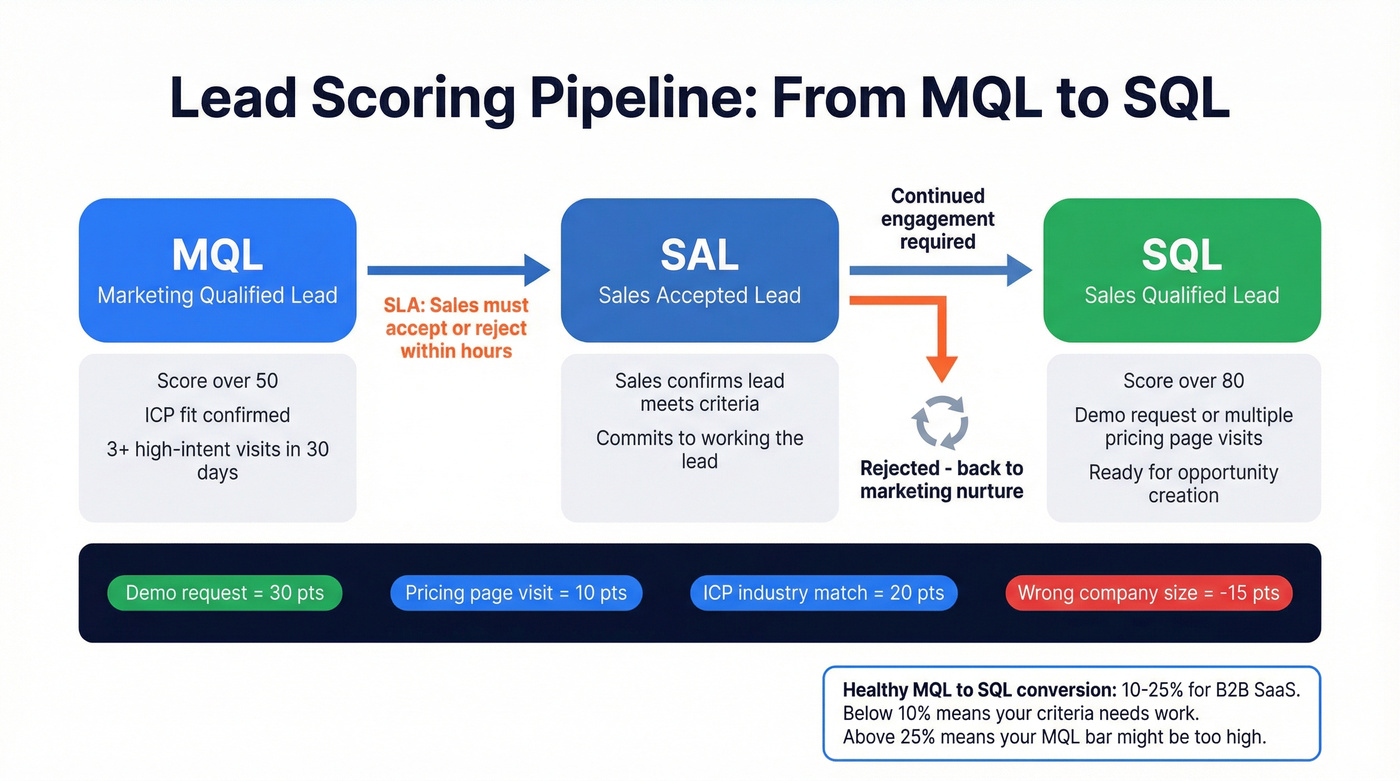

Concrete point examples that work in production: demo request = 30 pts, pricing page visit = 10 pts, ICP industry match = 20 pts, wrong company size = -15 pts. Two paths lead to MQL status: hand-raisers who skip the behavioral accumulation, and behavior-based MQLs who cross the threshold through repeated engagement.
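Here is a minimal sketch of the two components using the point values above. The signal names and dictionary layout are assumptions. The split simply follows the definitions: fit signals feed Lead Grade, behavioral events feed Lead Score.

```python
# Point values come from the examples above; the signal names are hypothetical.
GRADE_POINTS = {
    "icp_industry_match": 20,
    "wrong_company_size": -15,
}
SCORE_POINTS = {
    "demo_request": 30,
    "pricing_page_visit": 10,
}


def lead_grade(fit_signals: list) -> int:
    """Firmographic fit: who the lead is."""
    return sum(GRADE_POINTS.get(signal, 0) for signal in fit_signals)


def lead_score(events: list) -> int:
    """Behavioral intent: what the lead is doing. Repeated events add up."""
    return sum(SCORE_POINTS.get(event, 0) for event in events)


grade = lead_grade(["icp_industry_match", "wrong_company_size"])
score = lead_score(["demo_request", "pricing_page_visit", "pricing_page_visit"])
print(grade, score)  # prints: 5 50
```

Keeping the two numbers separate lets you tell a perfect-fit account that hasn't engaged apart from a poor-fit account that is clicking on everything.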

MQL to SAL to SQL Thresholds

Defining stages on a whiteboard is easy. Operationalizing the handoff is where teams fall apart.

A threshold model that works: MQL triggers at score >50, based on ICP fit plus 3+ high-intent visits in 30 days. SQL triggers at >80 after a demo request or multiple pricing page visits. Between them sits SAL - Sales Accepted Lead - where sales confirms the lead meets criteria before committing to work it. (For governance and ongoing validation, use lead scoring systems best practices.)
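The threshold model can be sketched as a small stage function. The parameter names are hypothetical, "multiple pricing page visits" is read as two or more, and requiring a high-intent action for SQL on top of the score is one interpretation of the rule above, not the only one.

```python
MQL_THRESHOLD = 50   # MQL triggers at score > 50
SQL_THRESHOLD = 80   # SQL triggers at score > 80


def lead_stage(score: int, sales_accepted: bool = False,
               demo_requested: bool = False, pricing_visits: int = 0) -> str:
    """Return the funnel stage implied by the score and the handoff state."""
    # Assumption: "multiple pricing page visits" means two or more.
    high_intent = demo_requested or pricing_visits >= 2
    if score > SQL_THRESHOLD and high_intent:
        return "SQL"
    if score > MQL_THRESHOLD:
        # SAL sits between MQL and SQL: sales has confirmed the lead meets criteria.
        return "SAL" if sales_accepted else "MQL"
    return "nurture"


print(lead_stage(55))                        # MQL
print(lead_stage(55, sales_accepted=True))   # SAL
print(lead_stage(85, demo_requested=True))   # SQL
```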

The SLA piece is non-negotiable. Sales must accept or reject an MQL within a few hours. Not "when they get around to it." Speed-to-lead is one of the biggest predictors of conversion for inbound leads. Rejected leads get recycled back to marketing for continued nurture. (If you're running inbound at scale, align this with inbound lead qualification.)

Measure MQL-to-SQL conversion religiously. For B2B SaaS, 10-25% is typical depending on channel and deal size. Below 10% signals a qualification criteria problem. Above 25% might mean your MQL bar is too high and you're leaving pipeline on the table.
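As a quick worked example, the check below computes the conversion rate and compares it against the 10-25% band. The function name and verdict labels are hypothetical, and treating exactly 10% and 25% as in range is an assumption.

```python
def diagnose_mql_to_sql(mqls: int, sqls: int):
    """Return (conversion_rate, verdict) for one period or channel."""
    if mqls <= 0:
        raise ValueError("need at least one MQL to compute a rate")
    rate = sqls / mqls
    if rate < 0.10:
        verdict = "below_range"   # likely a qualification criteria problem
    elif rate > 0.25:
        verdict = "above_range"   # MQL bar may be too high
    else:
        verdict = "typical"
    return rate, verdict


rate, verdict = diagnose_mql_to_sql(mqls=5000, sqls=100)
print(f"{rate:.0%} -> {verdict}")  # prints: 2% -> below_range
```

Run it per channel rather than on the blended total, since one weak channel can hide behind a healthy average.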

Data Enrichment - The Foundation Layer

Look, you don't need a more sophisticated scoring model. You need cleaner data. (Start with CRM hygiene before you touch scoring weights.)

If your CRM data is older than 30 days, your scoring model is operating on stale assumptions. Lead Grade can't work when job titles are outdated. Behavioral scoring can't work when email addresses bounce. Segmentation can't work when firmographic fields are blank. Poor data quality costs roughly 12% of revenue, and 60% of marketers say bad or incomplete data blocks their enrichment efforts.
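The 30-day rule is easy to audit. This sketch assumes each CRM record carries a last-verified date (a hypothetical field) and reports the share of records past the cutoff.

```python
from datetime import date

MAX_AGE_DAYS = 30  # the staleness cutoff described above


def is_stale(last_verified: date, today: date) -> bool:
    """True when the record was last verified more than 30 days ago."""
    return (today - last_verified).days > MAX_AGE_DAYS


def stale_share(last_verified_dates, today: date) -> float:
    """Fraction of records that are past the cutoff (0.0 for an empty list)."""
    dates = list(last_verified_dates)
    if not dates:
        return 0.0
    return sum(is_stale(d, today) for d in dates) / len(dates)


today = date(2025, 3, 1)
records = [date(2025, 2, 20), date(2025, 1, 5), date(2024, 11, 30)]
print(f"{stale_share(records, today):.0%} of records are stale")  # prints: 67% ...
```

If a large share of the database comes back stale, fix that before touching any scoring weights.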

The impact of enrichment is hard to ignore: 25% more SQLs, 25% shorter sales cycles, 30% bigger deals. Companies using enrichment analytics see conversion rates climb 25% while CAC drops 15%. We've seen enrichment alone lift MQL-to-SQL conversion by double digits before anyone touches the scoring weights.

Prospeo returns 50+ data points per contact at a 92% API match rate, with records refreshed on a 7-day cycle compared to the 6-week industry average. That freshness gap matters when your scoring model penalizes stale titles or routes leads based on company size that changed two quarters ago. Snyk cut bounce rates from 35-40% to under 5% and generated 200+ new opportunities per month after switching their enrichment layer.

AI-Powered Lead Scoring

Traditional scoring is static - you set rules, assign points, and hope the weights still make sense six months later. What changes with AI: the model retrains on every closed-won and closed-lost deal, continuously adjusting which signals actually predict conversion. (If you're evaluating vendors and workflows, see AI lead qualification.)

Before AI scoring: Your team manually assigns 30 points to demo requests because "it feels right." Six months later, you discover that pricing page visits actually predict closed-won deals 2x better than demos - but nobody updated the weights.

After AI scoring: The model notices the pattern within weeks, automatically reweights, and surfaces it in a dashboard. Score bands in practice: 95+ is highly likely to convert, 50-94 is likely, and <50 is unlikely. Route accordingly.

When should you adopt AI scoring? When you have enough historical data to train on - typically 500+ closed deals minimum. Before that threshold, rule-based scoring with well-chosen weights will outperform a starved ML model every time. If a vendor tries to sell you AI scoring when you have 80 closed-won deals in your CRM, skip it. It won't work.
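The score bands and the 500-deal readiness rule can be written down as two small helpers. The names are hypothetical, and the code assumes scores are whole numbers on a 0-100 scale.

```python
MIN_CLOSED_DEALS_FOR_AI = 500  # readiness threshold described above


def score_band(score: int) -> str:
    """Bucket a predictive score into the three routing bands."""
    if score >= 95:
        return "highly likely"
    if score >= 50:
        return "likely"
    return "unlikely"


def scoring_approach(closed_deals: int) -> str:
    """Recommend rule-based scoring until there is enough history to train on."""
    return "ai" if closed_deals >= MIN_CLOSED_DEALS_FOR_AI else "rule_based"


print(score_band(97), "|", score_band(72), "|", score_band(31))
print(scoring_approach(80))  # rule_based
```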

Tools to Operationalize It All

CRMs handle scoring logic and routing. Enrichment tools feed the data layer. You need both.

| Tool | Entry Price | Best For |
|---|---|---|
| HubSpot Smart CRM | Free; from $9/seat/mo | All-in-one for SMBs |
| Salesforce | From $25/user/mo | Enterprise customization |
| Pipedrive | From $14/user/mo | Sales-first teams |
| Zoho CRM | From $14/user/mo | Budget-conscious teams |
| monday CRM | From $12/user/mo | Visual workflow builders |

For enrichment and intent, the market breaks down by budget. Prospeo offers a free tier with credit-based paid plans, 98% email accuracy via proprietary infrastructure, and intent data across 15,000 topics powered by Bombora - no contracts required. It integrates natively with Salesforce, HubSpot, Smartlead, Instantly, Lemlist, Clay, Zapier, and Make, so enriched data flows directly into your scoring rules. ZoomInfo runs $15-40K/year and makes sense for large enterprise teams that need the full GTM suite. Clearbit sits around $1,000-2,500/month for small teams. Demandbase and 6sense are enterprise ABM plays at $30-100K+/year.

The CRM handles the scoring rules and routing automation. The enrichment tool ensures those rules operate on accurate, current data. Skip the enrichment layer and your scoring model is running on last quarter's org chart.

5 Mistakes That Kill Your Pipeline

| Mistake | Symptom | Fix |
|---|---|---|
| Stale or missing data | Bounce rates above 5%, scores based on outdated job titles | Use an enrichment tool that refreshes weekly, not quarterly |
| Optimizing for volume over quality | Marketing celebrates 5,000 MQLs while sales converts 2% | Track pipeline velocity, not lead volume - MQLs are a vanity metric without conversion context |
| Inconsistent criteria across reps | One SDR qualifies on budget, another on timeline, a third on gut feel | Document shared definitions, enforce them in your CRM, audit quarterly |
| Ignoring intent signals | Perfect-fit accounts sit untouched while actively researching your category | Layer behavioral and intent signals into scoring - a perfect-fit account actively researching is worth 10x one that isn't |
| Slow follow-up | Hot inbound leads wait 24 hours and talk to your competitor first | Route in seconds, not hours - the majority of lost sales trace back to inadequate qualification, not bad products |

The most common complaint in RevOps communities is that MQLs are garbage - and it's almost always a data quality problem, not a scoring logic problem. Fix the data layer first, then worry about refining your scoring weights.

Segmenting across 6 dimensions requires rich, accurate data on every contact. Prospeo returns 50+ data points per lead - firmographics, tech stack, intent signals across 15,000 topics - so your segments actually drive personalized outreach instead of generic noise.

Stop segmenting on two dimensions when you have access to all six.

FAQ

What's the difference between MQL and SQL?

An MQL shows ICP fit and early engagement - content downloads, repeat site visits, score above 50. An SQL demonstrates buying intent through demo requests or pricing page visits, typically scoring above 80. The gap between them is where handoff SLAs and the SAL stage live. Measure MQL-to-SQL conversion by channel to spot qualification drift early.

How often should you update your scoring model?

Review quarterly at minimum and recalibrate whenever you shift ICP, move upmarket, or see MQL-to-SQL conversion drop below 10%. AI models retrain continuously on closed-won/lost data; rule-based models need manual weight adjustments at least every 90 days.

How does qualification feed email segmentation?

Once a lead passes your qualification threshold, their score and firmographic data determine which email segment they enter. A high-scoring enterprise lead gets routed into a consultative nurture sequence, while a mid-score SMB lead receives a product-led onboarding cadence. The qualification output directly feeds the segmentation logic - that's why both systems need to share the same enriched data layer.
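A rough sketch of that handoff: the score cutoffs reuse the 50 and 80 thresholds from the scoring section, while the 1,000-employee enterprise line, the function name, and the fallback sequence are all assumptions made for illustration.

```python
ENTERPRISE_MIN_EMPLOYEES = 1000  # assumption: where "enterprise" starts


def email_sequence(score: int, employee_count: int) -> str:
    """Pick an email sequence from qualification score and company size."""
    is_enterprise = employee_count >= ENTERPRISE_MIN_EMPLOYEES
    if score > 80 and is_enterprise:
        return "consultative nurture sequence"
    if 50 < score <= 80 and not is_enterprise:
        return "product-led onboarding cadence"
    # Combinations the answer above does not cover fall back to a default.
    return "default nurture"


print(email_sequence(score=90, employee_count=5000))  # consultative nurture sequence
print(email_sequence(score=65, employee_count=120))   # product-led onboarding cadence
```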

What's a good MQL-to-SQL conversion rate?

For B2B SaaS, 10-25% depending on channel and deal size. Below 10% means your MQL definition is too loose or your data is stale. Above 25% may mean you're setting the bar too high and leaving viable pipeline on the table. Track by channel and segment for the full picture.

What's the cheapest way to get accurate enrichment data?

Prospeo's free tier includes 75 email credits and 100 Chrome extension credits per month with 98% email accuracy - enough for small teams running real campaigns. Paid plans start at roughly $0.01 per lead with no contracts. For teams under 10 reps, credit-based pricing beats enterprise contracts every time.