Manufacturing Lead Scoring: The 2026 Playbook for Industrial Leads That Actually Convert

You just got back from IMTS with 2,000 badge scans. Half are engineering students, a quarter are competitors scoping your booth, and somewhere in that pile are the three accounts that'll actually send you a PO. Every lead scoring guide on the internet uses SaaS examples - MQL thresholds based on ebook downloads and pricing page visits. That's useless when your sales cycle runs 6-12 months, your average deal involves a custom quote, and your website conversion rate sits around 2.2%.

This guide builds a manufacturing lead scoring model designed for how industrial companies actually buy.

The Short Version

Manufacturing signals matter more than generic engagement. CAD downloads, RFQ form submissions with volume and certification details, and capabilities page visits are worth four to eight times a blog view in the example point values later in this guide. Score them accordingly.

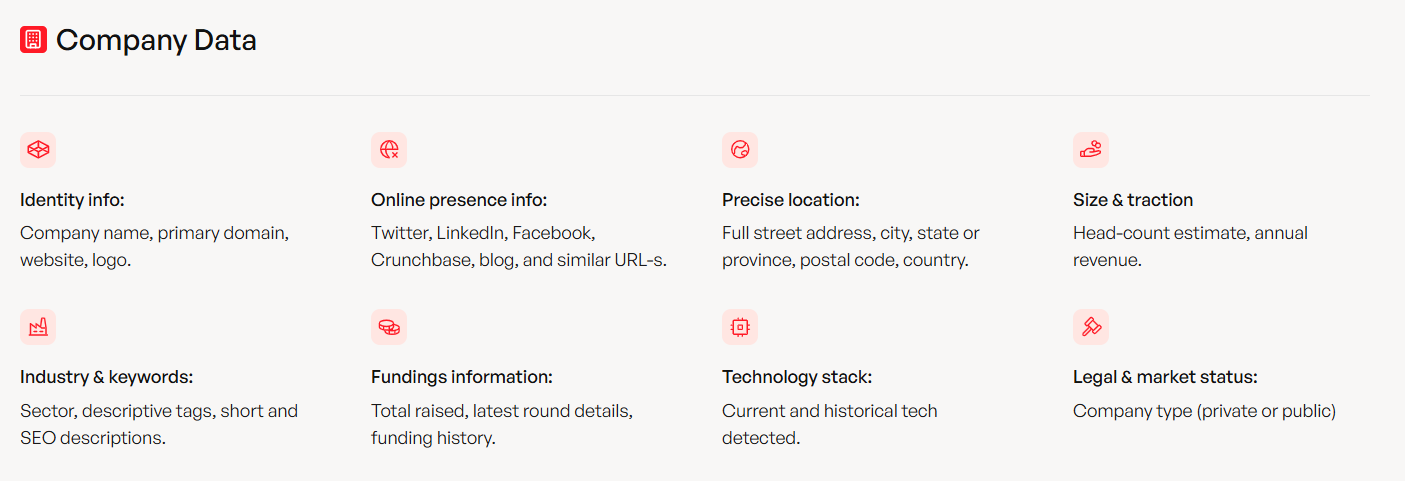

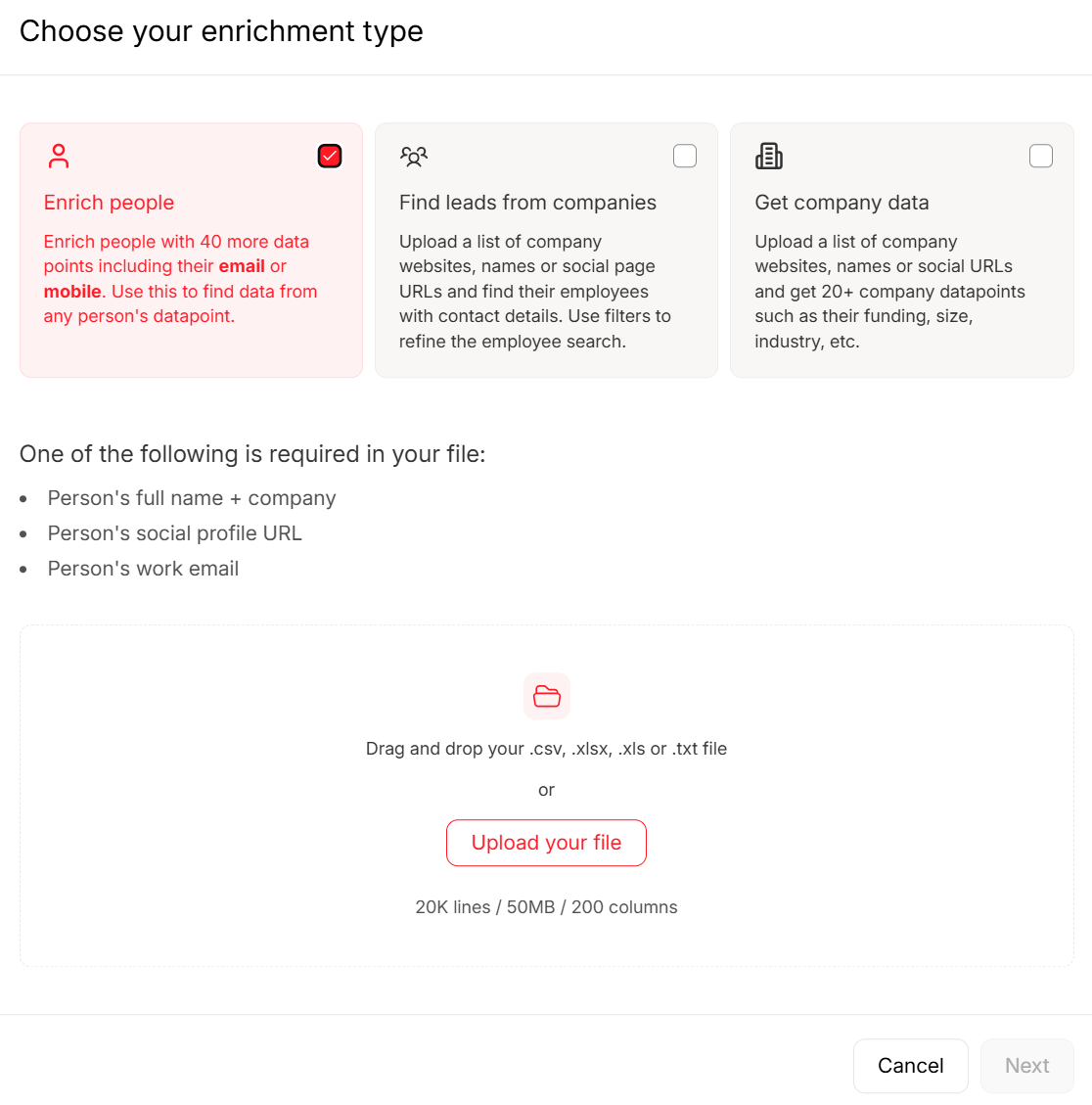

Fix your data before you score it. 60% of marketers cite bad or incomplete data as their top barrier. Enrich firmographic gaps - NAICS codes, headcount, revenue, plant locations - before your scoring model touches a single lead. (If you need a baseline framework, start with a lead scoring model and adapt it to industrial signals.)

Start with a fit x intent matrix. Grade companies A-D on firmographic fit, score behavioral intent 1-4, and route based on the intersection. Graduate to ML when you've got 12+ months of clean conversion data.

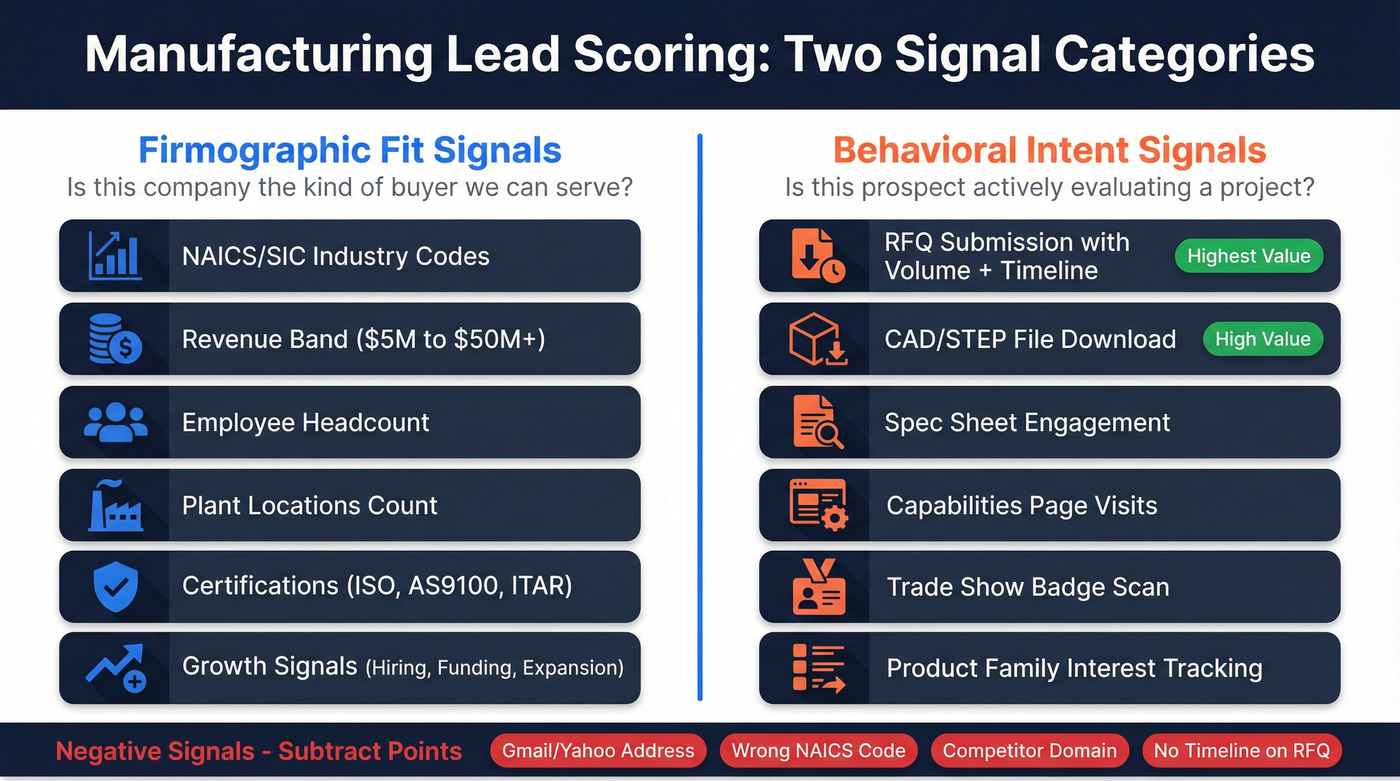

Scoring Criteria for Manufacturers

Generic lead scoring treats all behaviors equally. A webinar registration scores the same whether the attendee is a procurement director at a Tier 1 automotive supplier or a marketing intern at a SaaS company. In manufacturing, the signals that predict a real opportunity are entirely different - and they split cleanly into two categories.

Firmographic Fit Signals

Fit scoring answers one question: is this company the kind of buyer we can actually serve?

NAICS/SIC codes are your first filter. A company classified under NAICS 332 (fabricated metal products) is a different prospect than one under 541 (professional services), even if both visited your capabilities page. Layer on revenue bands, employee headcount, and number of plant locations. Then check certifications: ISO 9001, AS9100, ITAR, NADCAP. A shop running three plants with AS9100 certification and $50M+ revenue is a categorically different lead than a 15-person job shop with no certifications - and your scoring model should reflect that gap immediately.

Growth signals add a timing dimension. Companies that recently announced expansion, posted new engineering roles, or closed a funding round are more likely to have active projects and allocated budget. (This is the same logic behind hiring signals and other trigger-based prospecting.)

These signals are hard to track manually, which is where enrichment matters. Prospeo's 30+ search filters can fill in missing firmographic data like headcount growth, funding events, revenue ranges, and technographics so your scoring model works with complete records instead of guessing. If you want the broader data taxonomy, see firmographic and technographic data.

Behavioral Intent Signals

A prospect who downloads your STEP file and submits an RFQ with annual volume and certification requirements is telling you they have an active project. Someone who read a blog post is telling you nothing.

The highest-value industrial intent signals, roughly in order:

- RFQ form submission - especially when the form captures part type, annual volume, required certifications, timeline, and whether drawings are available. Each qualifying field the prospect fills in adds confidence. (If you’re formalizing this, use an inbound lead qualification checklist so sales and marketing score the same way.)

- CAD/STEP file downloads - this is a technical-intent signal that barely exists in SaaS scoring models. Someone downloading your 3D model is evaluating your part for fit in their assembly. That's bottom-funnel behavior. Gate CAD/STEP file access behind a short form capturing role, company, and project details - this turns a download into a qualifying event.

- Spec sheet engagement - downloading material specs, tolerance guides, or finishing options signals active project evaluation.

- Capabilities page visits - a single capabilities page visit outweighs multiple email opens. It means the prospect is checking whether you can actually do the work.

- Trade show lead source - badge scans from IMTS, FABTECH, or industry-specific shows carry more weight than organic blog traffic, but only when paired with qualifying data.

- Product-family interest tracking - which product lines or service categories a prospect engages with tells sales what to lead with in the first conversation.

Negative signals matter too. Gmail and Yahoo addresses, NAICS codes outside your serviceable range, competitor domains, and "no timeline" responses on RFQ forms should actively subtract points. (For setup patterns and examples, see negative lead scoring.)

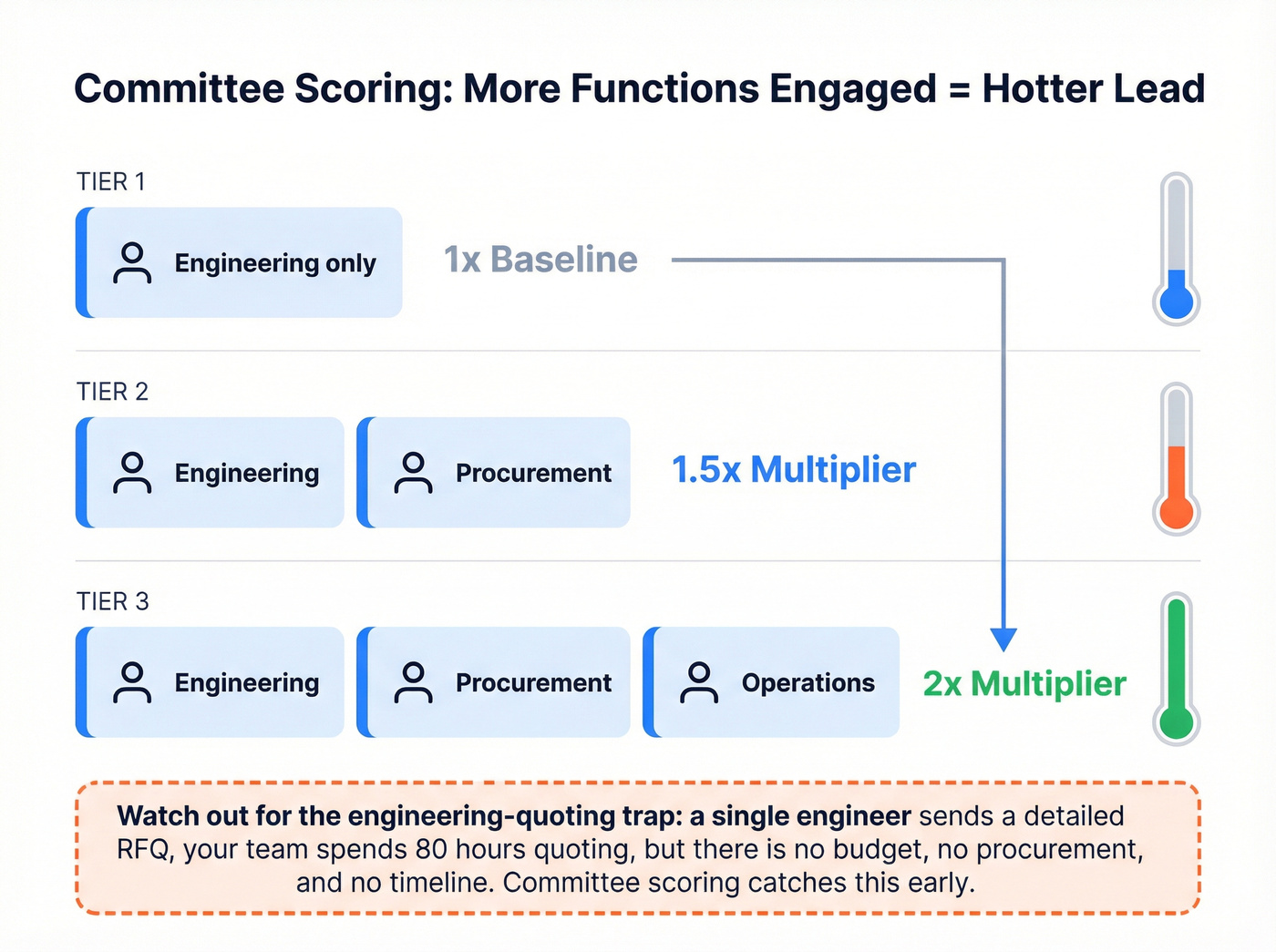

Multi-Stakeholder Committee Scoring

Manufacturing buying decisions involve operations, engineering, procurement, finance, and leadership. A lead where only one function has engaged scores lower than one where engineering downloaded CAD files, procurement visited the capabilities page, and operations submitted an RFQ. This is essentially multithreading applied to scoring.

Let's be honest about the biggest time sink in manufacturing sales: the engineering-quoting trap. In our experience, it burns more sales hours than any other single issue. An engineer at a shop with under $5M in revenue sends a detailed RFQ, your applications team spends 80 hours on a custom quote, and it turns out there's no budget, no procurement involvement, and no timeline. Committee scoring prevents this by tracking engagement breadth - how many distinct functions at the same account have interacted with your content - giving you a much clearer picture of whether a deal is real.

Apply a multiplier based on how many distinct functions at the account have engaged. One function engaged = baseline. Two functions = 1.5x. Three or more = 2x. This doesn't replace fit and intent scoring; it layers on top. A high-fit, high-intent lead with multi-stakeholder engagement is your hottest opportunity. A high-intent lead with only one contact engaged needs nurturing, not a sales handoff.
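The multiplier rule is small enough to sketch directly. This is a minimal illustration, not a CRM integration: the function names and the idea of passing in a set of engaged functions are assumptions made for the example.

```python
def committee_multiplier(functions_engaged: set[str]) -> float:
    """Engagement-breadth multiplier: 1 function = 1.0, 2 = 1.5, 3 or more = 2.0."""
    distinct = len(functions_engaged)
    if distinct >= 3:
        return 2.0
    if distinct == 2:
        return 1.5
    return 1.0  # one function (or none yet) stays at baseline


def committee_adjusted_score(base_score: float, functions_engaged: set[str]) -> float:
    """Layer the multiplier on top of an existing fit/intent score."""
    return base_score * committee_multiplier(functions_engaged)


# Example: engineering and procurement have both engaged at the same account.
adjusted = committee_adjusted_score(40, {"engineering", "procurement"})
```

Using a set means repeat engagement from the same function doesn't inflate the multiplier; only breadth does.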

Building Your Fit x Intent Matrix

Instead of a single blended score, grade on two axes: firmographic fit (A-D) and behavioral intent (1-4). The intersection determines routing. (If you’re running ABM, this maps cleanly to ABM account prioritization.)

| | Intent 1 (Hot) | Intent 2 (Warm) | Intent 3 (Cool) | Intent 4 (Cold) |
|---|---|---|---|---|
| Fit A | SQL - Sales now | SQL - Sales 48hr | MQL - Nurture | Monitor |
| Fit B | SQL - Sales 48hr | MQL - Nurture+ | MQL - Nurture | Monitor |
| Fit C | MQL - Nurture+ | MQL - Nurture | Monitor | Disqualify |
| Fit D | Monitor | Monitor | Disqualify | Disqualify |
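If you want to wire the matrix into a workflow, it reduces to a lookup table. A minimal sketch, with the routing labels copied from the matrix; the `ROUTING` and `route` names are made up for this example.

```python
# Rows are fit grades; columns are intent levels 1 (hot) through 4 (cold).
ROUTING = {
    "A": ["SQL - Sales now", "SQL - Sales 48hr", "MQL - Nurture", "Monitor"],
    "B": ["SQL - Sales 48hr", "MQL - Nurture+", "MQL - Nurture", "Monitor"],
    "C": ["MQL - Nurture+", "MQL - Nurture", "Monitor", "Disqualify"],
    "D": ["Monitor", "Monitor", "Disqualify", "Disqualify"],
}


def route(fit_grade: str, intent_level: int) -> str:
    """Return the routing action for a fit grade (A-D) and intent level (1-4)."""
    if fit_grade not in ROUTING:
        raise ValueError(f"unknown fit grade: {fit_grade!r}")
    if intent_level not in (1, 2, 3, 4):
        raise ValueError(f"intent level must be 1-4, got {intent_level!r}")
    return ROUTING[fit_grade][intent_level - 1]
```

Keeping the matrix as data rather than nested `if` statements makes it easy to change a single cell after a calibration review.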

Fit grading criteria:

- A: Target NAICS, $25M+ revenue, 100+ employees, required certifications, growth signals present

- B: Adjacent NAICS, $10M to under $25M revenue, 50-99 employees, some certifications

- C: Broad manufacturing NAICS, $5M to under $10M revenue, 25-49 employees, no certifications

- D: Non-manufacturing NAICS, under $5M revenue, or disqualifying attributes
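One way to turn these criteria into a grade is a "weakest dimension wins" rule. The sketch below covers only three of the dimensions (NAICS tier, revenue, headcount) and rests on several assumptions of our own: the worst-of rule itself, the `naics_tier` labels, treating shared boundaries ($25M, 100 employees) as the higher grade, and grading any headcount under 50 as C because the criteria list no headcount cutoff for D. Certifications and growth signals are left out for brevity.

```python
# Hypothetical tier labels; map your own NAICS lists onto them.
NAICS_TIER_GRADE = {
    "target": "A",
    "adjacent": "B",
    "broad_manufacturing": "C",
    "non_manufacturing": "D",
}


def revenue_grade(revenue_usd_millions: float) -> str:
    if revenue_usd_millions >= 25:
        return "A"
    if revenue_usd_millions >= 10:
        return "B"
    if revenue_usd_millions >= 5:
        return "C"
    return "D"


def headcount_grade(employees: int) -> str:
    if employees >= 100:
        return "A"
    if employees >= 50:
        return "B"
    return "C"  # assumption: small headcount alone does not disqualify


def fit_grade(naics_tier: str, revenue_usd_millions: float, employees: int) -> str:
    """Overall fit is the weakest of the individual grades."""
    grades = [
        NAICS_TIER_GRADE[naics_tier],
        revenue_grade(revenue_usd_millions),
        headcount_grade(employees),
    ]
    return max(grades)  # "D" sorts after "A", so max() picks the worst grade
```

A stricter or looser rule (for example, averaging the dimensions) is just as defensible; the point is to make the rule explicit so sales and marketing grade the same way.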

Intent scoring criteria:

- 1 (Hot): RFQ with volume + timeline + drawings, or CAD download + capabilities page + return visit within 7 days

- 2 (Warm): Spec sheet download + capabilities page, or trade show scan + follow-up engagement

- 3 (Cool): Blog/content engagement only, single capabilities page visit, email opens without clicks

- 4 (Cold): No behavioral engagement, or only negative signals
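The intent levels can be sketched as set checks over the signals you've recorded for a lead. The signal names here are hypothetical labels, not fields from any particular marketing platform, and the sketch assumes that any positive signal falling short of the hot or warm combinations lands at level 3.

```python
HOT_COMBOS = [
    {"rfq_volume", "rfq_timeline", "rfq_drawings"},
    {"cad_download", "capabilities_visit", "return_visit_7d"},
]
WARM_COMBOS = [
    {"spec_sheet_download", "capabilities_visit"},
    {"trade_show_scan", "follow_up_engagement"},
]
# Every signal that counts as engagement; negative signals are not in here.
POSITIVE_SIGNALS = set().union(*HOT_COMBOS, *WARM_COMBOS) | {"blog_visit", "email_open"}


def intent_level(signals: set[str]) -> int:
    """Return 1 (hot) through 4 (cold) for a lead's observed signals."""
    if any(combo <= signals for combo in HOT_COMBOS):
        return 1
    if any(combo <= signals for combo in WARM_COMBOS):
        return 2
    if signals & POSITIVE_SIGNALS:
        return 3  # some engagement, but no qualifying combination
    return 4  # no engagement, or only negative signals
```

A lead with only `{"consumer_email"}` recorded comes back as level 4, which matches the "only negative signals" rule.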

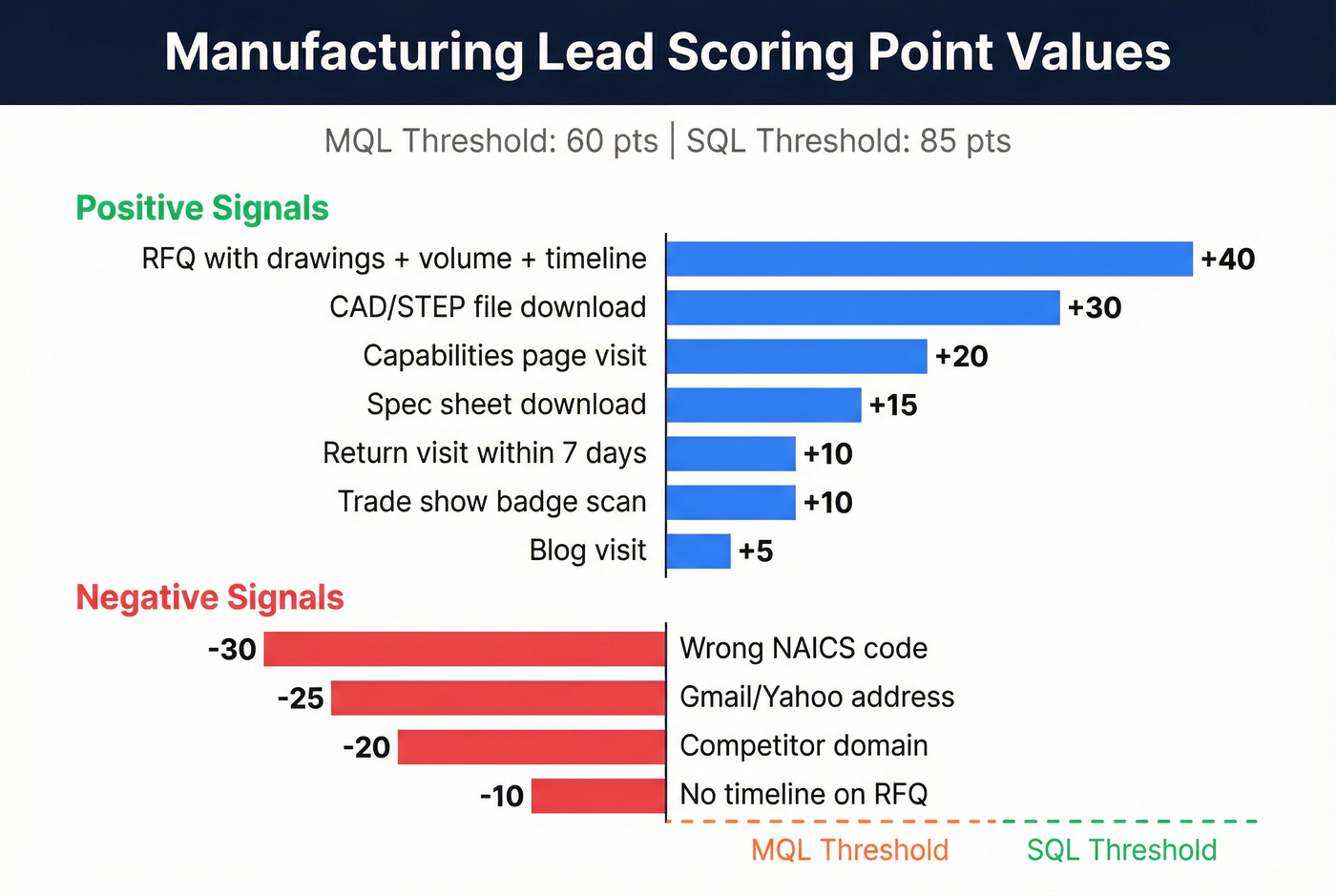

Example Point Values

The matrix gives you the framework. These point values make it implementable:

| Signal | Points |
|---|---|
| RFQ with drawings + volume + timeline | +40 |
| CAD/STEP file download | +30 |
| Capabilities page visit | +20 |
| Spec sheet download | +15 |
| Trade show badge scan | +10 |
| Blog visit | +5 |
| Return visit within 7 days | +10 |
| Gmail/Yahoo address | -25 |
| Wrong NAICS code | -30 |
| Competitor domain | -20 |
| "No timeline" on RFQ | -10 |

MQL threshold: 60 points. SQL threshold: 85 points. Calibrate these against your actual conversion data after 90 days. If your MQL-to-SQL conversion rate drops below 20%, your model is handing leads to sales too early. Tighten your thresholds or add qualifying criteria. (For the operational handoff, build an SLA-style workflow like RevOps lead scoring.)
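Here's a rough sketch of the point table and thresholds as code. The point values and thresholds come from the table above; the signal keys, the choice to count each signal once per lead, and treating the thresholds as inclusive are assumptions for the example.

```python
SIGNAL_POINTS = {
    "rfq_complete": 40,        # RFQ with drawings + volume + timeline
    "cad_download": 30,
    "capabilities_visit": 20,
    "spec_sheet_download": 15,
    "trade_show_scan": 10,
    "blog_visit": 5,
    "return_visit_7d": 10,
    "consumer_email": -25,     # Gmail/Yahoo address
    "wrong_naics": -30,
    "competitor_domain": -20,
    "rfq_no_timeline": -10,
}
MQL_THRESHOLD = 60
SQL_THRESHOLD = 85


def lead_score(signals) -> int:
    """Sum points for the distinct signals seen on a lead (each counted once)."""
    return sum(SIGNAL_POINTS[s] for s in set(signals))


def stage(score: int) -> str:
    """Map a score onto the MQL/SQL thresholds (inclusive, by assumption)."""
    if score >= SQL_THRESHOLD:
        return "SQL"
    if score >= MQL_THRESHOLD:
        return "MQL"
    return "Below MQL"


# A CAD download, a capabilities visit, and a return visit within 7 days.
example = lead_score(["cad_download", "capabilities_visit", "return_visit_7d"])
```

An unknown signal key raises a `KeyError` on purpose, so a typo in a tracking event fails loudly instead of silently scoring zero.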


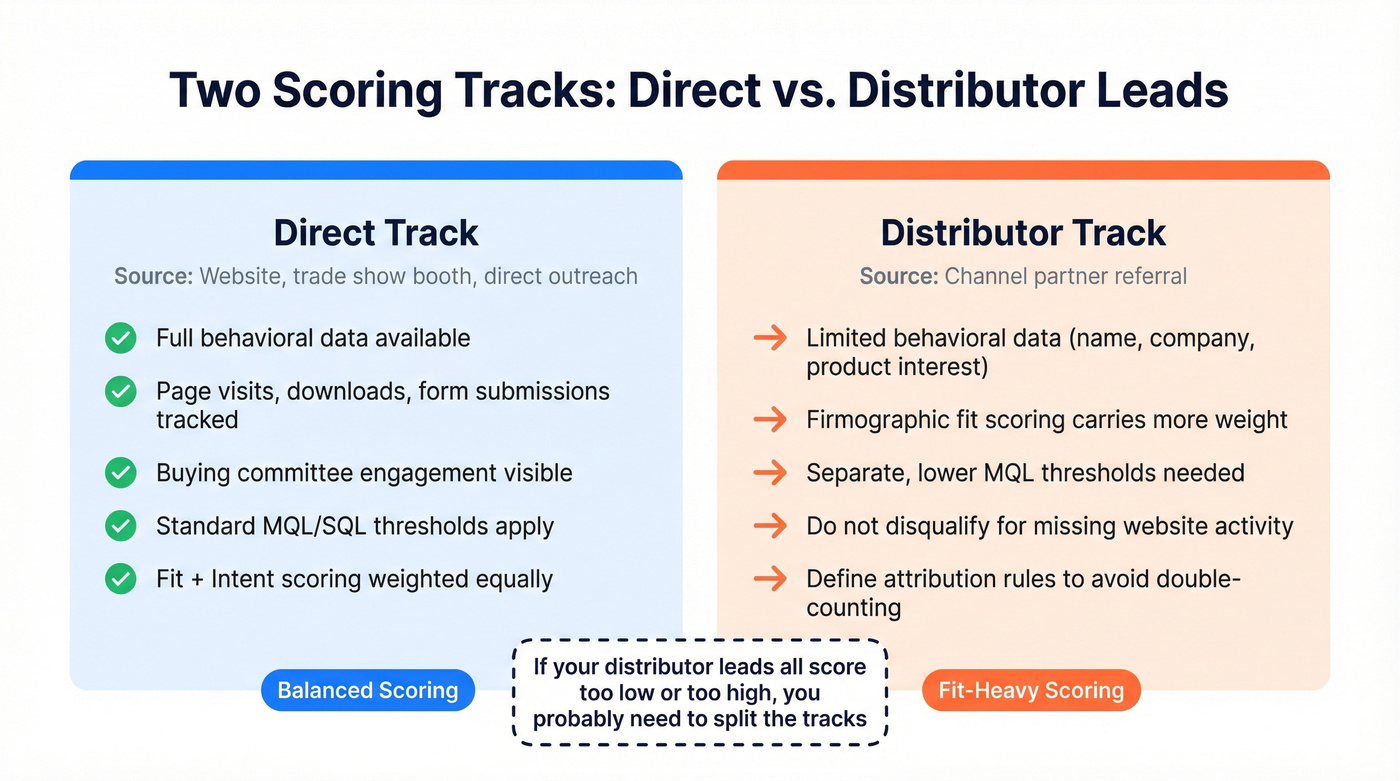

Distributor vs. Direct Leads

Score distributor-referred leads on a separate track. Treating them identically to direct website leads is a guaranteed way to misallocate sales resources.

Direct track: The lead came through your website, trade show booth, or direct outreach. You've got full behavioral data - page visits, downloads, form submissions - and can track engagement across the buying committee.

Distributor track: The lead came through a channel partner. You'll typically have less behavioral data, often just a name, company, and product interest, so firmographic fit scoring carries more weight. Set separate MQL thresholds. A distributor lead with strong fit but limited behavioral data shouldn't be disqualified just because you can't see their website activity. Define clear attribution rules so you aren't double-counting leads that touch both channels.
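A two-track setup might look something like the sketch below. Every number in it is a placeholder invented for illustration (the weights, the thresholds, and the assumption that fit and intent are each scored 0-100); the only thing taken from the text above is the shape: distributor leads lean harder on fit and get their own MQL threshold.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Track:
    fit_weight: float
    intent_weight: float
    mql_threshold: float


# Hypothetical values; calibrate against your own conversion data.
TRACKS = {
    "direct": Track(fit_weight=0.5, intent_weight=0.5, mql_threshold=60),
    "distributor": Track(fit_weight=0.8, intent_weight=0.2, mql_threshold=50),
}


def track_score(track: str, fit_points: float, intent_points: float) -> float:
    """Blend fit and intent using the weights of the lead's track."""
    t = TRACKS[track]
    return t.fit_weight * fit_points + t.intent_weight * intent_points


def is_mql(track: str, fit_points: float, intent_points: float) -> bool:
    return track_score(track, fit_points, intent_points) >= TRACKS[track].mql_threshold
```

With these placeholder settings, a lead with strong fit (70) and almost no visible behavior (10) misses MQL on the direct track but qualifies on the distributor track, which is the behavior the separate tracks are meant to produce.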

We've watched teams spend quarters debugging their scoring model when the real fix was splitting the tracks. If your distributor leads all score too low or all score too high, that's your problem.

Data Quality: The Real Bottleneck

Look - if your average contract value is under $50K, you don't need a sophisticated scoring model. You need clean data.

Poor data quality costs companies roughly 12% of revenue, and 70% of companies struggle integrating their CRM with marketing automation. That means scoring rules fire on incomplete, stale, or duplicated records. Your trade show badge scans are the worst offenders: missing NAICS codes, consumer email addresses, and job titles that haven't been updated in two years. Companies that invest in enrichment see 25% more SQLs and 25% shorter sales cycles, which tells you where the real gains are. (If you’re cleaning the foundation, start with CRM hygiene and a data quality scorecard.)
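Before any scoring rule fires, a simple completeness check can flag records that need enrichment first. A minimal sketch, assuming each lead is a plain dictionary; the field names are hypothetical and should be mapped to whatever your CRM calls them.

```python
REQUIRED_FIELDS = ("naics_code", "employee_count", "annual_revenue", "plant_locations")
CONSUMER_DOMAINS = {"gmail.com", "yahoo.com"}


def data_gaps(record: dict) -> list[str]:
    """List the problems that should be fixed before this record is scored."""
    gaps = [field for field in REQUIRED_FIELDS if record.get(field) in (None, "")]
    email = record.get("email") or ""
    domain = email.rsplit("@", 1)[-1].lower() if "@" in email else ""
    if not domain:
        gaps.append("email")
    elif domain in CONSUMER_DOMAINS:
        gaps.append("consumer_email")
    return gaps


def ready_to_score(record: dict) -> bool:
    return not data_gaps(record)


# A typical badge scan: consumer email, no NAICS code, no revenue.
badge_scan = {
    "email": "j.doe@gmail.com",
    "naics_code": "",
    "employee_count": 120,
    "annual_revenue": None,
    "plant_locations": 2,
}
problems = data_gaps(badge_scan)
```

Running this over a trade show CSV gives you a quick count of how many records are scoreable today versus how many need enrichment.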

Enrichment is step zero. Prospeo's CRM enrichment returns 50+ data points per contact with an 83% match rate - NAICS codes, revenue, headcount, technographics, funding signals, verified emails, and verified mobile numbers. The 98% email accuracy means your outreach sequences don't bounce, and the 7-day refresh cycle keeps records current. Upload your trade show CSV, enrich it in bulk, and your scoring model has complete records to work with instead of gaps.

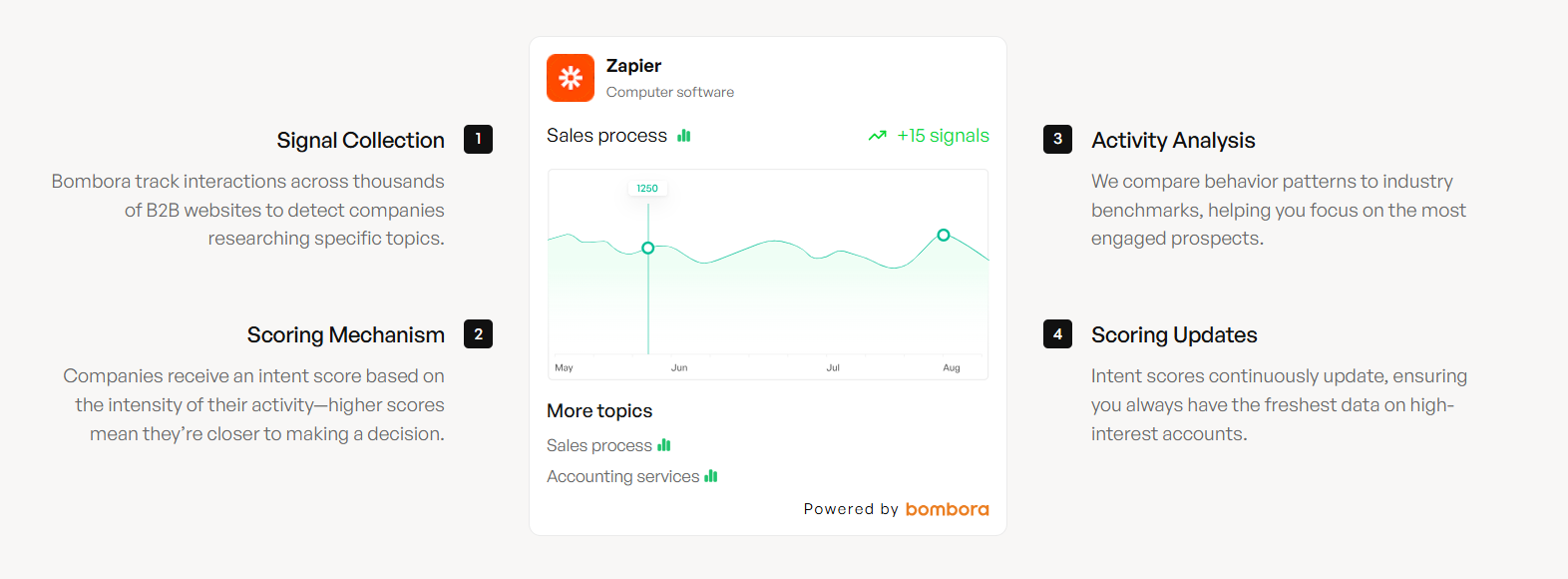

AI/ML Scoring for Manufacturers

Rules-based scoring works as a starting point. Once you've got 12+ months of clean conversion data, ML-based scoring surfaces patterns you'd never encode manually. (If you’re deciding when to switch, use an AI lead qualification readiness checklist.)

The implementation path: collect historical data covering firmographics, engagement, lead source, product interest, and outcome. Engineer manufacturing-specific features - CAD downloads in the first two weeks, capabilities page visits, trade show source, expansion signals. Train a model using gradient boosting or random forests, which handle the uneven signal distribution common in manufacturing where a single capabilities page visit outweighs 50 email opens. Score leads as probabilities and retrain quarterly. Manufacturing teams using ML scoring report an 18% conversion lift over rules-based models.

Route based on score bands on a 0-10 scale (for example, the model's conversion probability multiplied by 10): 8+ goes to sales immediately, 5-7 enters a structured nurture track, and below 5 gets low-touch automation.
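The band routing can be sketched in a few lines. This assumes the bands refer to a 0-10 score obtained by multiplying the model's predicted conversion probability by 10, and that scores between 7 and 8 fall into the nurture band; both are our reading, and the route labels are made up for the example.

```python
def route_by_probability(probability: float) -> str:
    """Map a predicted conversion probability (0.0-1.0) to a routing band."""
    if not 0.0 <= probability <= 1.0:
        raise ValueError(f"probability must be between 0 and 1, got {probability!r}")
    score = probability * 10  # assumed 0-10 scale
    if score >= 8:
        return "sales_now"
    if score >= 5:
        return "structured_nurture"
    return "low_touch_automation"
```

For instance, a lead the model scores at 0.83 goes straight to sales, while one at 0.62 enters the nurture track.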

Skip ML if you don't have at least 12 months of conversion data and a few hundred closed-won records. You'll overfit on noise and end up worse than a simple rules-based model. Expect 4-8 weeks to implement rules-based scoring with enriched data, and 8-12+ weeks for a proper ML model.

Common Mistakes

Premature handoff. If your MQL-to-SQL conversion is below 20%, you're wasting sales capacity. Tighten scoring thresholds or add qualifying criteria before routing. (For a structured fix, see how to nurture MQLs to SQLs.)

Treating all content engagement equally. A buyer's guide download and a trends webinar registration shouldn't score the same. Weight content by purchase intent stage, not just engagement. Extend this thinking beyond initial conversion too - score for upsell and cross-sell potential once an account is active.

Ignoring negative signals. Consumer emails, wrong NAICS codes, competitor domains, "no timeline" on RFQ forms - these should actively subtract points. Most models only add, and that's how unqualified leads sneak through.

Never recalibrating. Your scoring model isn't a set-it-and-forget-it system. Review thresholds quarterly against actual conversion data. The consensus on r/sales is that most teams build a model, launch it, and never touch it again - then blame the model when pipeline quality drops. The model was probably fine at launch. Your market shifted.
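A quarterly review can start with one number. The sketch below checks MQL-to-SQL conversion against the 20-30% band used in this guide; the function names and the three advice labels are illustrative.

```python
def mql_to_sql_rate(mqls: int, sqls: int) -> float:
    """Share of MQLs in the review period that were accepted as SQLs."""
    if mqls <= 0:
        raise ValueError("need at least one MQL to compute a conversion rate")
    return sqls / mqls


def calibration_advice(mqls: int, sqls: int, low: float = 0.20, high: float = 0.30) -> str:
    """Suggest a threshold adjustment based on the conversion rate."""
    rate = mql_to_sql_rate(mqls, sqls)
    if rate < low:
        return "tighten"  # handing leads to sales too early
    if rate > high:
        return "loosen"   # likely too conservative
    return "hold"


# Example quarter: 200 MQLs, 30 of which became SQLs (15%).
advice = calibration_advice(200, 30)
```

Treat the output as a prompt for a conversation with sales, not an automatic threshold change.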


FAQ

What's a good MQL-to-SQL conversion rate for manufacturers?

20-30% is healthy for industrial companies. Below 20% means your scoring model hands leads to sales too early - tighten your fit or intent thresholds. Above 30% likely means you're being too conservative and leaving pipeline on the table. Track this monthly and adjust.

How long does it take to implement lead scoring?

A rules-based model with enriched data takes 4-8 weeks, including CRM cleanup and threshold calibration. An ML-based model requires 12+ months of historical conversion data and 8-12+ weeks to build, test, and deploy. Start rules-based, then graduate to ML once you have the data volume.

How does lead scoring help prioritize sales accounts in manufacturing?

The fit x intent matrix ranks every account by two dimensions simultaneously, so your sales team focuses on A1 and A2 accounts first - the ones with the strongest firmographic match and the most buying signals. Reps work leads in the order most likely to close, which directly reduces wasted quoting hours and shortens the path to revenue.

What's the best free tool for enriching manufacturing lead data?

Prospeo offers a free tier with 75 email credits and 100 Chrome extension credits per month - enough to test enrichment on a small lead list. HubSpot's free CRM includes basic scoring rules. For teams running real campaigns, Prospeo's paid plans return 50+ data points per contact at roughly $0.01 per lead, which is 90% cheaper than enterprise alternatives.